A new tool, GroundedAI, offers a unified, type-safe Python API designed to simplify the evaluation of outputs from various large language model (LLM) applications. The core of the system is a single evaluate() function that can work across different LLM backends, including its own "SLM" models, HuggingFace, OpenAI, and Anthropic.

GroundedAI's central proposition is standardization, aiming to provide a single, consistent method for assessing LLM performance regardless of the underlying model or provider. This approach seeks to streamline the often complex and fragmented process of determining if an LLM's output is accurate, relevant, or free from undesirable traits like hallucinations.
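The idea of one evaluate() entry point over interchangeable backends can be illustrated with a minimal sketch. This is not the grounded-ai API: the names `EvaluationInput`, `EvaluationResult`, `Backend`, and `KeywordOverlapBackend` are hypothetical, and the toy word-overlap scorer merely stands in for a real judge model.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class EvaluationInput:
    """What gets judged: a model response plus the context it should rely on."""
    response: str
    context: str


@dataclass(frozen=True)
class EvaluationResult:
    label: str    # "grounded" or "hallucinated" in this sketch
    score: float  # 0.0 to 1.0, higher means better supported by the context
    backend: str  # which backend produced the verdict


class Backend(Protocol):
    """Hypothetical interface every provider adapter would implement."""
    name: str

    def judge(self, item: EvaluationInput) -> EvaluationResult: ...


class KeywordOverlapBackend:
    """Toy stand-in for a judge model: fraction of response words found in the context."""
    name = "keyword-overlap"

    def judge(self, item: EvaluationInput) -> EvaluationResult:
        response_words = set(item.response.lower().split())
        context_words = set(item.context.lower().split())
        if not response_words:
            return EvaluationResult("hallucinated", 0.0, self.name)
        score = len(response_words & context_words) / len(response_words)
        label = "grounded" if score >= 0.5 else "hallucinated"
        return EvaluationResult(label, score, self.name)


def evaluate(backend: Backend, item: EvaluationInput) -> EvaluationResult:
    """Single entry point: callers never touch provider-specific code."""
    return backend.judge(item)
```

Swapping providers then means passing a different object that satisfies `Backend`, while the calling code and the result type stay the same.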

For those seeking to run models locally, GroundedAI provides an option to install specialized models on a user's own hardware. This requires an additional package installation and is recommended for users with GPUs. The system also supports high-precision auditing with models such as GPT-4o or Claude, which suggests a tiered approach to evaluation based on the accuracy required. Version 1.1.0 of the grounded-ai package was published on PyPI on March 20, 2026. The release includes attestations for transparency and security that record its publication via GitHub Actions and its distribution through PyPI.
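One way to read the tiered approach mentioned above is to let a cheap local judge decide first and escalate to a high-precision auditor only when the local verdict is uncertain. The sketch below is an assumption about how such a policy could look, not something taken from grounded-ai; `tiered_evaluate`, the `Judge` type, and the `min_confidence` threshold are all hypothetical.

```python
from typing import Callable, Tuple

# Hypothetical judge signature: (response, context) -> (label, confidence in [0, 1]).
Judge = Callable[[str, str], Tuple[str, float]]


def tiered_evaluate(
    response: str,
    context: str,
    local_judge: Judge,
    auditor: Judge,
    min_confidence: float = 0.8,
) -> Tuple[str, str]:
    """Return (label, tier), where tier names the judge that made the final call."""
    label, confidence = local_judge(response, context)
    if confidence >= min_confidence:
        # The local model is confident enough, so skip the costlier auditor.
        return label, "local"
    # Low confidence: defer to the high-precision auditor's verdict.
    label, _ = auditor(response, context)
    return label, "auditor"
```

The threshold trades cost against accuracy: raising it sends more cases to the auditor.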


Local LLM Deployment Options Expand

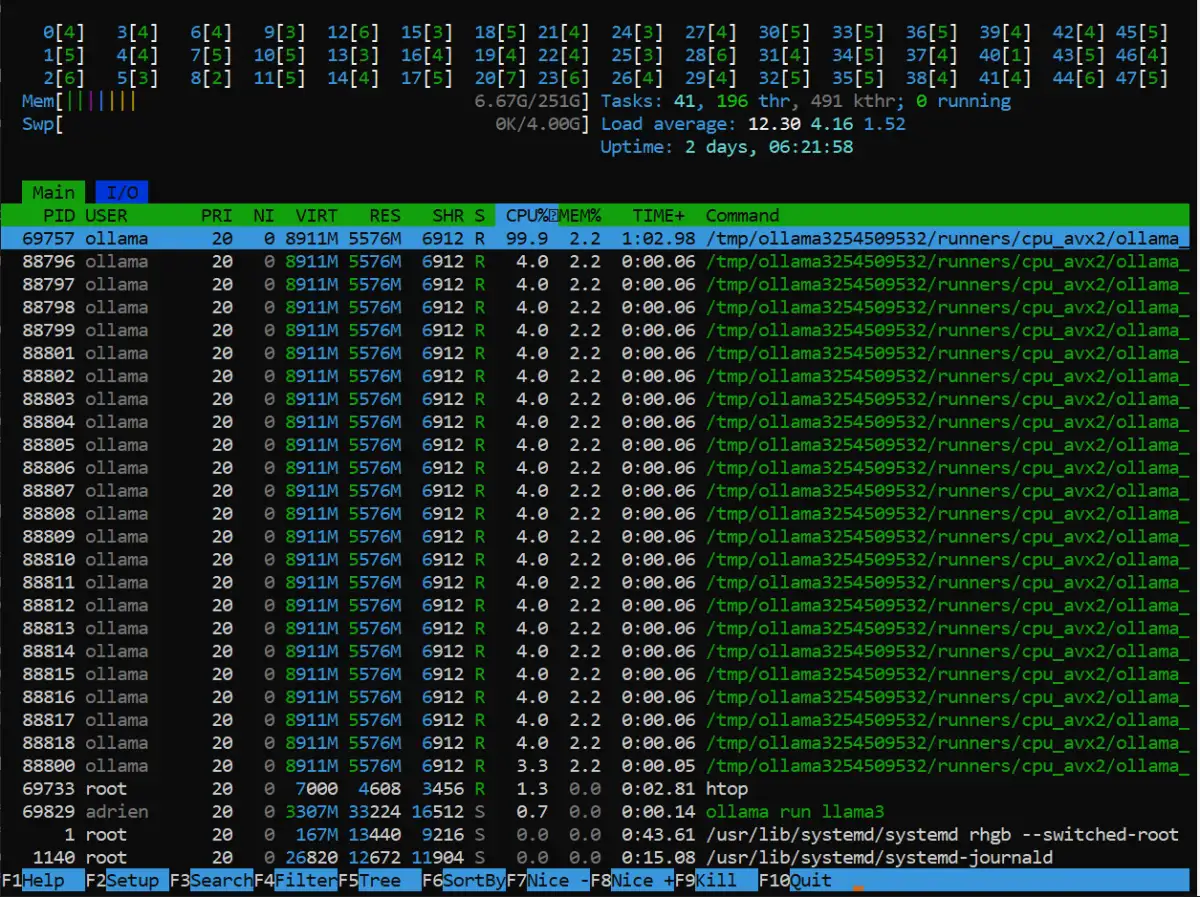

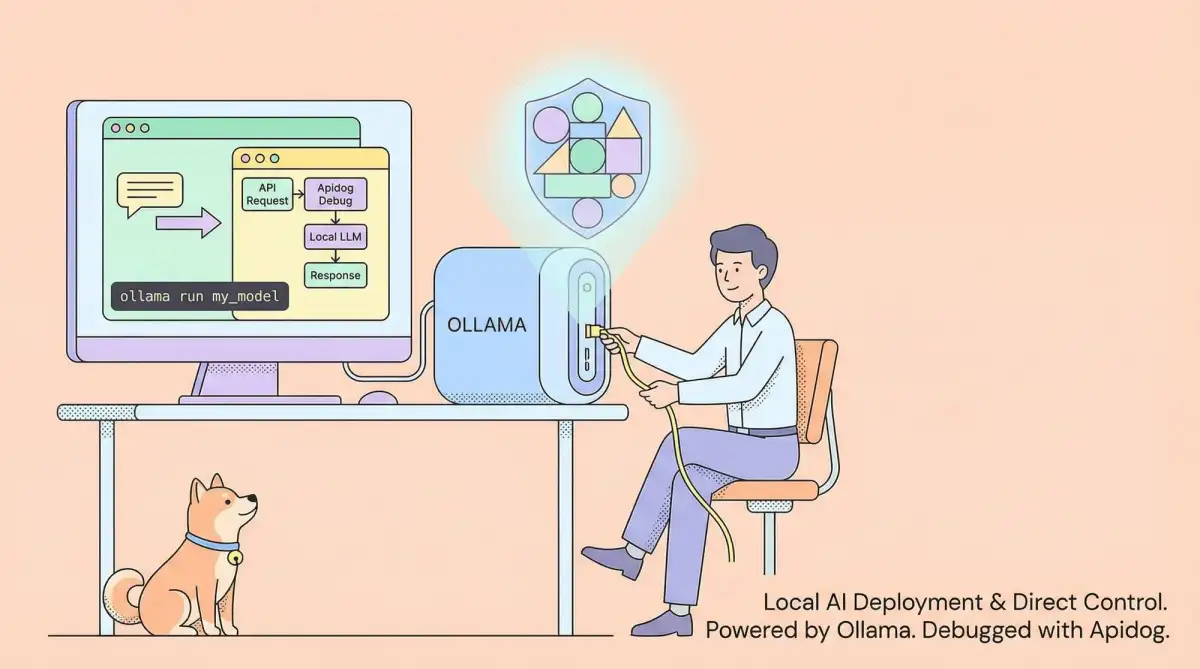

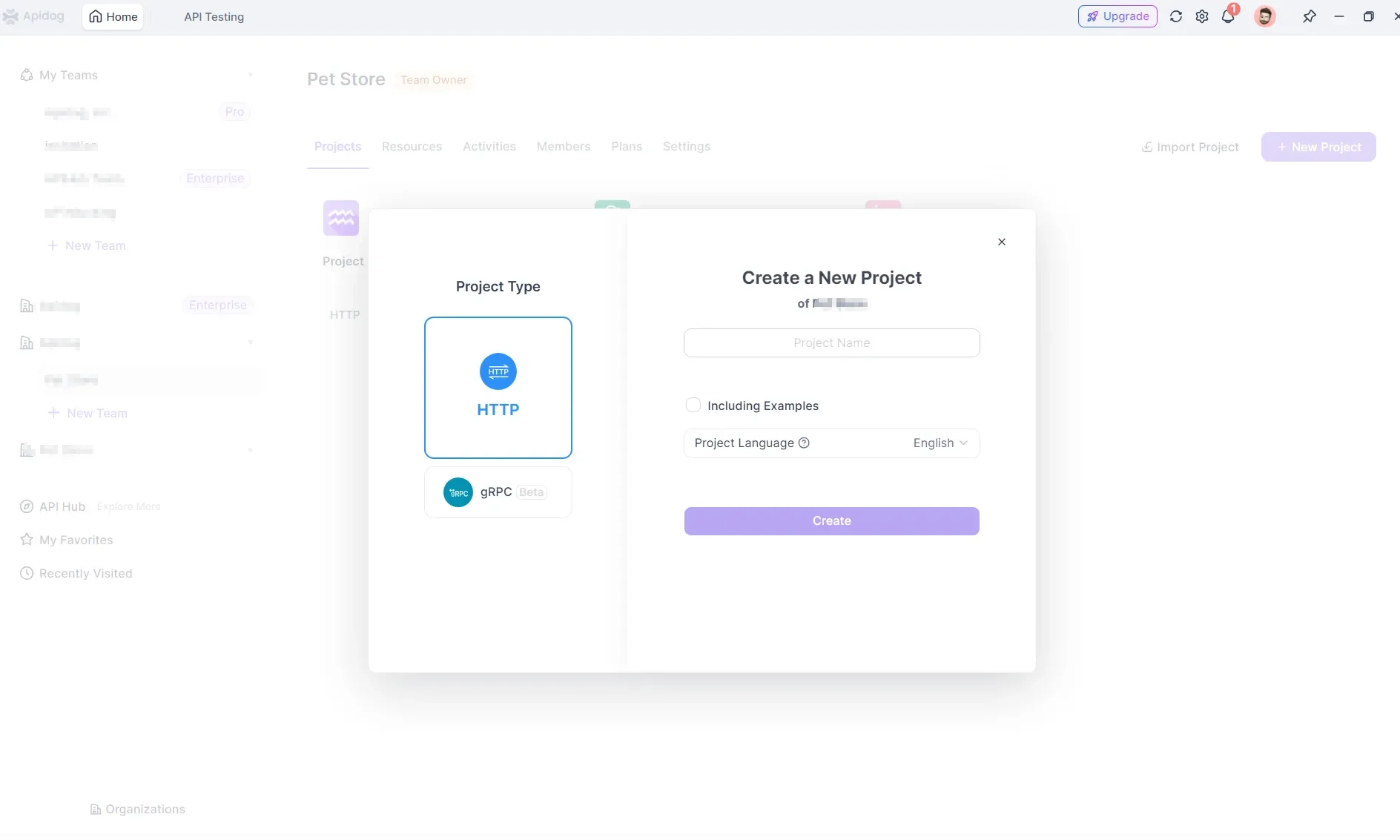

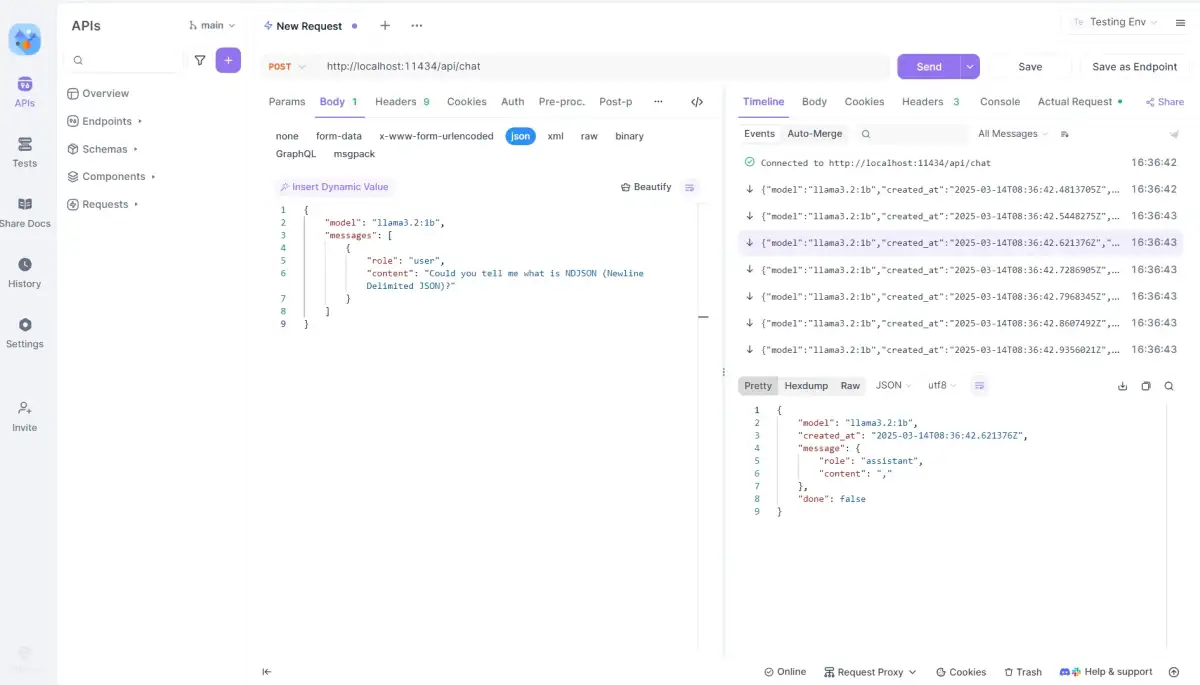

The development of GroundedAI coincides with a growing ecosystem for running LLMs locally. Ollama is frequently cited as a key platform here, handling the installation and deployment of various language models on user hardware. Guides published on February 1, 2026, and June 29, 2025, among others, detail how to install Ollama on Windows, Linux, and macOS and how to download and start models with simple commands such as ollama run deepseek-r1 or ollama run llama3.2.

Ollama allows users to run models directly on their own machines, offering a degree of control and privacy. Its local server listens on port 11434 by default. The platform also lets users customize model behavior and provides both a command-line interface and a REST API for interaction. Beyond basic deployment, tutorials cover integrating Ollama with third-party tools, using Python to call its API, and building more complex applications such as RAG systems or function-calling models. The prospect of "Your Own Private AI" is increasingly presented as a viable option for developers and enthusiasts.
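Calling Ollama from Python can be sketched with the standard library alone. The example assumes an Ollama server is already running locally on port 11434 and that the requested model has been downloaded; the helper names `build_generate_request` and `generate` are illustrative, not part of any Ollama client library.

```python
import json
import urllib.request

# Local Ollama address on the port mentioned above; change it if the server is configured differently.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(
    model: str, prompt: str, base_url: str = OLLAMA_URL
) -> urllib.request.Request:
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url=f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, timeout: float = 120.0) -> str:
    """Send the prompt to a running Ollama server and return the generated text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    # With "stream": False the server replies with one JSON object
    # whose "response" field holds the full completion.
    return body["response"]
```

With the server running, `generate("llama3.2", "Why is the sky blue?")` would return the model's answer as a string. Building the request separately from sending it keeps the payload easy to inspect without a live server.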
