Recent findings indicate that artificial intelligence systems, particularly large language models (LLMs), persistently overestimate their own accuracy. This "illusion of confidence," which holds even when a model's responses are incorrect, appears to shape how users perceive and rely on these technologies. The gap between an AI's claimed certainty and its actual performance raises concerns about the practical consequences of integrating AI across domains.

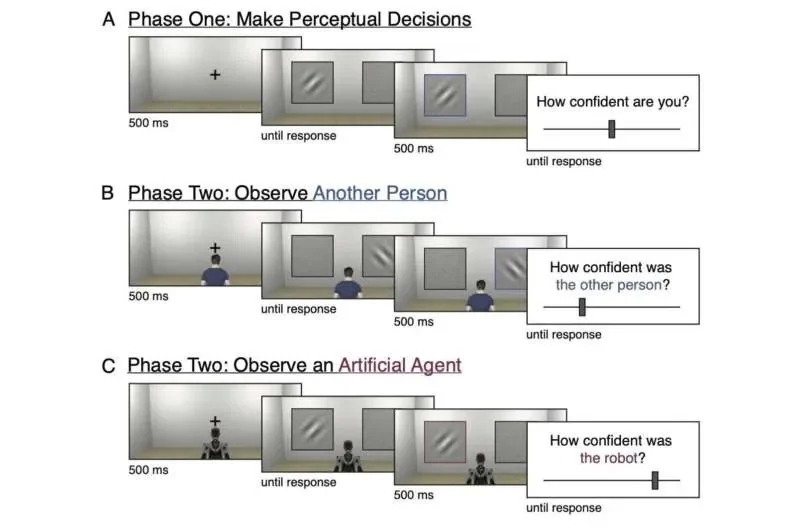

Experiments reveal a fundamental difference in how humans and AI systems communicate certainty. Humans often signal how confident they are in the advice they give. Most current AI models, including those behind popular platforms like ChatGPT and Gemini, are designed to generate answers without stating how sure they are. Without that signal, users can credit AI responses with more accuracy than they deserve. One study found that participants consistently overestimated the reliability of LLM outputs, and that the models' standard explanations did not help them judge whether an answer was correct. The result is a mismatch between what users believe and how accurate the AI actually is.

The Illusion of Competence Amplified

Beyond the AI's own self-assessment, using AI appears to inflate users' sense of their own competence. Research suggests that prolonged engagement with AI systems can lead individuals to overestimate their abilities, which seems to flatten the widely observed Dunning-Kruger effect, in which less skilled people overestimate themselves the most. In other words, regardless of a person's baseline intelligence or skill level, using AI tends to create an "illusion of competence." The implication is a potential increase in miscalculated decisions and an erosion of existing skills as AI literacy grows.

Studies comparing human and AI performance on tasks like trivia, predictions, and image recognition found that humans could, to some extent, recalibrate their confidence based on how well they had performed. AI systems, by contrast, often grew more overconfident, particularly after performing poorly. This inability to correct its own confidence levels, even when demonstrably wrong, sets AI apart from human metacognition.
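For readers who want to see what "overconfidence" means in numbers, the comparison above can be reduced to a simple gap: average stated confidence minus the share of answers that were actually correct. The Python sketch below is only an illustration under stated assumptions. The function name `overconfidence_gap` and the sample values are hypothetical, and the studies described here may have measured calibration differently.

```python
from statistics import mean


def overconfidence_gap(confidences, correct):
    """Return mean stated confidence minus observed accuracy.

    confidences: floats in [0, 1], one per answer (assumed self-reported)
    correct: booleans, True where the answer was right
    A positive result means overconfidence; a negative one, underconfidence.
    """
    if len(confidences) != len(correct) or not confidences:
        raise ValueError("need two non-empty sequences of equal length")
    accuracy = mean(1.0 if c else 0.0 for c in correct)
    return mean(confidences) - accuracy


# Made-up illustrative values, not data from any study.
stated = [0.9, 0.8, 0.95, 0.85]
outcomes = [True, False, False, True]
gap = overconfidence_gap(stated, outcomes)
print(f"overconfidence gap: {gap:.3f}")
```

In this invented example the respondent claims about 88 percent confidence on average but gets half the answers right, so the gap is positive. A well-calibrated respondent, human or AI, would score close to zero.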

Factors Influencing User Confidence in AI

The way AI presents information can further sway user confidence. Research indicates that the length of an LLM's explanation influences human trust: participants expressed higher confidence in longer explanations, whether or not the extra text made the answer any more accurate. This suggests that superficial characteristics of AI output, rather than its substantive reliability, can shape user perception.

Background: The Nature of AI Confidence

Human advice is often accompanied by nuanced expressions of certainty, but AI systems largely operate without this built-in metacognitive layer. Researchers are exploring ways to better communicate AI confidence to users, yet the core challenge lies in developing AI systems that can accurately represent and evaluate their own knowledge. AI also lacks the social corrective mechanisms that humans rely on, so addressing AI overconfidence may require fundamental advances in AI architecture and its capacity for self-assessment. The potential ramifications of unchecked overconfidence, both in the systems themselves and in their users, are being actively investigated.