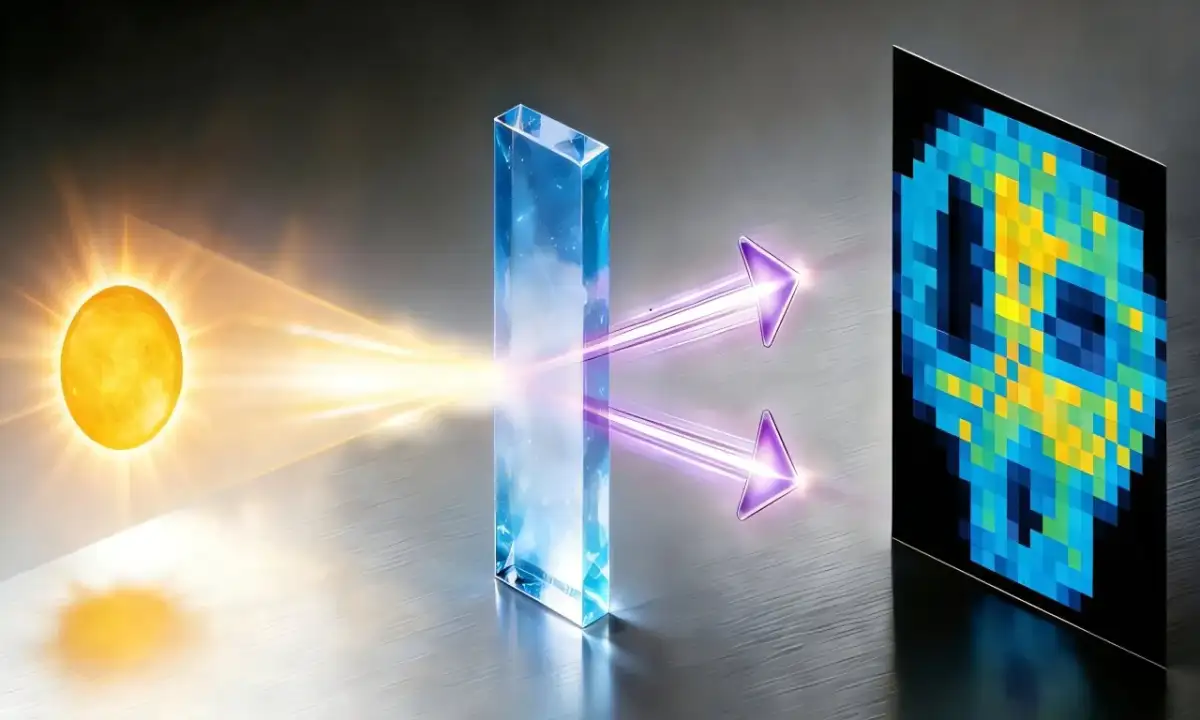

Machines are no longer merely witnessing the world; they are interpreting it without asking permission from the center. Edge AI shifts the labor of calculation from distant, centralized server farms directly into the circuits of local hardware—cameras, industrial sensors, and handheld tools. This untethering means data is crunched at the point of origin, stripping away the lag of a round-trip journey to the cloud. By running AI algorithms on-device, these tools make choices in milliseconds, bypassing the need for constant, high-speed network tethers.

"Edge AI refers to the deployment of artificial intelligence algorithms and AI models directly on local edge devices… enabling real-time data processing and analysis without constant reliance on cloud infrastructure." — IBM

Local Logic vs. Centralized Commands

The technical divide rests on where the "thinking" happens. While Cloud AI demands a thick pipe of bandwidth to move raw data to a remote facility, Edge AI works in the dirt and the dark of the local circuit. The two approaches compare as follows:

| Feature | Cloud AI | Edge AI |
|---|---|---|
| Decision Site | Distant Data Centers | Local Device (Sensor/Camera) |
| Latency | High (waiting for signal) | Low (real-time response) |
| Bandwidth | High demand (raw data transfer) | Low demand (only insights sent) |
| Power Needs | High (transmission is costly) | Optimized (via AI accelerators) |
| Reliability | Depends on network uptime | Operates offline |
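
To put rough numbers on the bandwidth row, the sketch below compares a camera that uploads its full video stream with one that uploads only short event messages. Every figure in it (the 4 Mbps stream, the 200-byte message, the 60 events per hour) is an assumption chosen for illustration, not a measurement from any real deployment.

```python
SECONDS_PER_DAY = 86_400


def daily_upload_bytes(bits_per_second: float) -> float:
    """Bytes uploaded in 24 hours at a constant bit rate."""
    return bits_per_second / 8 * SECONDS_PER_DAY


# Assumed figures for illustration only.
RAW_VIDEO_BPS = 4_000_000   # hypothetical compressed camera stream
EVENT_BYTES = 200           # hypothetical size of one "insight" message
EVENTS_PER_HOUR = 60        # hypothetical rate of events worth reporting

cloud_bytes = daily_upload_bytes(RAW_VIDEO_BPS)   # everything goes upstream
edge_bytes = EVENT_BYTES * EVENTS_PER_HOUR * 24   # only insights go upstream

print(f"cloud: {cloud_bytes / 1e9:.1f} GB/day")   # cloud: 43.2 GB/day
print(f"edge:  {edge_bytes / 1e3:.1f} kB/day")    # edge:  288.0 kB/day
```

Under these assumed numbers the gap is about five orders of magnitude per device, which is why the difference compounds quickly across a large fleet.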

The Hardware of Autonomy

The transition relies on specialized silicon and software layers that can handle the heavy math of Machine Learning without melting the battery.


AI Accelerators: Hardware like Google’s Edge TPU is designed for lean power consumption.

On-Device Inference: The CPUs already built into machines are now being tasked with jobs such as voice control for factory equipment and lighting.

Software Infrastructure: Frameworks now exist to manage and update these models across thousands of disconnected devices simultaneously.

Real-time utility is the primary driver for this shift. In Autonomous Vehicles or Augmented Reality (AR), a half-second delay in "understanding" a visual prompt results in failure or physical harm. By moving the model to the camera itself, the system reacts to the world as it happens, not as it is reported.
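
The cost of that half-second is easy to work out. The short calculation below shows how far a vehicle travels while the system is still waiting for an answer; the speed and the 20 ms on-device figure are assumed values for illustration, not benchmarks.

```python
def distance_during_delay(speed_kmh: float, delay_s: float) -> float:
    """Metres travelled at a constant speed while waiting delay_s seconds."""
    metres_per_second = speed_kmh / 3.6
    return metres_per_second * delay_s


SPEED_KMH = 100.0       # assumed highway speed
CLOUD_DELAY_S = 0.5     # the half-second delay mentioned above
EDGE_DELAY_S = 0.02     # hypothetical on-device inference time

cloud_m = distance_during_delay(SPEED_KMH, CLOUD_DELAY_S)
edge_m = distance_during_delay(SPEED_KMH, EDGE_DELAY_S)

print(f"blind distance with 0.5 s delay:  {cloud_m:.1f} m")   # 13.9 m
print(f"blind distance with 0.02 s delay: {edge_m:.2f} m")    # 0.56 m
```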

The Fragmented Network

Despite the push for local intelligence, these devices do not exist in a vacuum. 5G networks act as a skeletal support, providing the intermittent high-speed bursts needed to update the models that the devices carry.

Voice Control: Speech recognition happens at the lamp or the lathe, not in a server rack three states away.

Maintenance: Sensors on heavy machinery predict their own breakdowns by spotting vibration patterns in the raw noise of the gears.

Privacy: Data stays within the physical casing of the device, reducing the surface area for interception during transit.
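
The maintenance and privacy points share one pattern: analyze the raw signal on the device and send out only the conclusion. Below is a minimal sketch of that pattern. It uses a simple statistical threshold on vibration amplitude as a stand-in; a real predictive-maintenance system would more likely rely on spectral features or a trained model. The class name, window sizes, and threshold are all hypothetical.

```python
import math
from collections import deque


class VibrationMonitor:
    """Flags sample windows whose RMS amplitude is far above a running baseline."""

    def __init__(self, baseline_windows: int = 20, threshold: float = 3.0):
        self.history = deque(maxlen=baseline_windows)  # recent normal RMS levels
        self.threshold = threshold                     # std deviations above mean

    @staticmethod
    def rms(samples):
        return math.sqrt(sum(s * s for s in samples) / len(samples))

    def process(self, samples):
        """Return an alert dict for an anomalous window, otherwise None."""
        level = self.rms(samples)
        alert = None
        if len(self.history) >= 5:  # wait for a minimal baseline first
            mean = sum(self.history) / len(self.history)
            var = sum((h - mean) ** 2 for h in self.history) / len(self.history)
            # Floor the spread so a perfectly flat baseline cannot trigger alerts.
            spread = max(math.sqrt(var), 1e-9)
            if level > mean + self.threshold * spread:
                alert = {"rms": level, "baseline": mean}
        if alert is None:
            self.history.append(level)  # only normal windows update the baseline
        return alert


monitor = VibrationMonitor()
normal_window = [0.1, -0.1, 0.1, -0.1]
quiet = [monitor.process(normal_window) for _ in range(10)]  # nothing to report
spike = monitor.process([2.0, -2.0, 2.0, -2.0])              # anomalous window
```

Only the small `alert` dictionary would ever need to leave the device; the raw samples stay local and are discarded once they have been processed.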

Background: The End of the Simple Sensor

For a decade, the Internet of Things (IoT) was a system of passive observers. Sensors gathered temperatures, movements, and sounds, then blindly pushed that data "up" to the cloud for someone else to make sense of it. This created a bottleneck. As the number of devices grew, the networks became choked with "noise." Edge AI is the response to this congestion. It is an admission that the center cannot hold every bit of information. By turning the Sensor into a Processor, the industry is attempting to solve the bandwidth crisis by making the "things" smart enough to know what data is worth keeping and what is merely static.