Anthropic has doubled the five-hour rate limits for Claude Code across several subscription tiers. The move follows Anthropic's acquisition of all compute capacity at SpaceX's Colossus 1 data center, a deal that provides access to over 300 megawatts of power and an estimated 220,000 NVIDIA GPUs, including H100, H200, and GB200 accelerators. The added capacity is expected to support heavier usage, particularly by developers working with Claude's more advanced models.

At the core of this development is substantial new compute infrastructure, which directly enables Anthropic to relax earlier usage restrictions on its AI coding assistant.

The increased limits apply to Pro, Max, Team, and seat-based Enterprise plans. They are a direct consequence of the newly secured SpaceX compute capacity, which Anthropic stated has been integrated within the month. The agreement with SpaceX is a major step in Anthropic's ongoing strategy to scale its AI infrastructure, following earlier large-scale compute deals involving Microsoft Azure, Amazon Web Services (AWS), and Google Cloud. Those prior commitments collectively represent tens of billions of dollars in planned spending on AI infrastructure.

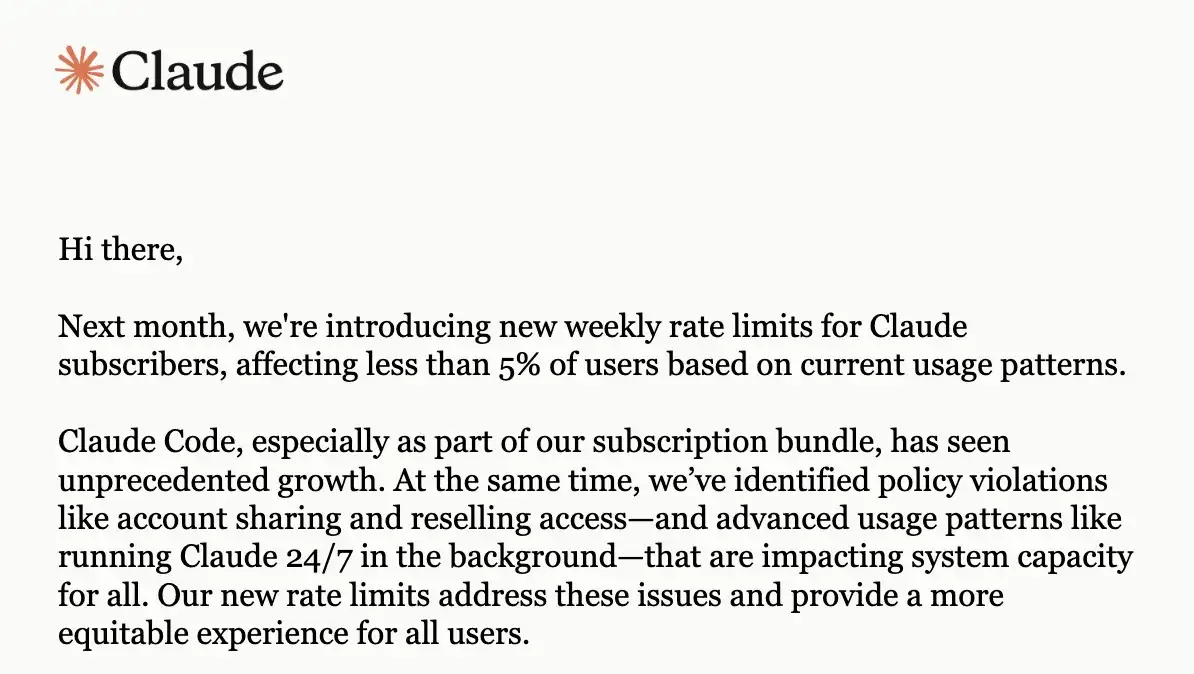

Previous limitations on Claude Code, including a five-hour reset window and weekly quotas for models such as Claude Opus 4, had spurred discussion among developers. Some noted that rapidly growing usage of Claude Code was straining infrastructure, prompting calls for more robust solutions or, in some cases, a move toward self-hosting. The introduction of unified usage limits across all access points (whether directly via Claude.ai or through platforms like AWS Bedrock, Google Vertex AI, or Microsoft Foundry) had also been a consideration for users tracking their token consumption and costs.

The expanded rate limits are positioned as a response to growing demand, made possible by the capacity gained through the SpaceX partnership. The aim is to accommodate heavier usage within subscription plans, so that dedicated users are less likely to exceed their quotas and fall back on the standard pay-as-you-go API model.

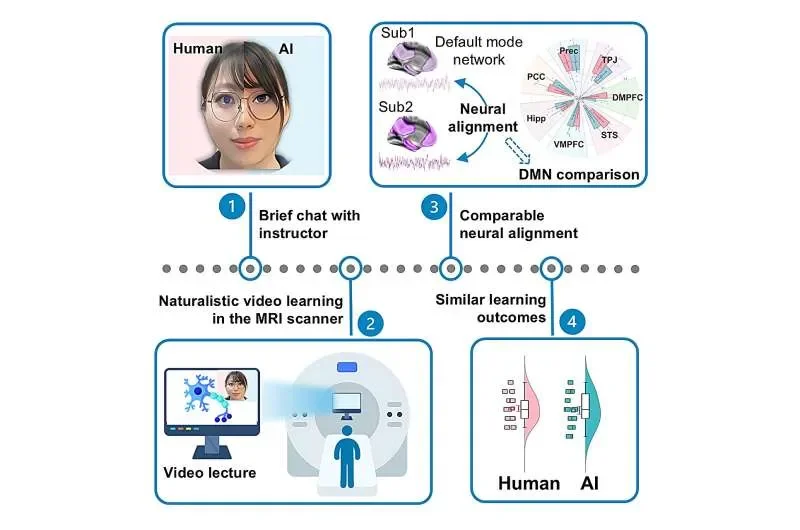
