New developments in large language models (LLMs) are pushing the boundaries of capability, with some systems now incorporating 'natively multimodal' features and boasting significantly expanded context windows. However, this rapid advancement is accompanied by ongoing efforts to address foundational concerns like factual accuracy, computational costs, and security vulnerabilities.

Gemini and Llama 4 Lead Multimodal Push

Google's 'Gemini' family spans several tiers: 'Gemini Ultra' is positioned for complex tasks, while 'Gemini Nano' targets on-device applications. Access for developers and enterprises began in December 2023. Meta's 'Llama 4' models, including 'Scout' and 'Maverick,' launched in April 2025 and introduce a 'mixture of experts' (MoE) architecture, in which only a subset of the model's parameters is activated for each token. They are noted for their multimodal capabilities and very long context support, with 'Llama 4 Scout' showing strong performance across coding, reasoning, and image benchmarks. Both families are available via their respective APIs, and some models, such as 'Llama 4 Scout,' are also accessible on platforms like Hugging Face.

Addressing LLM Weaknesses

Recent research targets several known LLM limitations. The 'HalluHunter' framework, for instance, uses knowledge graphs to expose factual errors in at least nine LLMs. Defenses against 'prompt extraction attacks,' in which an attacker tries to recover a model's hidden prompt, are also being developed, as seen with the 'ProxyPrompt' system. On the efficiency side, 'Carbon-Taxed Transformers' proposes a compression pipeline that is evaluated on various coding and text datasets, and 'FlashRT' aims to optimize 'red-teaming' for long-context models.

Evolving Model Architectures and Costs

The LLM landscape is marked by continuous updates and diverse architectures. The 'Llama 4' models are Meta's first open-weight, natively multimodal offerings built on MoE, while other models are optimized for specific uses such as large-scale analysis or enterprise applications.
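To make the MoE idea concrete, here is a minimal, generic sketch of top-k expert routing in plain Python. It is not Meta's implementation: the function names (`route_top_k`, `moe_layer`), the toy experts, and the hand-written router scores are all assumptions made for illustration. In a real model the router scores would come from a learned layer applied to each token.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts (hypothetical helper).

    Returns (expert_index, weight) pairs; the weights are a softmax over
    the selected scores only, so they sum to 1.
    """
    ranked = sorted(range(len(router_scores)),
                    key=lambda i: router_scores[i],
                    reverse=True)
    chosen = ranked[:k]
    weights = softmax([router_scores[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_layer(x, experts, router_scores, k=2):
    """Combine the outputs of only the k selected experts.

    Experts that are not selected are never called, which is where the
    compute saving of a sparse MoE layer comes from.
    """
    return sum(weight * experts[index](x)
               for index, weight in route_top_k(router_scores, k))


# Toy experts: simple functions standing in for feed-forward sub-networks.
toy_experts = [
    lambda x: 2 * x,
    lambda x: x + 1,
    lambda x: -x,
    lambda x: x * x,
]

# Illustrative scores only; experts 1 and 3 tie for the top two slots.
toy_scores = [0.1, 2.0, -1.0, 2.0]

result = moe_layer(3.0, toy_experts, toy_scores, k=2)
print(result)
```

With these toy values only experts 1 and 3 run, each with weight 0.5, so the layer mixes `3 + 1` and `3 * 3`. The other two experts contribute no computation for this input.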

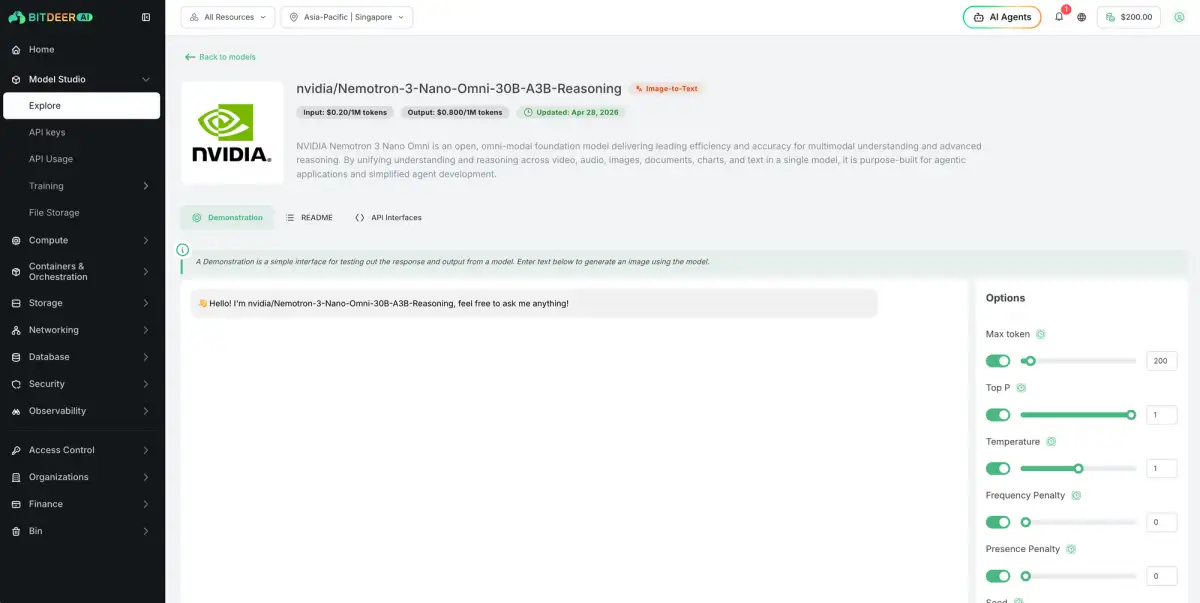

Token costs for API access continue to fluctuate: model families such as Claude and Llama are priced differently for input and output tokens, and those prices are adjusted frequently as models are updated. For users who need to analyze extensive datasets or lengthy documents, models with larger context windows, such as 'Gemini 2.5,' are particularly relevant. For everyday use, simplified access through LLM-based products such as ChatGPT or Copilot is also common.
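Because input and output tokens are typically billed at different rates, the cost of a request is a simple weighted sum. The sketch below shows the arithmetic; the `TokenPricing` class, the `estimate_cost` function, and the prices used are hypothetical placeholders, not quotes from any provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    """Hypothetical per-million-token prices in US dollars."""
    input_per_million: float
    output_per_million: float


def estimate_cost(pricing, input_tokens, output_tokens):
    """Return the estimated cost in dollars for one request."""
    total = (input_tokens * pricing.input_per_million
             + output_tokens * pricing.output_per_million)
    return total / 1_000_000


# Placeholder prices chosen for easy arithmetic, not real rates.
example_pricing = TokenPricing(input_per_million=3.0, output_per_million=15.0)

# A long-document request: large input, short summary as output.
cost = estimate_cost(example_pricing, input_tokens=200_000, output_tokens=2_000)
print(f"${cost:.2f}")  # prints $0.63
```

In this example the input side accounts for $0.60 of the $0.63 total, which illustrates why input pricing tends to dominate for long-document workloads even when output tokens cost more per token.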

Knowledge Cut-offs and Model Versions

The 'knowledge cut-off date' of an LLM, which indicates how recent the information in its training data is, is tracked across model families including GPT, Claude, Gemini, and Llama. For instance, OpenAI released numerous preview and updated versions of its GPT models throughout 2024, with some versions having knowledge cut-off dates noted as late as October 2024. Similarly, Claude models distinguish between a 'reliable knowledge cut-off' and a 'training data cut-off,' with specific dates varying across the 'Haiku,' 'Sonnet,' and 'Opus' lines and some extending into mid-2024.

This report synthesizes information from multiple sources published between October 2025 and May 2, 2026, reflecting the dynamic nature of large language model development and analysis.