NVIDIA has introduced Nemotron 3 Nano Omni, a new open multimodal AI model that consolidates vision, audio, and language processing into a single architecture. According to the company, this unification lets AI agents work faster and reason better across video, audio, images, and text.

Rather than chaining separate specialized models for each data type, Nemotron 3 Nano Omni performs multimodal reasoning within one efficient model. The goal is to avoid the contextual and temporal inefficiencies of pipelines that hand data from one specialized model to the next.
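NVIDIA describes the model's design as a unified encoder-projector-decoder. The toy Python sketch below illustrates the general idea behind that pattern, not NVIDIA's implementation. Each modality gets its own encoder, a projector maps the encoder output into the decoder's embedding space, and the projected media tokens are concatenated with text embeddings so that a single decoder can attend over all of them. Everything here is a hypothetical assumption: the `ModalityBranch` and `build_decoder_input` names, the tiny dimensions, the random weights standing in for trained ones, and placing media tokens before text. The decoder itself is omitted.

```python
import random
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]


def random_matrix(rows: int, cols: int, rng: random.Random) -> Matrix:
    """Small random weights standing in for learned parameters (toy only)."""
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


def linear(x: Vector, w: Matrix) -> Vector:
    """Matrix-vector product; w has shape (out_dim, in_dim)."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]


class ModalityBranch:
    """Hypothetical encoder + projector for one modality.

    The encoder maps raw per-chunk features (image patches, audio frames)
    to enc_dim; the projector maps enc_dim into the decoder's model_dim so
    the results can sit in the same sequence as text embeddings.
    """

    def __init__(self, in_dim: int, enc_dim: int, model_dim: int, rng: random.Random):
        self.encoder = random_matrix(enc_dim, in_dim, rng)
        self.projector = random_matrix(model_dim, enc_dim, rng)

    def __call__(self, chunks: List[Vector]) -> List[Vector]:
        # encode each chunk, then project it into the shared embedding space
        return [linear(linear(c, self.encoder), self.projector) for c in chunks]


def build_decoder_input(
    text_ids: List[int],
    embedding: Matrix,
    media: Dict[str, List[Vector]],
    branches: Dict[str, ModalityBranch],
) -> List[Vector]:
    """Concatenate projected media tokens, then text embeddings, into one sequence."""
    sequence: List[Vector] = []
    for name, chunks in media.items():
        sequence.extend(branches[name](chunks))
    sequence.extend(embedding[i] for i in text_ids)
    return sequence


if __name__ == "__main__":
    rng = random.Random(0)
    MODEL_DIM = 8  # toy decoder width; real models are far wider
    branches = {
        "image": ModalityBranch(in_dim=12, enc_dim=6, model_dim=MODEL_DIM, rng=rng),
        "audio": ModalityBranch(in_dim=4, enc_dim=5, model_dim=MODEL_DIM, rng=rng),
    }
    embedding = random_matrix(100, MODEL_DIM, rng)  # 100-token toy vocabulary
    media = {
        "image": [[rng.random() for _ in range(12)] for _ in range(3)],  # 3 patches
        "audio": [[rng.random() for _ in range(4)] for _ in range(5)],   # 5 frames
    }
    seq = build_decoder_input([1, 2, 3, 4], embedding, media, branches)
    print(len(seq), {len(v) for v in seq})
```

Running it prints `12 {8}`: 3 image tokens, 5 audio tokens, and 4 text tokens, all of width 8. Because every modality ends up as same-width vectors in one sequence, nothing has to be handed between separate models in this sketch.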

NVIDIA positions the model as a component of broader agentic systems, where it can augment proprietary cloud models or larger Nemotron variants such as Nemotron 3 Super and Ultra. Target tasks include interacting with computer interfaces, document analysis, and audio-visual comprehension. NVIDIA claims the model sets a new benchmark for efficiency and accuracy among open multimodal models and tops multiple leaderboards for complex document intelligence and video and audio understanding.

Open Weights and Customization

The Nemotron family, including the new Omni variant, is released with 'open weights, training data, and recipes'. The approach is meant to give businesses and developers flexibility and control over deployment. This matters most for organizations with strict data sovereignty or regulatory requirements, which can run the models inside environments that meet their data localization and compliance obligations.

Technical Details and Performance

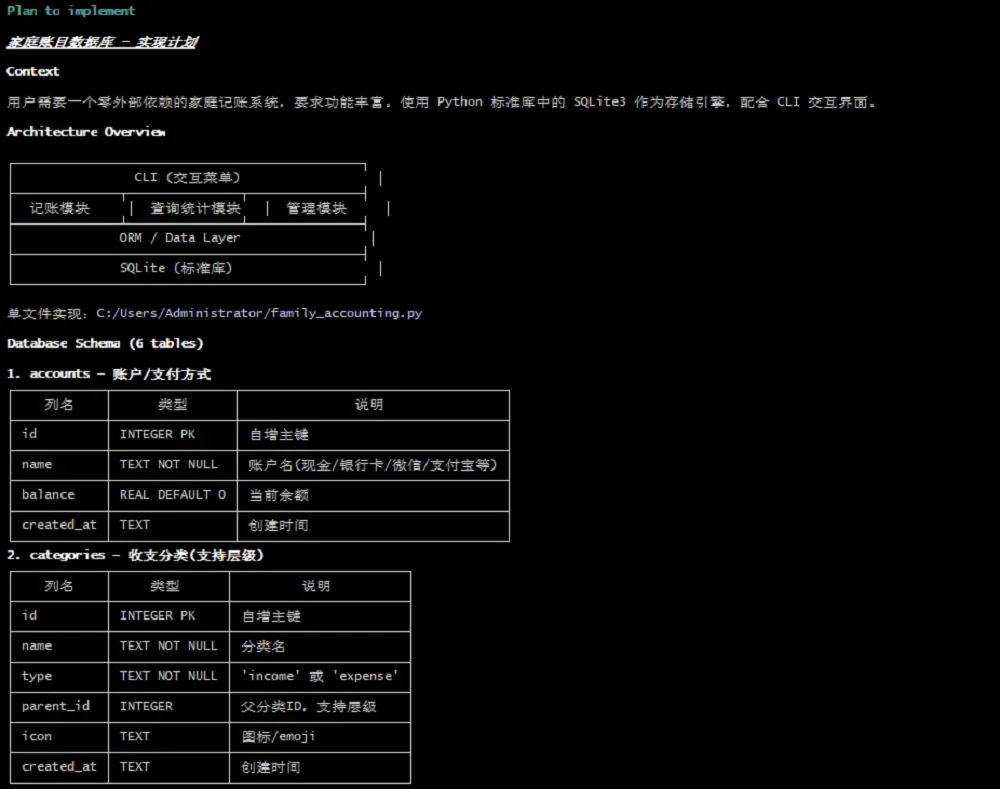

Nemotron 3 Nano Omni has 30 billion parameters in total, but with an emphasis on efficiency, its architecture reportedly activates only about 3 billion of them per token. It uses a unified encoder-projector-decoder design. NVIDIA has benchmarked the model against leading proprietary and open models and reports that it outperforms competing models in several multimodal reasoning domains. Checkpoints are available in BF16, FP8, and NVFP4 formats through platforms such as Hugging Face.
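As a rough illustration of why the active-parameter count and the checkpoint formats matter, the sketch below estimates weight storage for a 30-billion-parameter model in each listed format, plus the share of parameters used per token. It relies on assumptions, not published specifications: the article's rounded figures, weights only (no KV cache, activations, or per-tensor scales), decimal gigabytes, and NVFP4 treated as 4-bit values plus one 8-bit scale shared by each block of 16 values.

```python
"""Back-of-the-envelope weight-memory estimate for a ~30B-parameter model.

Assumptions (not from NVIDIA): rounded parameter counts from the article,
decimal gigabytes, weights only, and NVFP4 modelled as 4-bit values plus
one 8-bit scale per 16-value block (per-tensor scales ignored).
"""

TOTAL_PARAMS = 30e9   # "30 billion parameter" figure, rounded
ACTIVE_PARAMS = 3e9   # "around 3 billion active parameters", rounded

# Effective bits stored per weight for each checkpoint format.
BITS_PER_WEIGHT = {
    "BF16": 16.0,
    "FP8": 8.0,
    "NVFP4": 4.0 + 8.0 / 16,  # 4-bit value + shared 8-bit scale per 16 values
}


def weight_gigabytes(params: float, bits: float) -> float:
    """Storage for `params` weights at `bits` each, in decimal GB."""
    return params * bits / 8 / 1e9


def active_fraction(total: float, active: float) -> float:
    """Share of parameters that take part in computing each token."""
    return active / total


if __name__ == "__main__":
    for fmt, bits in BITS_PER_WEIGHT.items():
        print(f"{fmt:>5}: ~{weight_gigabytes(TOTAL_PARAMS, bits):.1f} GB of weights")
    print(f"active share per token: {active_fraction(TOTAL_PARAMS, ACTIVE_PARAMS):.0%}")
```

Under these assumptions it prints about 60.0, 30.0, and 16.9 GB and a 10% active share. A sparse model generally still has to hold all of its weights in memory; the small active share mainly reduces the compute spent on each token.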

Availability and Partnerships

The model is also available on cloud inference platforms including FriendliAI, Bitdeer AI Cloud, GMI Cloud, and Crusoe Managed Inference, supporting production-scale deployments. NVIDIA is additionally positioning Nemotron 3 Nano Omni as a key element of its edge AI strategy, which favors smaller, efficient open models running on NVIDIA hardware.

Background and Context

NVIDIA presents Nemotron 3 Nano Omni as an evolution of its earlier Nemotron Nano V2 VL model, with significantly improved visual capabilities and entirely new audio and combined video-and-audio processing. The release also reflects NVIDIA's continued push to supply not only the hardware infrastructure for AI but also the foundation models that run on it. The broader Nemotron 3 family, which includes models built for more demanding workloads, has seen substantial adoption, with more than 50 million downloads reported for the series over the past year.