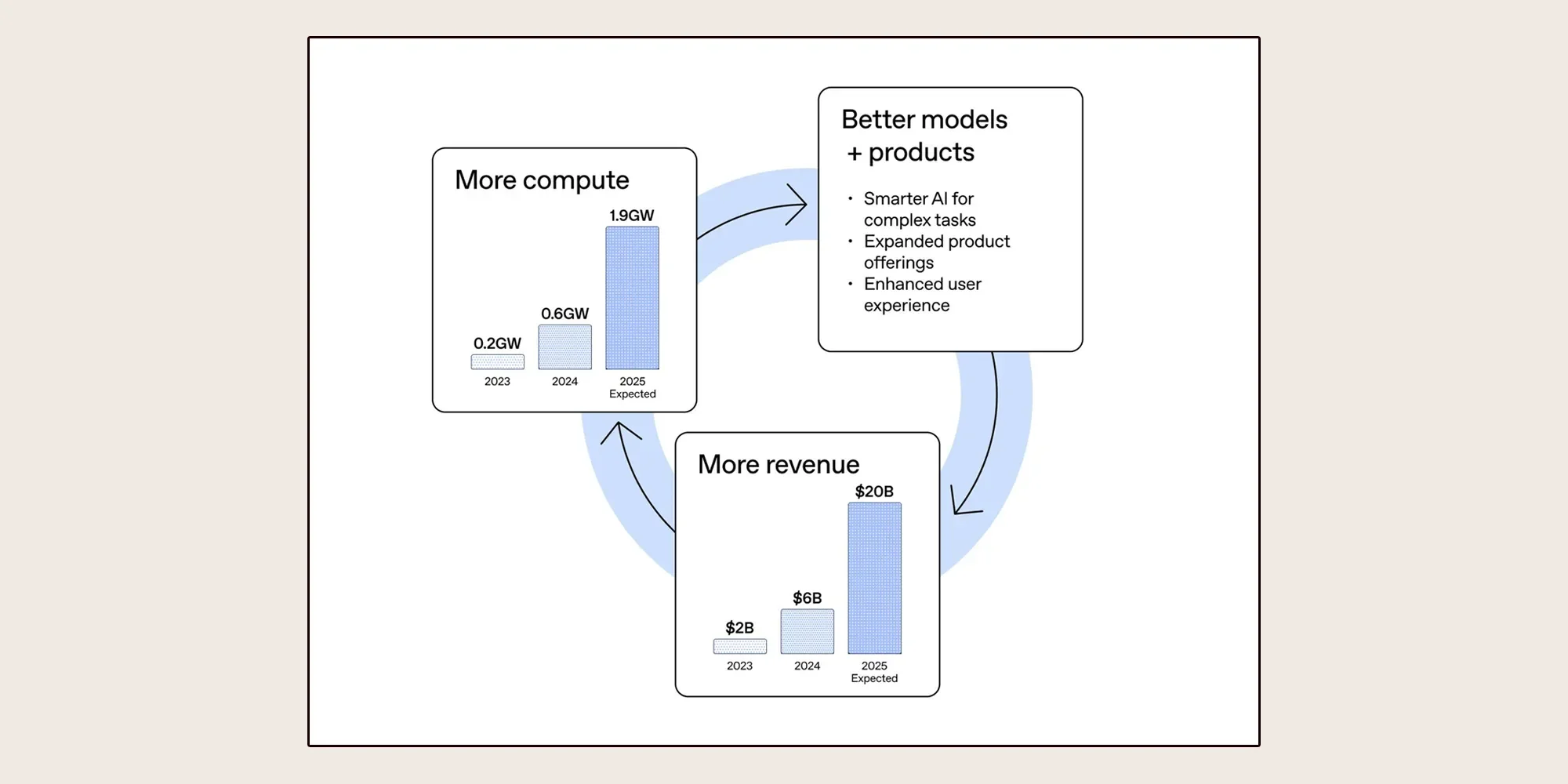

OpenAI's access to computing power, the bedrock of its advanced artificial intelligence development, has reportedly become a critical constraint, forcing the company to shelve certain initiatives. The shortage is compelling OpenAI to make deliberate strategic choices and concentrate on its primary, revenue-generating AI products. While the company asserts that its revenue growth parallels its infrastructure expansion, the CFO’s statements indicate a constant struggle to keep pace with demand for processing capacity.

This scarcity has led to tangible shifts in project prioritization. The video app Sora, once a promising venture, has been discontinued. The decision fits a broader strategy of concentrating resources on core AI products deemed more likely to yield financial returns, particularly in the lead-up to a potential initial public offering. Similar pressures appear to be affecting other major players in the AI landscape. Anthropic, for instance, has implemented stricter usage limits on its Claude model during peak usage times, a sign that demand is exceeding available computational resources across the industry.

OpenAI’s financial chief, Sarah Friar, has been vocal about these challenges, frequently referencing the company's continuous need for greater compute. She has articulated a vision in which the scaling of compute is intrinsically linked to profitability. Unlike established technology giants with diverse revenue streams, OpenAI has no comparable fallback should its significant AI infrastructure investments fail to pay off as expected. This underscores the precarious position of AI companies that operate on the cutting edge without a broad, pre-existing financial base to absorb potential setbacks.

The company’s operational strategy appears to involve a dual approach to compute acquisition and utilization. OpenAI is actively pursuing diverse data center partnerships globally, including significant agreements in Australia and South Korea, and exploring potential sites in India and Japan. This diversification aims to secure a more robust and reliable supply of computing power. Simultaneously, OpenAI is adopting a tiered approach to resource deployment: employing the latest, highest-performance hardware for the most demanding tasks of training frontier models, while leveraging lower-cost infrastructure for high-volume, efficiency-focused workloads.

The financial implications of this compute-driven model are significant. OpenAI has reported hitting substantial revenue milestones, including achieving its first billion-dollar month and tripling revenue over a single year. Friar has stressed that this revenue growth is directly proportional to the company's AI infrastructure capacity. To meet the expected surge in demand, driven by advancements like GPT-5, the company anticipates colossal expenditures, potentially reaching trillions of dollars, primarily for data center development.

Background: The Compute Imperative in AI's Ascent

The relentless demand for computing power is a defining characteristic of the current era of artificial intelligence development. As AI models grow in complexity and scale, so too does their appetite for the specialized hardware—primarily GPUs—required for training and deployment. Companies like OpenAI, at the forefront of this technological wave, face a constant battle to secure sufficient computational resources. This struggle is not merely about scaling; it is a fundamental bottleneck that dictates the pace of innovation and the ability to capitalize on nascent market opportunities.

The race for compute power has become a geopolitical and economic focal point. Nations and corporations are investing heavily in building out their AI infrastructure, recognizing its critical role in future economic competitiveness. For OpenAI, which operates outside the traditional corporate structure with its own massive hardware investments, the challenge is multifaceted. It involves not only securing access to cutting-edge chips and data center space but also optimizing the use of these resources to drive profitability and maintain a competitive edge in a rapidly evolving market. The company's recent strategic shifts, including discontinuing certain projects and exploring new revenue models like advertising on ChatGPT, reflect this intense pressure to balance ambitious research and development with the practical realities of compute limitations and the imperative to demonstrate financial viability.
