Tiny Model, Big Claims

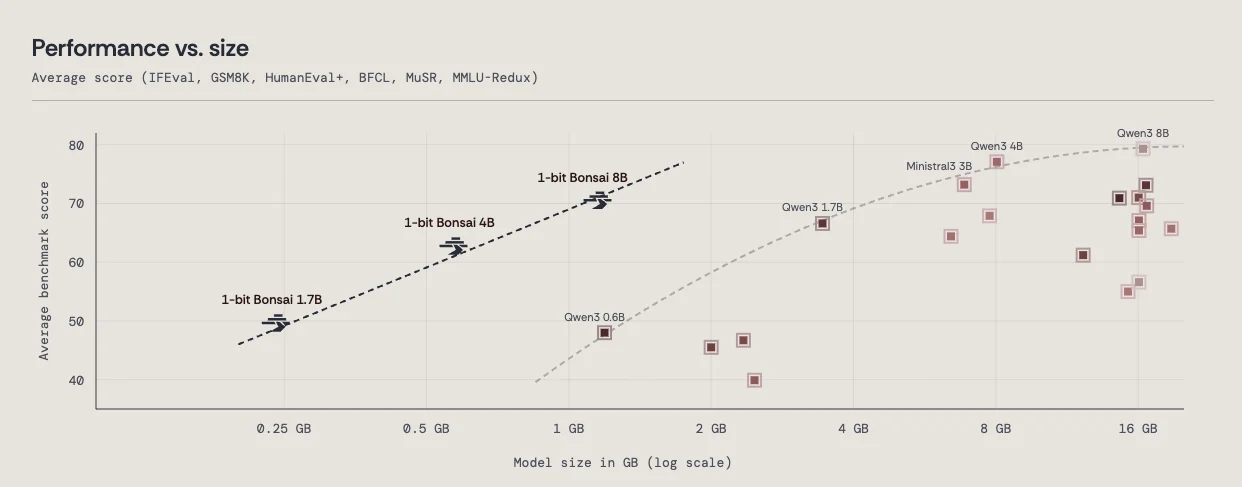

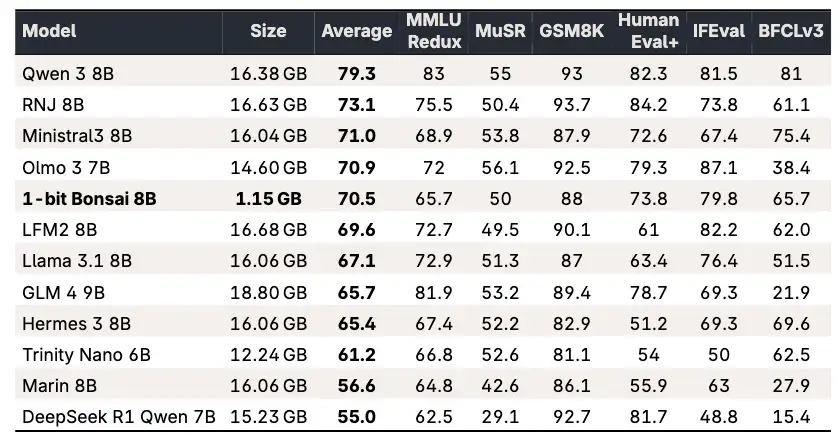

PrismML, an AI startup that grew out of research at Caltech, has released Bonsai 8B, which it describes as the "first commercially viable 1-bit large language model." Its distinguishing feature is a far smaller memory footprint: the model has 8.2 billion parameters but occupies only 1.15 gigabytes. A typical 8-billion-parameter model stored at 16-bit precision needs about 16 gigabytes (two bytes per parameter), which makes it unsuitable for many consumer devices.
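The size figures above follow from simple arithmetic on bits per parameter. The sketch below is illustrative only: the function names are hypothetical, and decimal gigabytes (1 GB = 10^9 bytes) are assumed. It also shows that the reported 1.15 GB works out to slightly more than one bit per parameter; the article does not say where the remainder goes.

```python
def weights_size_gb(num_params, bits_per_weight):
    """Storage for the weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


def effective_bits_per_weight(size_gb, num_params):
    """Average number of bits stored per parameter for a given file size."""
    return size_gb * 1e9 * 8 / num_params


PARAMS = 8.2e9  # parameter count reported for Bonsai 8B

fp16_gb = weights_size_gb(PARAMS, 16)             # 16-bit baseline
one_bit_gb = weights_size_gb(PARAMS, 1)           # idealized pure 1-bit storage
eff_bits = effective_bits_per_weight(1.15, PARAMS)  # implied by the reported 1.15 GB

print(f"16-bit: {fp16_gb:.2f} GB, pure 1-bit: {one_bit_gb:.3f} GB")
print(f"reported 1.15 GB implies about {eff_bits:.2f} bits per parameter")
```

The gap between the idealized 1.025 GB and the reported 1.15 GB would be consistent with some per-group metadata or a few higher-precision tensors, but that is an assumption rather than something the source states.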

The company asserts that Bonsai 8B performs competitively on various benchmarks against leading 16-bit 8B models such as Llama 3 8B. It also claims an 8x speed improvement and a 4-5x reduction in energy consumption on edge hardware. This efficiency, PrismML suggests, lets sophisticated AI applications run directly on devices such as smartphones and laptops, reducing their dependence on cloud infrastructure.


Shrinking the Digital Footprint

PrismML's approach centers on a 1-bit model, in which each parameter is stored as a single bit that encodes one of two values, such as {-1, +1}. (A three-valued set such as {-1, 0, +1} is a ternary scheme and needs about 1.58 bits per parameter.) This reduction in data representation is the primary driver of the much smaller file size. The company has also released smaller variants, Bonsai 4B (0.5GB) and Bonsai 1.7B (0.24GB), which reportedly run on devices like an M4 Pro Mac and an iPhone, respectively. Bonsai 8B itself is reported to run at approximately 44 tokens per second on an iPhone 17 Pro Max, a speed deemed adequate for real-time interaction.
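To make the idea of one bit per parameter concrete, here is a minimal sketch of sign-based quantization with one shared scale per group of weights, packed eight weights to a byte. It is a generic illustration, not PrismML's published method: the function names, the group size of 4, and the choice of the mean absolute value as the scale are all assumptions made for the example.

```python
def quantize_1bit(weights, group_size=4):
    """Keep only the sign of each weight (1 bit) plus one scale per group.

    Illustrative scheme only; group_size and the mean-absolute-value scale
    are assumptions, not details taken from PrismML.
    """
    packed = bytearray((len(weights) + 7) // 8)
    scales = []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        scales.append(sum(abs(w) for w in group) / len(group))
        for offset, w in enumerate(group):
            if w >= 0:  # bit 1 encodes +1, bit 0 encodes -1
                i = start + offset
                packed[i // 8] |= 1 << (i % 8)
    return bytes(packed), scales


def dequantize_1bit(packed, scales, n, group_size=4):
    """Rebuild approximate weights as sign * group scale."""
    out = []
    for i in range(n):
        sign = 1.0 if (packed[i // 8] >> (i % 8)) & 1 else -1.0
        out.append(sign * scales[i // group_size])
    return out


weights = [0.5, -0.25, 0.75, -0.5, -1.0, 1.0, 0.0, -2.0]
packed, scales = quantize_1bit(weights)
restored = dequantize_1bit(packed, scales, len(weights))
print(len(packed), scales, restored)
```

Eight weights fit in a single byte here, against 16 bytes at 16-bit precision; the per-group scales are the only overhead on top of the sign bits.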

"PrismML underlines that these gains originate predominantly from the reduction in memory usage, not yet from the complete exploitation of the 1-bit structure during inference."

While PrismML emphasizes the technical innovation, the efficiency gains reported so far come mainly from moving fewer bytes of weights through memory. Inference that fully exploits the 1-bit structure may deliver further gains that have yet to be realized.
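Why memory reduction alone can speed up generation: when a model produces one token at a time, each token typically requires reading all of the weights from memory, so throughput is capped at roughly memory bandwidth divided by model size. The sketch below is a back-of-the-envelope estimate under that assumption; the 100 GB/s bandwidth is a hypothetical figure, not a measurement of any particular device, and the function name is illustrative.

```python
def max_tokens_per_second(weights_gb, bandwidth_gb_per_s):
    """Upper bound on decode speed if every token reads all weights once."""
    return bandwidth_gb_per_s / weights_gb


BANDWIDTH_GB_PER_S = 100.0  # hypothetical memory bandwidth, for illustration only

fp16_ceiling = max_tokens_per_second(16.0, BANDWIDTH_GB_PER_S)    # ~16 GB baseline
bonsai_ceiling = max_tokens_per_second(1.15, BANDWIDTH_GB_PER_S)  # reported 1.15 GB
ratio = bonsai_ceiling / fp16_ceiling  # independent of the bandwidth chosen

print(f"16-bit ceiling: {fp16_ceiling:.2f} tokens/s")
print(f"1.15 GB ceiling: {bonsai_ceiling:.1f} tokens/s")
print(f"ratio: {ratio:.1f}x")
```

Under this simplified model the size reduction allows a speedup of up to roughly 14x, so the claimed 8x improvement is plausible from reduced memory traffic alone, without any kernels specialized for 1-bit arithmetic.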


Emerging from the Shadows

The launch of Bonsai comes at a time of escalating AI investment, notably OpenAI's recent substantial funding round. PrismML, however, positions itself as a contender with a different focus: decentralized, efficient AI. The Bonsai models are available under the open-source Apache 2.0 license. The company, whose backers include Khosla Ventures and Google, is emerging from stealth to challenge the prevailing trend toward ever-larger AI models that require extensive data center resources.

"As AI models grow larger and more computationally intensive, deploying advanced intelligence has increasingly required massive datacenter infrastructure."

This statement from PrismML frames its work as a direct response to the rising demands and costs of current approaches to AI deployment. The research stems from work at Caltech led by Babak Hassibi, who also serves as PrismML's CEO and co-founder.