Nvidia's upcoming Rubin GPU architecture, expected to power the next wave of AI infrastructure, may face delays and lower initial deployment volumes because of supply chain constraints around High Bandwidth Memory 4 (HBM4). Reports suggest that mass production targets for the Rubin platform have been revised sharply downward, with some forecasts cutting initial shipment estimates for Vera Rubin AI server racks from 12,000-14,000 units to approximately 6,000, a reduction of half or more. Industry watchers frame the situation as a temporary bottleneck that slows the immediate rollout of the hardware rather than a fundamental derailment of Nvidia's AI ambitions.

The primary obstacle is the limited availability of HBM4 memory and the difficulty of qualifying it. HBM4 is a critical component for the Rubin GPU's performance gains. Nvidia has reportedly secured its advanced packaging capacity, which leaves the memory supply chain as the main weak point. Rubin is not the only product line facing reduced volumes: industry analysis indicates that shipments of existing Hopper GPUs destined for markets like China might also fall, in that case because of geopolitical considerations rather than memory supply. Nevertheless, the company is expected to maintain revenue momentum, with substantial shipments of its current Blackwell architecture GPUs set to fulfill existing demand and support overall growth.


Shifting Projections and Market Impact

The lower Rubin production figures are changing market expectations. Earlier forecasts saw Hopper accelerators making up a larger percentage of Nvidia's shipment mix, a figure now revised downwards. Industry observers anticipate that Blackwell products, such as the GB300 and B300, will likely fill the gap in shipments, ensuring a continued supply of high-performance AI hardware. The production target for Rubin GPUs has been cut from 2 million to 1.5 million units, a 25% reduction attributed to HBM4 qualification issues, and according to some analyses this could have a minor short-term impact on Nvidia's market share.
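
To put the reported revisions on a common footing, the short Python sketch below computes the relative size of each cut. The function name `relative_cut` is a hypothetical helper introduced here for illustration. The inputs are the figures quoted in this article, and the rack and GPU numbers may come from different forecasts, so they are not necessarily comparable with each other.

```python
def relative_cut(before: float, after: float) -> float:
    """Return the fractional reduction when a forecast drops from `before` to `after`."""
    if before <= 0:
        raise ValueError("the original forecast must be positive")
    return (before - after) / before


# Vera Rubin rack forecasts quoted in the article: 12,000-14,000 down to about 6,000.
rack_cut_low = relative_cut(12_000, 6_000)
rack_cut_high = relative_cut(14_000, 6_000)

# Rubin GPU production target quoted in the article: 2 million down to 1.5 million.
gpu_cut = relative_cut(2_000_000, 1_500_000)

print(f"Rack forecast cut: {rack_cut_low:.1%} to {rack_cut_high:.1%}")
print(f"GPU target cut:    {gpu_cut:.1%}")
```

On these inputs the rack forecast falls by roughly 50% to 57%, depending on which end of the original range is used, while the GPU target falls by 25%.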


Rubin's Technical Underpinnings and Ambitions

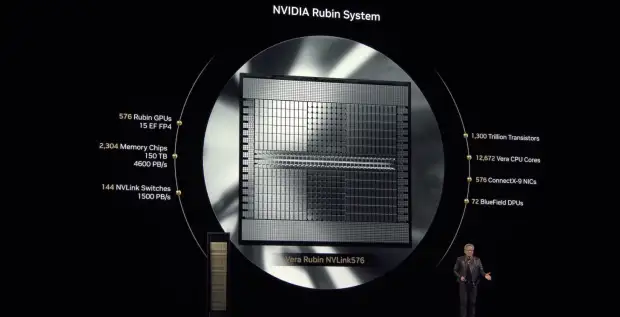

Nvidia's Rubin platform is designed as a significant leap in AI computing, built around six new chips: the Rubin GPU itself, the Vera CPU, the NVLink 6 Switch, the ConnectX-9 SuperNIC, the BlueField-4 DPU, and the Spectrum-6 Ethernet Switch. This integrated approach, described as "extreme codesign," aims to sharply reduce training times and inference costs, positioning Rubin as a key engine for the company's next phase of AI growth. The Vera Rubin platform, particularly the NVL72 configuration, consolidates 72 Rubin GPUs and 36 Vera CPUs into a single rack-scale unit and reportedly delivers up to 3.3 times the performance of the current Blackwell Ultra platform.

Background and Industry Context

Introduced at CES 2026, the Rubin platform reflects Nvidia's strategic direction towards "AI factories": specialized infrastructure designed for streamlined AI lifecycle management. The platform's emphasis on rack-scale systems and its high power requirements, upwards of 120 kW per rack, highlight the evolving demands of large-scale AI deployments. The introduction of an 800 V DC power architecture marks a departure from the prevailing 48 V standard, indicating further shifts in data center infrastructure. Reports from August 2025 suggested that Rubin was on schedule and positioned to compete with AMD's Instinct MI450 accelerators, but more recent information points to supply chain challenges affecting the immediate production schedule. The Rubin Ultra roadmap, targeting 2027, indicates Nvidia's continued commitment to pushing the boundaries of AI hardware.
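
One way to see why a higher distribution voltage matters at this power level is to compare the current each voltage implies. The Python sketch below is a simplified illustration and not a description of Nvidia's actual power design. It assumes the full 120 kW is delivered over a single DC bus and ignores conversion stages and efficiency losses. The helper `bus_current` and the constant names are hypothetical.

```python
RACK_POWER_W = 120_000   # article figure: "upwards of 120 kW per rack", used as a floor
LEGACY_BUS_V = 48        # prevailing 48 V distribution
NEW_BUS_V = 800          # 800 V DC architecture mentioned in the article


def bus_current(power_w: float, voltage_v: float) -> float:
    """Current in amperes needed to deliver `power_w` at `voltage_v` (I = P / V)."""
    if voltage_v <= 0:
        raise ValueError("voltage must be positive")
    return power_w / voltage_v


legacy_amps = bus_current(RACK_POWER_W, LEGACY_BUS_V)
new_amps = bus_current(RACK_POWER_W, NEW_BUS_V)

# Resistive loss in a conductor is I^2 * R, so for the same conductor
# the loss scales with the square of the current ratio.
loss_ratio = (legacy_amps / new_amps) ** 2

print(f"48 V bus:  {legacy_amps:.0f} A")
print(f"800 V bus: {new_amps:.0f} A")
print(f"Resistive loss ratio for the same conductor: {loss_ratio:.0f}x")
```

Under these assumptions, a 120 kW rack would draw about 2,500 A at 48 V but only 150 A at 800 V, which is why higher-voltage distribution allows thinner conductors and lower resistive losses.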
