Stakes of AI Use in National Security

The United States Pentagon has placed significant pressure on AI firm Anthropic to grant unrestricted military access to its advanced artificial intelligence technology. Anthropic's leadership has stated they cannot ethically agree to these terms, citing concerns about the potential misuse of their AI, particularly regarding autonomous weapons and mass surveillance. The situation has escalated with the Pentagon reportedly threatening to label Anthropic a supply chain risk and exploring emergency federal powers to compel compliance, a move that could severely impact the company's future government contracts and reputation.

Overview of the Conflict

Anthropic, an AI company known for its Claude model, is facing a deadline from the Pentagon to allow unconditional military use of its technology. Anthropic believes that meeting this demand could lead to violations of its ethical standards, and the company's CEO, Dario Amodei, has firmly resisted it.

Pentagon's Position: The Defense Department has asserted its commitment to operating within legal frameworks. A senior Pentagon official indicated that the military's use of AI is intended to be lawful, and that the responsibility for misuse would lie with the government, not the AI provider. The Pentagon also highlighted efforts to secure alternative AI solutions should Anthropic refuse, stating that no company's software would be removed without a replacement.

Anthropic's Stance: Anthropic has clearly stated it cannot, "in good conscience," agree to the Pentagon's request for unrestricted access. The company's core concerns revolve around the unreliability of current AI for life-and-death decisions in autonomous weapons systems and the potential for mass surveillance of Americans.

Competitive Pressure: The Pentagon has noted that Elon Musk's Grok has been cleared for classified use, with OpenAI and Google nearing similar approvals. This appears to be adding competitive pressure on Anthropic to conform to the Pentagon's demands.

Potential Consequences for Anthropic: Beyond potential loss of contracts, the Pentagon has threatened to designate Anthropic as a supply chain risk. This designation is typically reserved for companies from adversarial nations and could significantly harm Anthropic's ability to engage with the U.S. government.

Legal Leverage: The Pentagon has alluded to the Defense Production Act, a Cold War-era law that can compel private companies to prioritize national defense needs. Anthropic was reportedly given a deadline of February 27, 2026, to comply or face potential action under these emergency federal powers.

Evidence of the Standoff

This account draws on statements from Anthropic and Pentagon officials, along with news reports detailing the exchange.

Anthropic CEO Dario Amodei:

"These threats do not change our position: we cannot in good conscience accede to their request." (Article 1, Article 3)

Stated that "frontier AI systems are simply not reliable enough to power fully autonomous weapons," and that "autonomous weapons cannot be relied upon to exercise the critical judgment that our highly trained, professional troops exhibit every day." (Article 4)

Expressed hope that the Pentagon would reconsider its position. (Article 4)

Pentagon Official (identified as "Michael" in Article 4):

Stated, "You have to trust your military to do the right thing." (Article 4)

Confirmed that Grok had been cleared for use in a classified setting, with OpenAI and Google nearing similar clearances. (Article 1)

Said, "No company is going to take out any software that's being used in this department until we have an alternative." (Article 4)

Described the disagreement as partially ideological, suggesting Anthropic's stance stems from a "fear of the power of AI." (Article 4)

Repeated a pledge by Defense Secretary Pete Hegseth: "We will not employ AI models that won't allow you to fight wars." (Article 4)

Ethical Boundaries and AI Deployment

At the core of the dispute is Anthropic's refusal to allow its AI systems, specifically the Claude model, to be used for purposes that contravene its ethical guidelines.

Mass Surveillance and Autonomous Weapons: Anthropic has sought explicit restrictions to prevent its technology from being employed in mass surveillance of American citizens or in autonomous military operations without human oversight.

Human Oversight: Amodei has cautioned against the complete automation of lethal decision-making, emphasizing that current AI is not sufficiently reliable for such critical judgments, which require the discernment of trained human personnel.

Pentagon's Reassurance: Pentagon officials maintain that the military operates lawfully and intends to use AI responsibly, treating it like any other technology. The argument is that the ultimate accountability for misuse rests with the users.

Legal and Regulatory Frameworks

The Pentagon's actions suggest a willingness to utilize existing legal mechanisms to ensure access to critical technologies.

Defense Production Act: Reports indicate the Pentagon has threatened to use this law, which empowers the government to compel private firms to meet defense demands, especially during national security exigencies.

Supply Chain Risk Designation: This potential consequence for Anthropic is a significant threat, as it implies a lack of trust in the company's security or reliability, potentially impacting its ability to secure future contracts not only with the Pentagon but also with other government entities.

Trump Administration's View on Regulation: Reporting on the dispute notes the Trump administration's view that stringent AI regulations could hinder technological advancement and U.S. competitiveness, a position that contrasts with Anthropic's push for ethical guardrails.

Competitive Landscape in Defense AI

The Pentagon is actively engaging with multiple AI providers, creating a dynamic where Anthropic's refusal could have broader implications.

Existing Partnerships: Anthropic is reported to be the only AI company whose model is deployed on the Pentagon's classified networks, through a partnership with Palantir. Because of this existing integration, Anthropic's withdrawal could be disruptive.

Competitor Progress: The clearance of Grok and near-clearances for OpenAI and Google suggest that the Pentagon has alternative options for advanced AI integration, potentially reducing its immediate reliance on Anthropic.

Pentagon's Strategy: The Pentagon's work on partnerships with alternative AI firms suggests a proactive effort to ensure continuity of AI capabilities regardless of Anthropic's decision.

Expert Analysis and Interpretations

Although the reporting includes few direct expert quotes, the underlying tensions point to broader debates in AI ethics and national security.

Ethical AI Development: The situation underscores the ongoing debate between AI developers focused on ethical considerations and government entities prioritizing national security capabilities.

AI in Warfare: The reliability and ethical implications of autonomous weapons systems remain a significant point of contention, with Anthropic advocating for caution and human control.

Conclusion and Implications

Anthropic's refusal to grant the U.S. military unconditional access to its AI technology presents a significant impasse. The company's adherence to its ethical principles, despite facing substantial pressure and potential repercussions from the Pentagon, highlights a critical juncture in the relationship between AI developers and national security agencies.

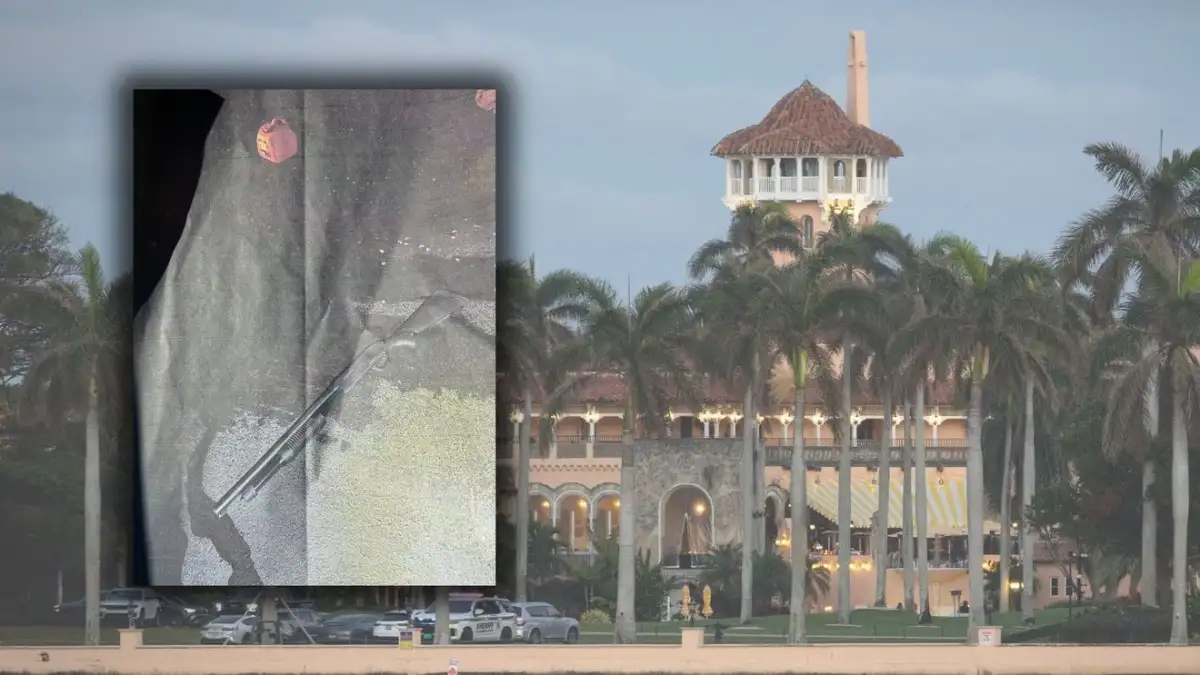

Company's Position: Anthropic maintains its stance, prioritizing ethical AI deployment over unconditional access, even under the threat of legal action or damage to its reputation.

Pentagon's Objectives: The Defense Department appears determined to integrate advanced AI into its operations, citing national security needs and asserting its right to control technology used by its personnel.

Potential Outcomes:

Anthropic could face the use of emergency federal powers to compel compliance.

The company might be designated a supply chain risk, severely impacting its future with the U.S. government.

The Pentagon may accelerate its partnerships with alternative AI providers.

Broader Implications: This standoff brings to the forefront crucial questions about the governance of powerful AI technologies, the balance between innovation and ethical safeguards in military applications, and the autonomy of private companies in shaping national security strategies.

Sources

Article 1 (The Hindu): Anthropic says won't give U.S. military unconditional AI use

Link: https://www.thehindu.com/news/international/anthropic-says-wont-give-us-military-unconditional-ai-use/article70682418.ece

Article 2 (WION): Anthropic resists Pentagon pressure, says won't give Trump admin unconditional AI use for 'mass domestic surveillance'

Link: https://www.wionews.com/world/anthropic-resists-pentagon-pressure-says-won-t-give-trump-admin-unconditional-ai-use-for-mass-domestic-surveillance-1772158909482

Article 3 (Moneycontrol): Anthropic says won't give US military unconditional AI use: 'Threats do not change our position'

Link: https://www.moneycontrol.com/world/anthropic-says-won-t-give-us-military-unconditional-ai-use-threats-do-not-change-our-position-article-13845041.html

Article 4 (CBS News): Pentagon official on Anthropic AI feud: "You have to trust your military to do the right thing"

Link: https://www.cbsnews.com/news/pentagon-anthropic-feud-ai-military-says-it-made-compromises/