Nvidia is developing a specialized chip system, the "Vera Rubin Space-1," for data centers in orbit, signaling a move to use space for advanced artificial intelligence computation. The initiative is part of a broader trend among tech giants to explore off-world computing, driven by the prospect of abundant solar energy and plentiful room to operate. The company still faces engineering challenges in realizing these plans.

While timelines for widespread deployment remain unclear, the push toward 'orbital AI' is gaining momentum. Companies such as Google, through its 'Project Suncatcher,' are investigating similar concepts. Suncatcher is a research program that aims to validate solar-powered satellite constellations in Low Earth Orbit (LEO). These satellites, equipped with Tensor Processing Units (TPUs), would be interconnected via laser links and would process 'latency-tolerant tasks' using the near-limitless solar energy available beyond Earth's atmosphere. Thermal management of these in-space computing systems is a significant focus of the project.


Implications for Scientific and Physical AI

Nvidia's broader engagement with artificial intelligence infrastructure, particularly through partnerships like the one with the National Science Foundation (NSF) for the Open Models for AI (OMAI) initiative, suggests a strategic interest in accessible AI development. OMAI's commitment to releasing models, training data, and code openly contrasts with the proprietary nature of many cloud-based AI services. This open-source approach could stimulate demand for 'on-prem' or localized AI infrastructure, challenging the dominance of major cloud providers. The expansion of AI, especially in scientific contexts, is also expected to drive demand for specialized skills and investment in public-private AI infrastructure.

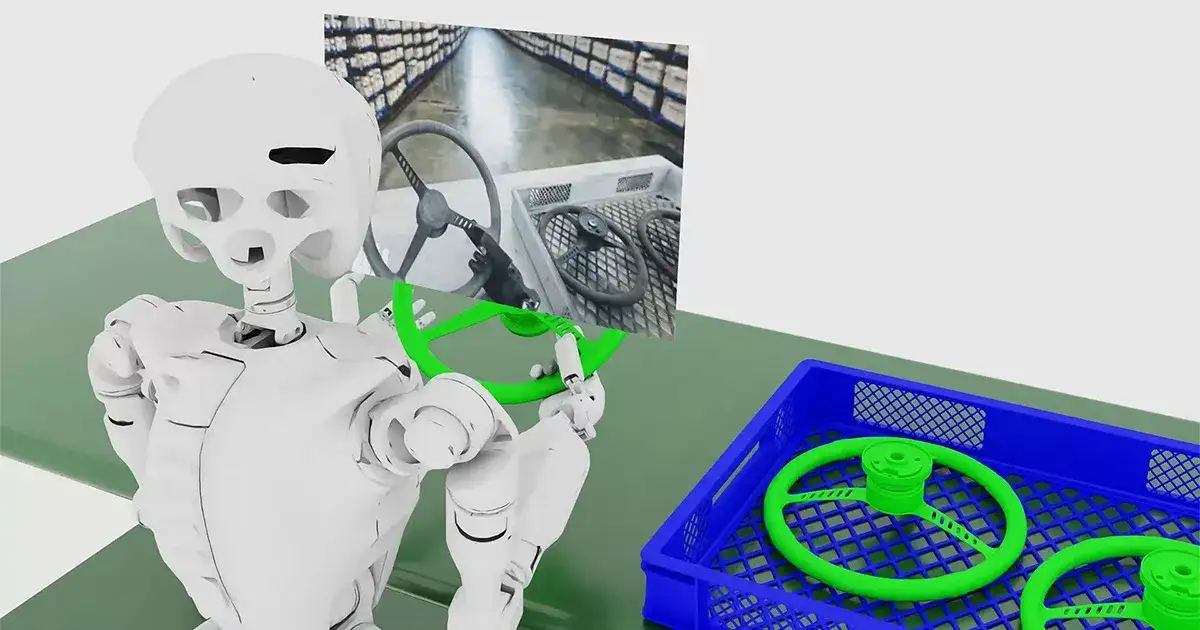

The company's 'Cosmos' platform further illustrates this direction, offering 'world foundation models' designed for physical AI tasks across sectors such as robotics, autonomous vehicles, and vision AI. Cosmos, integrated with Nvidia's Omniverse simulation environment, provides tools for generating video data and training physical AI models, with the aim of accelerating development for industrial applications. Nvidia highlights the performance potential of these models on its latest hardware, such as the Blackwell GB200, for 'post-training and inference workloads.'


The Expanding Domain of AI in Space and National Labs

The development of space-based AI infrastructure aligns with broader efforts by government agencies and national laboratories to harness AI for scientific advancement and national security. NASA, for instance, is actively exploring AI applications, from making Earth-observing satellites 'smarter' to advancing space exploration. Its activities include AI/ML-focused seminars and lectures, indicating that it is integrating AI into its research and operations.

Similarly, national laboratories such as Los Alamos National Laboratory (LANL) are investing heavily in high-performance computing and AI. LANL has partnered with HPE and Nvidia to deploy new supercomputing systems and is running advanced AI models on Nvidia's Grace Hopper Superchips, which pair a Grace CPU with a Hopper GPU. This infrastructure is intended to accelerate research in areas relevant to national security and fundamental science, including the search for neutrinos and the development of AI computing for missions like 'Genesis.' The convergence of interests from private industry and public institutions points toward a growing reliance on sophisticated AI capabilities, both on Earth and in the emerging domain of space.
