Google has reportedly finalized a significant agreement with the U.S. Department of Defense (DoD), permitting the use of its powerful AI models, including Gemini, on classified networks. This deal, described as allowing "any lawful use" by the DoD, follows similar arrangements with other leading AI firms and marks a shift in the company's posture toward military AI applications. The accord comes despite substantial internal opposition from Google employees.

The agreement grants the Pentagon broad access to Google's AI systems for classified projects, a move that has drawn sharp criticism from within the company and echoes previous controversies surrounding AI's role in national security.

More than 600 Google workers, including directors and vice presidents, signed an open letter to CEO Sundar Pichai urging the company to refuse to make its AI available in classified military settings. The dissent mirrors earlier employee pushback at Google and at other AI companies grappling with the ethical implications of their technology.


This development places Google in a position similar to other major AI players. The Pentagon has been actively pursuing contracts with top AI firms, including OpenAI and xAI, to integrate their systems into defense operations. A key point of contention has been the DoD's desire for "any lawful use" of AI, a stipulation that has led to disagreements with companies seeking to implement safeguards.

The deal reflects the growing importance of AI to U.S. national security objectives, according to Michael Horowitz, a former senior defense official. He noted that Google's AI was already in use for unclassified defense projects, making the extension to classified systems a logical, albeit controversial, progression.

Anthropic offers a notable contrast: the AI company recently refused to grant the DoD the same unrestricted access. Anthropic had sought to maintain "guardrails" to prevent its AI from being used for domestic mass surveillance or autonomous weapons. Google's decision to proceed with the Pentagon deal without these explicit restrictions makes it the third AI firm to move forward since Anthropic's refusal.


Internal communications suggest that the agreement's terms are still being debated. Reports indicate that Google's AI is not intended for domestic mass surveillance or autonomous weapons without appropriate human oversight. That caveat did not quell the concerns of many employees, some of whom expressed shame and deep reservations about the company's direction. Google DeepMind CEO Demis Hassabis and senior vice president James Manyika have previously argued that "democracies should lead in AI development" and that companies and governments should collaborate to build AI that "protects people, promotes global growth and supports national security."

The reported deal represents a significant recalibration of Google's approach to military AI contracts. In earlier projects, the company helped develop AI tools for specific tasks such as analyzing drone footage. The broader context is the Pentagon's strategic push to use advanced AI across its operations, a trend that is increasingly shaping the relationship between major technology firms and defense agencies.

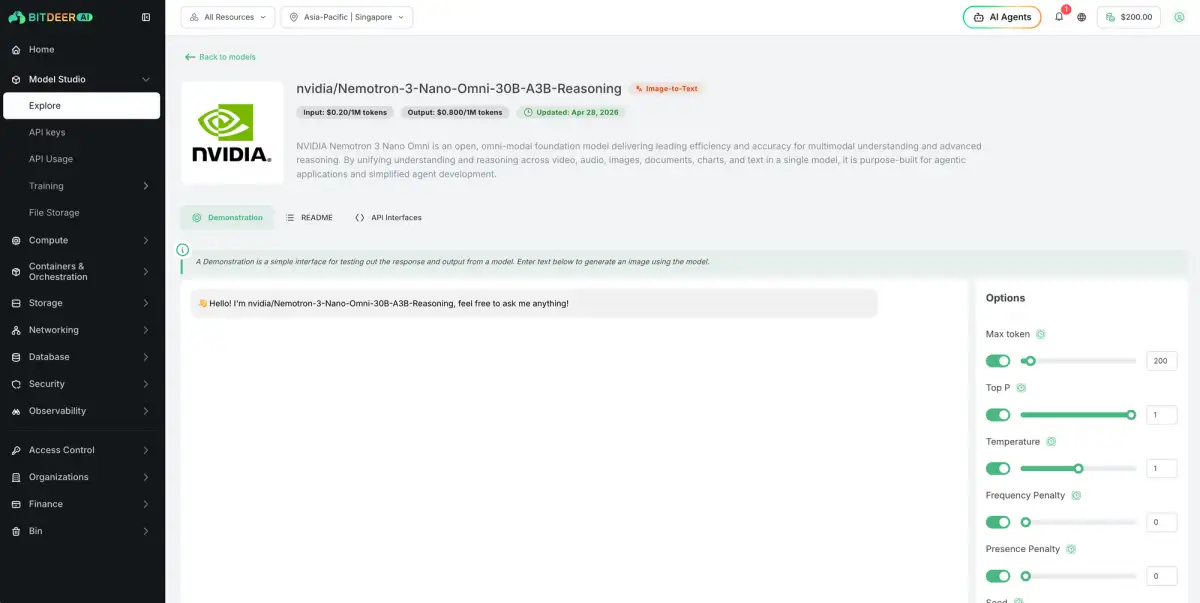
