ADLINK is pushing NVIDIA's latest Blackwell architecture into the cramped, often filthy world of edge computing. By packaging this silicon in the MXM (Mobile PCI Express Module) form factor, the company aims to move heavy AI inference workloads out of clean data centers and onto vibration-heavy factory floors and into the sterile, tight corners of medical imaging rooms.

The transition to Blackwell-based MXM modules signals a refusal to accept the latency of round trips to the cloud, choosing instead to bolt heavy-duty compute directly onto the machines that need it.

The Physicality of the Edge

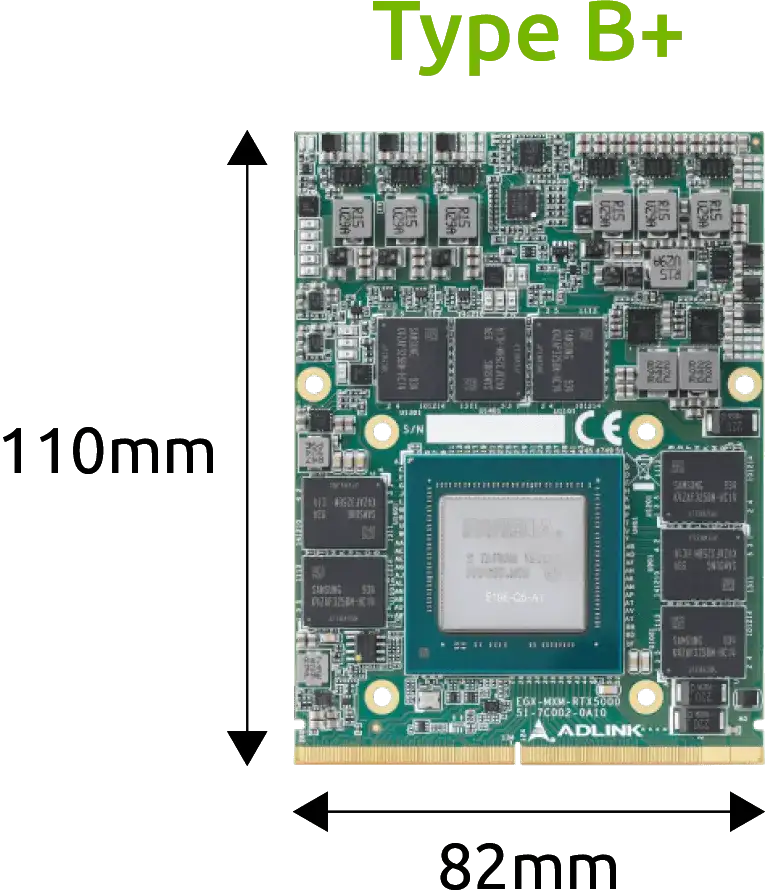

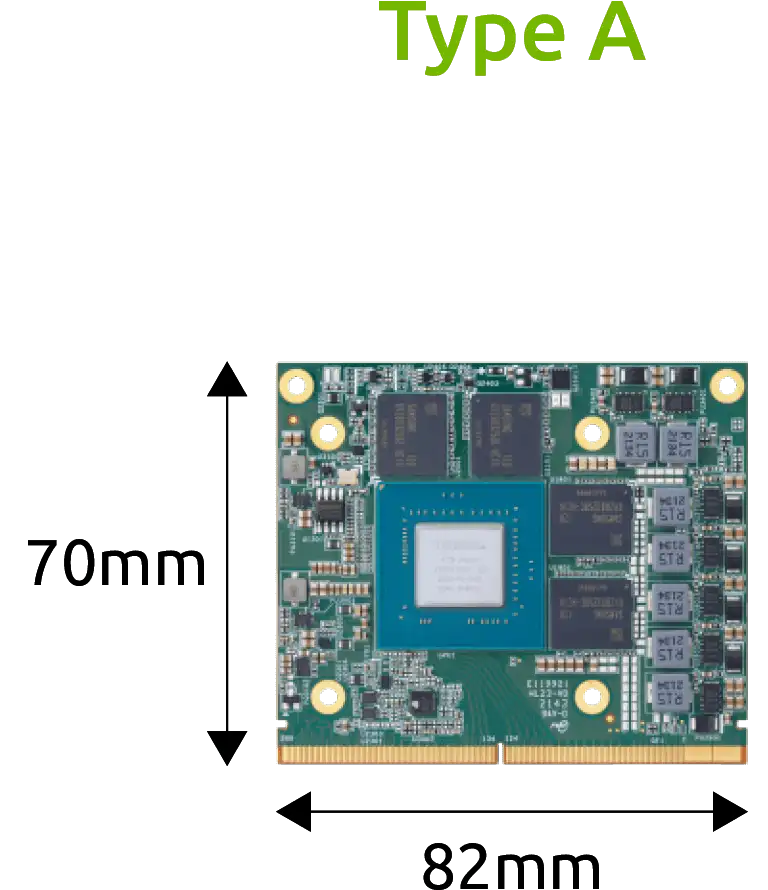

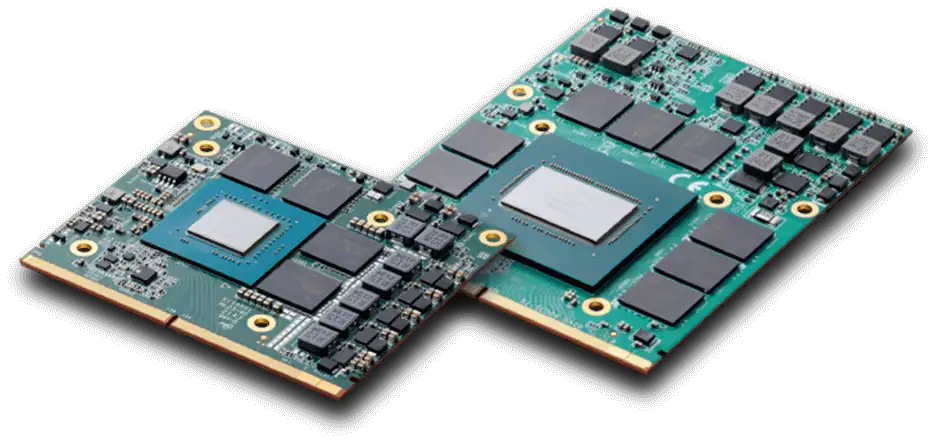

The move targets a specific problem: standard PC hardware is too big and too fragile for the places where work actually happens. The MXM form factor acts as a dense, swappable heart for computers that cannot afford the bulk of traditional graphics cards.

PCIe 4.0 Connectivity: Provides the raw bandwidth needed for real-time video processing.

Thermal Management: Designed for "space-constrained" boxes where air doesn't move easily.

Ruggedization: Hardened against the shocks and tremors common in Smart Manufacturing.

Reduced Latency: Keeping data processing inside the local system, rather than sending it over a network, minimizes I/O lag.

| Architecture | Model | Status | Target Use |
|---|---|---|---|
| Blackwell | New Release | Active | Edge AI / Deep Learning |
| Ada Lovelace | RTX 5000 / 3500 | Active | High-end Visualization |
| Ampere | RTX A4500 / A2000 | Active | Embedded Compute |
| Pascal | P5000 / P3000 | End of Life | Legacy Industrial |

The Lifecycle Grind

While the marketing highlights the "long-term support" of industrial-grade hardware, the product listings show rapid churn. The list of End of Life (EOL) modules, including the once-sturdy Quadro P5000 and earlier RTX 5000 parts, is a reminder that even "rugged" silicon has a short working life before it is replaced by the next power-hungry iteration.


The Blackwell Shift

The integration of Blackwell is not just about raw speed; it is about compute density. As industrial AI moves from simple detection tasks ("see a box") to predictive ones ("predict the machine failure"), the math becomes heavier.

Customization: ADLINK is pitching flexible builds in which the heatsink and power draw are tuned for specific "clunky" machines.

NVIDIA IGX Orin Integration: The modules are designed to slot into larger NVIDIA AI platforms such as IGX Orin, creating a tiered hierarchy of compute power from the module level up to the full rack.

Global Support: Engineering teams are being stationed locally to keep these systems from failing in 24/7 environments where downtime is expensive.

Background: ADLINK has historically focused on connecting raw silicon (NVIDIA, Intel) to the unpolished reality of the industrial world. Its MXM line fills the gap between consumer-grade laptops and high-end server towers, providing a middle ground for machines that must be small but cannot be weak.