Microsoft's recent research output shows a determined push into advanced AI development, with a focus on building scalable agent societies, optimizing large language models (LLMs), and exploring novel quantization techniques. The company is showcasing work on complex, interacting AI agents and on improving the efficiency and reasoning capabilities of its models. This effort spans fundamental research, open-source contributions, and practical application, and points to a multifaceted AI strategy.

The Microsoft Research Forum, a recurring virtual event, has served as a showcase for this work. Sessions have covered topics like "Magentic Marketplace: Testing societies of agents at scale," which reflects an interest in emergent behaviors within multi-agent systems. Discussions on teaching "small language models to think like optimization experts with OptiMind" and on "a new lens on matrix optimization for LLMs" with ARO point to a significant focus on model performance and efficiency. Research into shrinking matrix sizes with "Dion2" also signals an effort to make models more accessible and less computationally demanding.

This drive toward more capable and efficient AI is also evident in the company's open-source contributions. The microsoft/LMOps GitHub repository outlines a range of techniques for improving LLMs, including automatic prompt optimization, longer context windows, and alignment via LLM feedback. Key areas of exploration include "In-Context Demonstration Selection," "Instruction Tuning using Feedback from Large Language Models," and methods for "Lossless Acceleration of LLMs," all aimed at maximizing LLM utility. Papers released in late 2023 and early 2024 show that this investigation into core LLM functionality and performance is ongoing.

On the model architecture front, Microsoft has introduced BitNet, a model featuring "native 1.58-bit weights and 8-bit activations (W1.58A8)." The 1.58-bit figure corresponds to ternary weights: a value drawn from {-1, 0, +1} carries log2(3) ≈ 1.58 bits of information. This approach to quantization is presented as a method for achieving efficient inference, with various model weight formats available for different deployment needs. BitNet represents a tangible effort to reduce the computational footprint of advanced AI models.
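
To make the W1.58A8 idea concrete, here is a minimal sketch in plain Python. It assumes absmean scaling for the ternary weights and per-vector absmax scaling for the 8-bit activations, which is how this style of quantization is commonly described. The function names are hypothetical, and this is an illustration of the arithmetic, not BitNet's actual implementation.

```python
import math

def absmean_ternary_quantize(weights, eps=1e-5):
    """Map float weights to {-1, 0, +1} plus one shared scale (assumed absmean scheme)."""
    # Scale by the mean absolute value, then round and clip to the ternary range.
    scale = sum(abs(w) for w in weights) / len(weights) + eps
    ternary = [max(-1, min(1, round(w / scale))) for w in weights]
    return ternary, scale

def absmax_int8_quantize(activations, eps=1e-5):
    """Map float activations to signed 8-bit integers plus one shared scale (assumed absmax scheme)."""
    peak = max(abs(a) for a in activations) + eps
    quantized = [max(-128, min(127, round(a * 127 / peak))) for a in activations]
    return quantized, peak / 127

def quantized_dot(weights, activations):
    """Dot product computed on the quantized values, then rescaled to floats."""
    w_q, w_scale = absmean_ternary_quantize(weights)
    a_q, a_scale = absmax_int8_quantize(activations)
    # With ternary weights the inner loop only adds, subtracts, or skips.
    accumulator = sum(w * a for w, a in zip(w_q, a_q))
    return accumulator * w_scale * a_scale

# Three possible values per weight carry log2(3) bits, hence "1.58-bit".
BITS_PER_TERNARY_WEIGHT = math.log2(3)
```

The efficiency argument rests on the accumulation step: because each weight is -1, 0, or +1, the multiply in a matrix product reduces to an add, a subtract, or a skip over 8-bit integers.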

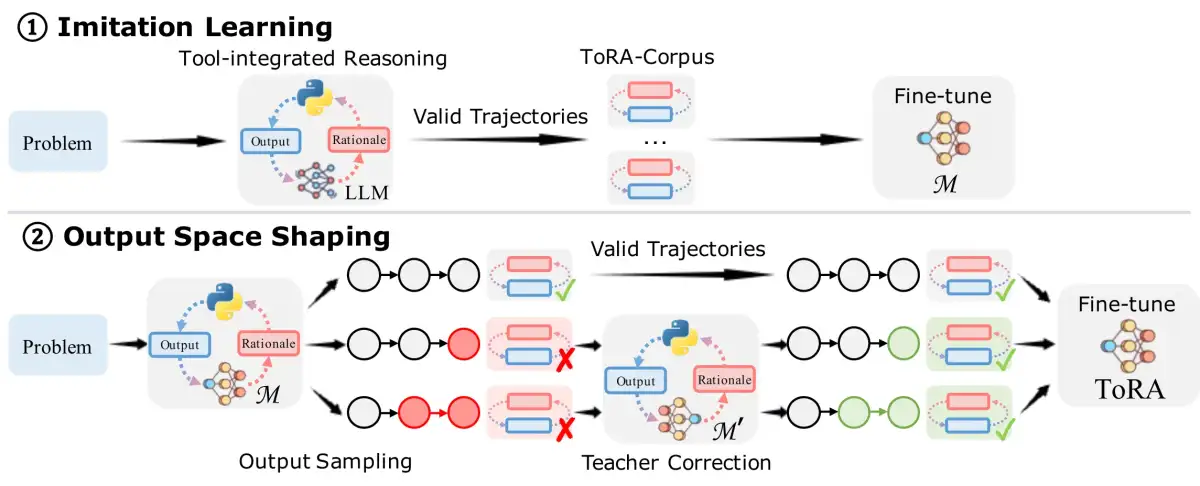

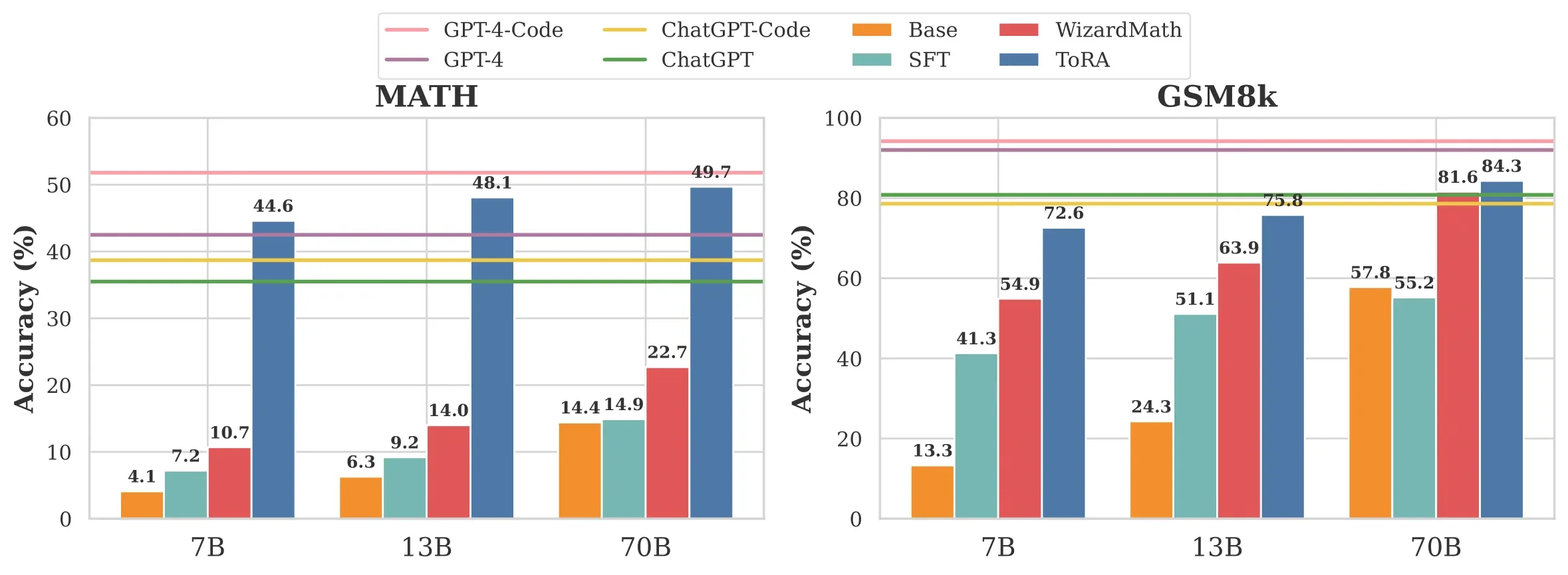

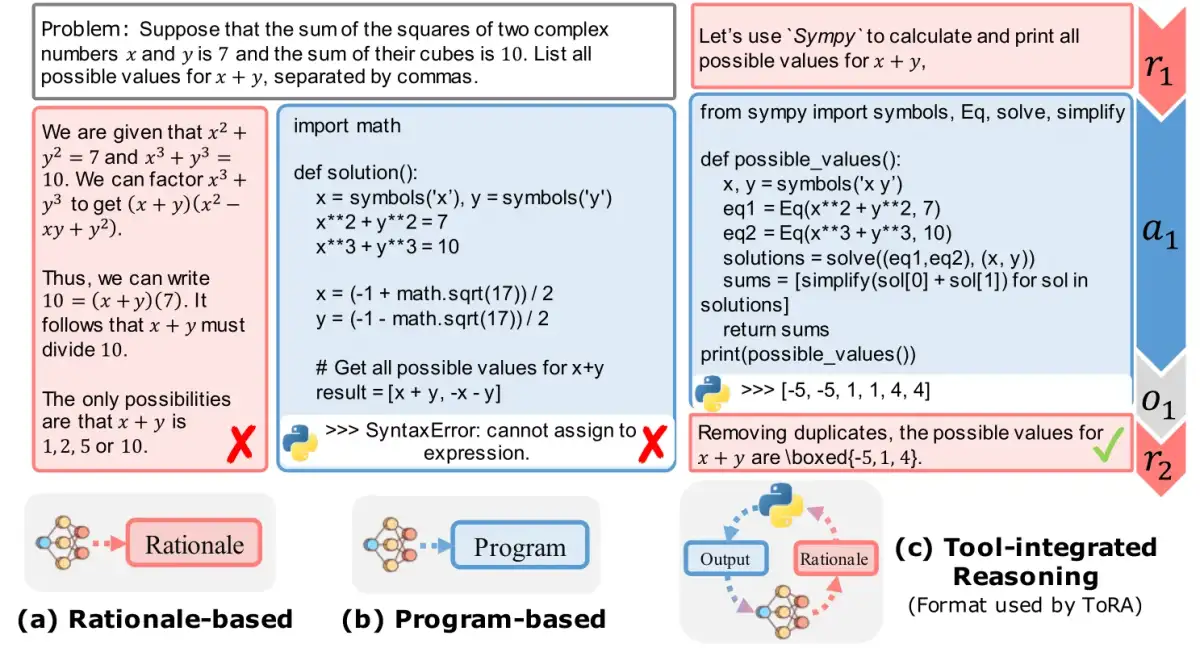

Beyond model optimization, Microsoft is also developing tool-integrated reasoning agents. The "ToRA" project, detailed on GitHub, focuses on enabling LLM agents to tackle complex mathematical reasoning problems by interacting with external tools. The project reports performance metrics on a range of mathematical tasks, which serve as a reference point for agentic problem solving.
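
The general pattern behind tool-integrated reasoning can be sketched as a loop in which the model alternates between writing a rationale, emitting a program, and reading back the program's output. The sketch below is an assumption-laden illustration, not ToRA's code: `solve_with_tools`, `run_python_tool`, the step dictionary format, and the scripted stand-in for the model are all hypothetical.

```python
import contextlib
import io

def run_python_tool(code):
    """Execute a snippet and return what it printed (the 'tool output')."""
    buffer = io.StringIO()
    try:
        with contextlib.redirect_stdout(buffer):
            exec(code, {})  # illustration only; a real system would sandbox this
    except Exception as exc:
        return f"Error: {exc!r}"
    return buffer.getvalue().strip()

def solve_with_tools(question, generate, max_rounds=3):
    """Alternate model steps and tool calls until the model answers without code."""
    transcript = question
    for _ in range(max_rounds):
        step = generate(transcript)  # hypothetical model call
        transcript += "\n" + step["text"]
        if step.get("code") is None:
            return step["text"], transcript
        # Feed the tool result back so the next step can use it.
        transcript += "\n[tool output] " + run_python_tool(step["code"])
    return None, transcript

def scripted_model(transcript):
    """Hypothetical stand-in for an LLM: first writes a program, then reads its result."""
    if "[tool output]" not in transcript:
        return {"text": "Compute the sum with a program.",
                "code": "print(sum(range(1, 101)))"}
    result = transcript.rsplit("[tool output] ", 1)[1].strip()
    return {"text": f"The answer is {result}.", "code": None}

answer, transcript = solve_with_tools("What is 1 + 2 + ... + 100?", scripted_model)
```

Offloading the arithmetic to an interpreter means the model only has to set the problem up correctly, which is the motivation for pairing language models with external tools on mathematical tasks.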

Microsoft leadership describes the pace of AI advancement as rapid, almost overwhelming. Peter Lee, President of Microsoft Research, noted, "Today we are seeing so much AI research happening at the speed of conversation… we’ve made tremendous progress" through openness and access. His remarks point to a strategy that balances internal research with external collaboration and the public release of findings and tools, aiming both to advance the field and to benefit from its momentum.

The broader context of Microsoft's AI work includes repositories like microsoft/AI, which catalogs efforts in deploying machine learning models on platforms like Kubernetes and Azure ML for both real-time and batch scoring. These efforts encompass classic ML, deep learning, computer vision, and natural language processing, indicating a comprehensive approach to integrating AI across various domains. The company's presence at events like CHI 2026 further suggests a commitment to exploring the human-computer interaction aspects of these evolving AI technologies.