Running sophisticated large language models (LLMs) directly inside consumer video games, where they could drive novel interactions and NPC behavior, still faces substantial hurdles. While running smaller AI models locally is becoming more accessible, integrating them seamlessly into game environments remains a complex engineering challenge, with no widespread deployment in sight.

The primary bottleneck isn't the availability of AI models that can operate on personal hardware, but rather the technical expertise and infrastructure required to package and run them reliably within existing game development pipelines. Developers grapple with how to bundle these models, ensure they function across diverse user systems, and manage their resource demands without compromising gameplay performance. This is compounded by the inherent variability in model architectures and their specific configuration needs.

Hardware Readiness Signals an Emerging Capability

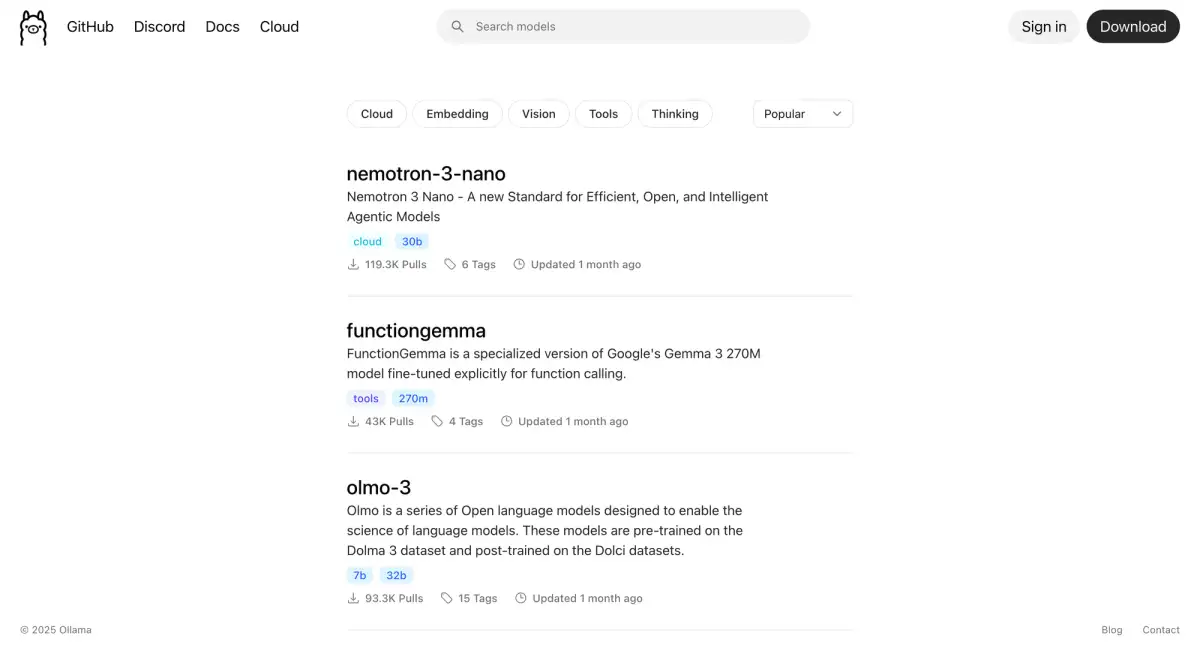

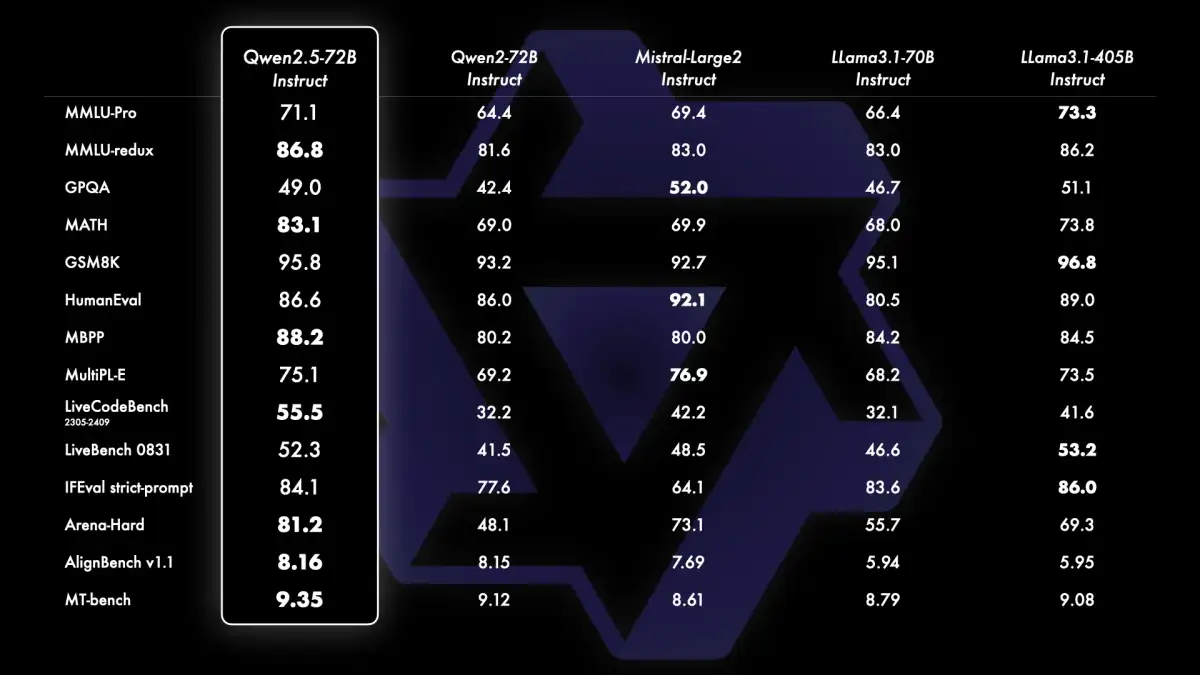

The technical groundwork for running AI models on personal computers is steadily solidifying. Guides detailing GPU setup for local AI model execution are emerging, suggesting that hardware capable of handling models in the 7B to 13B parameter range is becoming commonplace.

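Larger models, such as those in the 70B parameter class, become more manageable through techniques like quantization, but they remain demanding: at 4-bit precision the weights alone occupy roughly 35GB, which exceeds a single 24GB consumer GPU unless part of the model is offloaded to system memory or a more aggressive quantization is used.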
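A rough way to sanity-check figures like these is to multiply the parameter count by the bytes stored per weight. The sketch below does only that; the function name is illustrative, and it deliberately ignores the KV cache, activations, and runtime overhead, so real requirements will be higher than what it reports.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes).

    Assumption: every weight is stored at the same bit width. Real quantization
    formats mix precisions and add per-block metadata, so treat this as a floor.
    """
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # Compare the model sizes discussed above at common precisions.
    for size in (7, 13, 70):
        for bits in (16, 8, 4):
            print(f"{size}B @ {bits}-bit: ~{weight_memory_gb(size, bits):.1f} GB")
```

Comparing the result against the VRAM actually available on a target machine gives a first-pass answer to whether a given model and precision is even a candidate for that system.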

Crucially, running models locally means players' data and prompts stay on their own machines, a significant privacy advantage.

Furthermore, local execution avoids the rate limits often imposed by cloud-based AI services, so the volume of interactions is bounded by the player's own hardware rather than by a provider's quota.

The Software and Integration Conundrum

Despite hardware improvements, the path to integrating these models into games is far from straightforward. The challenge extends beyond simply having the processing power.

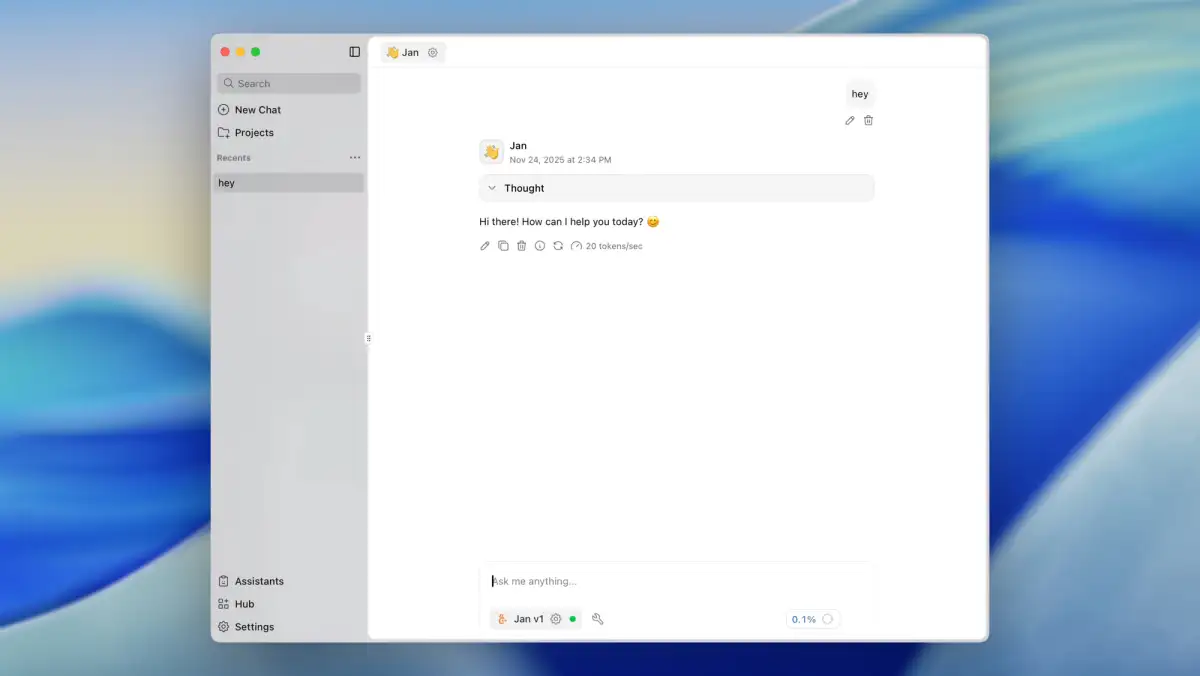

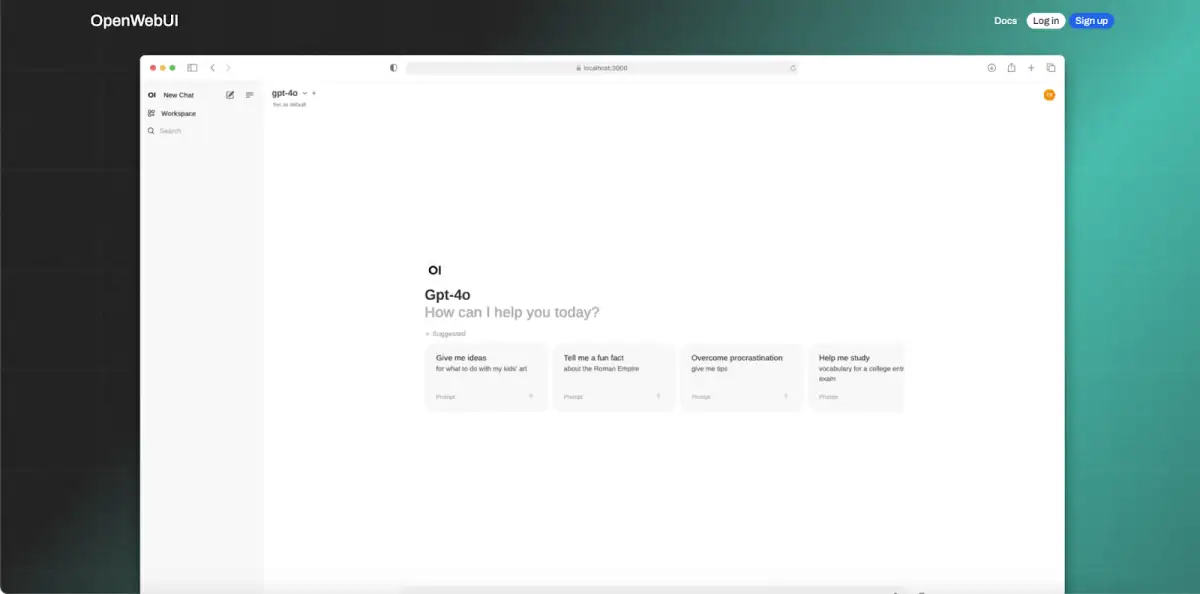

Developers need robust software solutions to interface with these local AI models. Tools and frameworks are being developed to facilitate this, but they are often experimental and require significant customization.
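One common shape for such an interface is a background worker that keeps slow text generation off the game's main loop. The following is a minimal sketch under stated assumptions: `DialogueWorker` and `fake_generate` are hypothetical names, and `fake_generate` stands in for whatever call a real local inference library exposes. Whether a thread actually frees the main loop depends on that library releasing the interpreter lock during inference.

```python
import queue
import threading
from typing import Callable, Optional


class DialogueWorker:
    """Runs a blocking text-generation callable on a background thread."""

    def __init__(self, generate: Callable[[str], str]) -> None:
        self._generate = generate
        self._requests: "queue.Queue[Optional[str]]" = queue.Queue()
        self._replies: "queue.Queue[str]" = queue.Queue()
        self._thread = threading.Thread(target=self._run, daemon=True)
        self._thread.start()

    def _run(self) -> None:
        while True:
            prompt = self._requests.get()
            if prompt is None:  # sentinel value: shut the worker down
                break
            self._replies.put(self._generate(prompt))

    def submit(self, prompt: str) -> None:
        """Queue a prompt; returns immediately."""
        self._requests.put(prompt)

    def poll(self) -> Optional[str]:
        """Return a finished reply if one is ready, else None. Never blocks."""
        try:
            return self._replies.get_nowait()
        except queue.Empty:
            return None

    def close(self) -> None:
        """Finish queued work, then stop the background thread."""
        self._requests.put(None)
        self._thread.join()


def fake_generate(prompt: str) -> str:
    # Hypothetical stand-in for a real local model call.
    return f"NPC reply to: {prompt}"
```

In a game loop, `submit` would be called when the player speaks to an NPC and `poll` once per frame, so a slow generation delays the reply rather than the frame.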

The "packaging" of models is a key concern, as highlighted in discussions among developers. Reliably embedding these AI components into game executables or their dependencies, ensuring cross-platform compatibility and consistent performance, is a substantial undertaking.
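One small piece of the packaging problem is confirming that the model file shipped with a game arrived intact on the player's machine. The sketch below assumes a hypothetical setup in which the game build records a SHA-256 digest for each bundled model file; the function names are illustrative, and it does not address the harder questions of cross-platform runtimes or performance.

```python
import hashlib
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so multi-gigabyte model files are never fully loaded."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_model_file(path: Path, expected_sha256: str) -> bool:
    """Return True only if the file exists and matches the recorded digest."""
    return path.is_file() and sha256_of(path) == expected_sha256.lower()
```

A launcher could run this check once at install or update time and fall back to scripted NPC dialogue if the file is missing or corrupted.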

Furthermore, each model has its own requirements, including its tokenization scheme and whether it was trained for tasks such as fill-in-the-middle (FIM), which relies on model-specific special tokens. These differences add layers of complexity to the development process.
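To illustrate why this matters, a FIM prompt is typically assembled from the text before a gap, the text after it, and sentinel tokens that tell the model where the gap is. The sketch below is an assumption-laden example: the model names are hypothetical placeholders, the sentinel strings are illustrative only, and the prefix-suffix-middle ordering is just one arrangement. The correct tokens and ordering must come from each model's own documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FimTemplate:
    """Sentinel tokens for one model's fill-in-the-middle prompt format."""

    prefix_token: str
    suffix_token: str
    middle_token: str

    def build(self, prefix: str, suffix: str) -> str:
        # Prefix-suffix-middle ordering: the model is expected to generate
        # the missing middle after the final sentinel.
        return (
            f"{self.prefix_token}{prefix}"
            f"{self.suffix_token}{suffix}"
            f"{self.middle_token}"
        )


# Hypothetical model names with illustrative sentinel strings; check each
# model's documentation for the real values before relying on them.
TEMPLATES = {
    "model_a": FimTemplate("<fim_prefix>", "<fim_suffix>", "<fim_middle>"),
    "model_b": FimTemplate("<PRE>", "<SUF>", "<MID>"),
}

if __name__ == "__main__":
    # Ask for an NPC line that fits between a fixed opening and closing.
    for name, template in TEMPLATES.items():
        print(name, "->", template.build("Welcome, ", " Safe travels."))
```

Keeping the tokens in per-model data rather than hard-coding them means swapping the bundled model changes a table entry, not the prompt-building code.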

A Gradual Evolution, Not an Overnight Shift

While the excitement surrounding AI's potential in gaming is palpable, the reality is that widespread adoption of local LLMs will likely be a gradual evolution rather than an abrupt revolution.

Early implementations might be experimental, perhaps limited to specific modes or indie titles that can absorb the development overhead.

Larger, more established game studios will likely adopt these technologies more cautiously, prioritizing stability and a polished user experience.

The availability of smaller, highly optimized models specifically designed for game integration could accelerate this process.

Background and Context

Discussions around running AI models locally have gained traction as advancements in machine learning hardware and model optimization techniques continue. Publications and online forums dedicated to AI and computing have become venues for exploring these possibilities. The evolution of graphics processing units (GPUs) from mere gaming hardware to powerful parallel processors capable of handling complex AI computations is a fundamental shift underpinning this trend. Simultaneously, research into more efficient AI architectures and inference techniques is making it feasible to run sophisticated models on less powerful hardware than previously imagined. The integration of AI into interactive media, particularly video games, has long been a theoretical pursuit, with LLMs representing a significant leap in the potential for dynamic and responsive game worlds.
