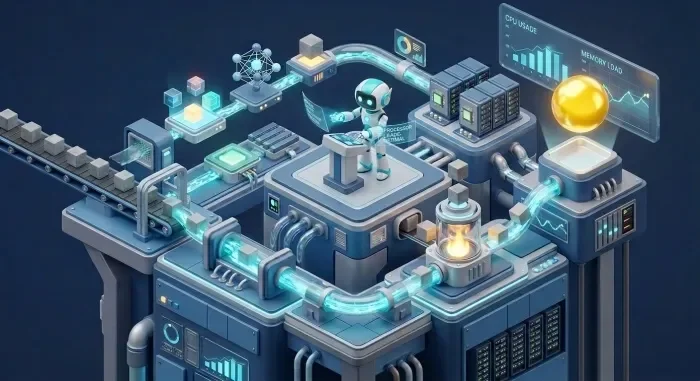

A new approach to knowledge management, dubbed the "LLM Wiki," is gaining traction, promising automated, AI-maintained markdown knowledge bases. This framework, popularized by Andrej Karpathy, leverages large language models to transform disparate sources into a structured, queryable resource. The core idea involves an immutable layer for raw data, an AI-generated layer for wiki pages, and a schema file to guide the AI. Users interact by consuming the output rather than manually curating it.

At its core, the AI generates and maintains wiki pages from sources the user provides. A central wiki/index.md file acts as the master catalog of that content. New sources typically enter through an ingest command, which also refreshes the graph layer if one is present. Because the AI keeps the pages up to date itself, the approach is meant to reduce manual curation effort.
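The ingest step described above can be sketched roughly as follows. This is a minimal illustration, not the framework's actual command: the `raw/` directory name, the `ingest` and `rebuild_index` functions, and the `summarize` callable (a stand-in for the LLM call) are all assumptions made for the example.

```python
import shutil
from pathlib import Path
from typing import Callable


def rebuild_index(wiki_dir: Path) -> None:
    """Rewrite wiki/index.md so it lists every page in the wiki directory."""
    pages = sorted(p for p in wiki_dir.glob("*.md") if p.name != "index.md")
    lines = ["# Index", ""] + [f"- [{p.stem}]({p.name})" for p in pages]
    (wiki_dir / "index.md").write_text("\n".join(lines) + "\n", encoding="utf-8")


def ingest(source: Path, root: Path, summarize: Callable[[str], str]) -> Path:
    """Copy a source into raw/, write a wiki page for it, and refresh the index.

    `summarize` is a hypothetical placeholder for whatever LLM call
    turns raw text into a markdown page.
    """
    raw_dir, wiki_dir = root / "raw", root / "wiki"
    raw_dir.mkdir(parents=True, exist_ok=True)
    wiki_dir.mkdir(parents=True, exist_ok=True)

    raw_copy = raw_dir / source.name
    if not raw_copy.exists():  # the raw layer is treated as immutable: never overwrite
        shutil.copy2(source, raw_copy)

    page = wiki_dir / f"{source.stem}.md"
    page.write_text(summarize(raw_copy.read_text(encoding="utf-8")), encoding="utf-8")

    rebuild_index(wiki_dir)
    return page
```

Passing the model call in as a plain function keeps the sketch runnable without any particular LLM client, and makes it easy to swap in a real one.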

Technical Underpinnings and Augmentations

The framework is being integrated into various tools and platforms. A Claude Code plugin brings the LLM Wiki pattern into projects, with commands for installation and ingestion. Once the plugin is installed, the wiki lives in the project's working directory and remains independent of the plugin itself. The implementation appears to rely on scripts at specific locations to handle these functions.

Further development is focusing on enhancing the LLM Wiki's capabilities through knowledge graphs. Tools like InfraNodus are being used to transform the wiki into a network of ideas, aiming to steer the AI's reasoning across an ontology graph. This integration addresses perceived shortcomings in the original framework, such as a lack of "self-awareness and evolution." By incorporating knowledge graphs, the goal is to create a more "self-evolving research system."
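To make the "network of ideas" notion concrete, here is a minimal sketch of how wiki pages could be turned into a graph. It does not use or reproduce InfraNodus; it assumes pages reference each other with `[[Page]]`-style wikilinks, and the `build_graph` and `most_connected` helpers are hypothetical names chosen for this example.

```python
import re

# Matches [[Page]], [[Page|alias]] and [[Page#Section]]; captures only the page title.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def build_graph(pages: dict[str, str]) -> dict[str, set[str]]:
    """Build an undirected link graph from a mapping of page title to page body."""
    graph: dict[str, set[str]] = {}
    for title, body in pages.items():
        graph.setdefault(title, set())  # keep pages that have no links at all
        for target in WIKILINK.findall(body):
            target = target.strip()
            if target != title:  # ignore self-links
                graph[title].add(target)
                graph.setdefault(target, set()).add(title)
    return graph


def most_connected(graph: dict[str, set[str]], top: int = 3) -> list[str]:
    """Return the page titles with the most neighbours, ties broken alphabetically."""
    return sorted(graph, key=lambda t: (-len(graph[t]), t))[:top]
```

A graph like this is only a starting point: hub pages and isolated pages are the kind of signal a graph layer could hand back to the model when deciding what to expand or connect next.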

User Adoption and Ecosystem

Beyond code plugins, the LLM Wiki is being adapted for personal knowledge management systems. Efforts include building "AI-powered second brains" using applications like Obsidian, where the LLM handles the organizational tasks. This user-driven approach emphasizes that once information is saved, it is not manually edited, allowing the wiki to grow and reveal connections organically.

The ecosystem also includes extensions and refinements to the core concept. One proposed solution, Penfield, offers persistent memory and a knowledge graph for AI agents, aiming for consistent relationships across different tools. This includes native support for specific relationship types, enabling an agent to manage a vault with minimal user intervention. Periodic "health checks" of the wiki by the AI are also part of the maintenance cycle, with suggestions for human participation to improve the overall quality.
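A periodic health check of the kind mentioned above might look something like the sketch below. It is an illustration under stated assumptions rather than any tool's real behaviour: it assumes a flat wiki directory of `.md` files linked with standard markdown links, and the `health_check` function and its two report categories are invented for this example.

```python
import re
from pathlib import Path

# Matches [text](page.md) and [text](page.md#section); captures the file name.
MD_LINK = re.compile(r"\[[^\]]*\]\(([^)#\s]+\.md)(?:#[^)]*)?\)")


def health_check(wiki_dir: Path) -> dict[str, list[str]]:
    """Report links to missing pages and pages that index.md does not list."""
    pages = {p.name: p.read_text(encoding="utf-8") for p in wiki_dir.glob("*.md")}
    index_links = set(MD_LINK.findall(pages.get("index.md", "")))

    broken = sorted(
        f"{name} -> {target}"
        for name, body in pages.items()
        for target in MD_LINK.findall(body)
        if target not in pages  # link points at a file that does not exist
    )
    unindexed = sorted(
        name for name in pages if name != "index.md" and name not in index_links
    )
    return {"broken_links": broken, "unindexed_pages": unindexed}
```

The report is a natural place for the human participation the article mentions: a person can review the flagged items before the AI is asked to repair them.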

Origins and Context

The LLM Wiki concept was introduced by Andrej Karpathy in early April 2026. The framework is designed to streamline the conversion of notes, papers, and data into a structured, queryable knowledge base. This approach stands in contrast to traditional manual curation methods, positing that an LLM can effectively manage the complexities of a growing knowledge repository. The basic architecture involves organizing data into distinct directories: one for immutable raw sources, another for AI-generated wiki content, and a schema file (often named CLAUDE.md) that dictates how the AI should operate. The user's role shifts from editor to consumer, interacting with the AI-generated output for answers.
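The three-part layout can be scaffolded in a few lines. This is a rough sketch: `wiki/index.md` and `CLAUDE.md` are named in the description above, while the `raw/` directory name, the `scaffold` function, and the schema text are placeholders assumed for illustration, not Karpathy's actual files.

```python
from pathlib import Path

# Placeholder schema text; the real instructions would be written by the user.
SCHEMA_TEMPLATE = """\
# Wiki schema (illustrative placeholder)
- Treat everything under raw/ as read-only.
- Write and update pages only under wiki/.
- Keep wiki/index.md listing every page.
"""


def scaffold(root: Path) -> None:
    """Create the raw/ and wiki/ directories plus starter schema and index files."""
    (root / "raw").mkdir(parents=True, exist_ok=True)   # immutable sources
    (root / "wiki").mkdir(parents=True, exist_ok=True)  # AI-generated pages

    schema = root / "CLAUDE.md"
    if not schema.exists():  # never clobber a schema the user already wrote
        schema.write_text(SCHEMA_TEMPLATE, encoding="utf-8")

    index = root / "wiki" / "index.md"
    if not index.exists():
        index.write_text("# Index\n", encoding="utf-8")
```

Calling `scaffold(Path("my-wiki"))` twice is safe: existing files are left untouched, which matches the idea that the user supplies sources and rules while the AI owns the generated pages.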
