Running large language models (LLMs) locally, together with speech processing tools, is emerging as a viable alternative to cloud-based AI services, and it puts GPU hardware once bought mainly for gaming to new use. The trend is driven by a desire for better privacy, lower cost, and greater control over AI deployments. A typical setup runs speech-to-text (STT) and text-to-speech (TTS) engines alongside the LLM itself, directly on consumer-grade hardware.

VOICE AND TEXT INTERACTION CHAINS

The process typically has four steps: the user speaks, an STT model transcribes the speech, the LLM processes the resulting text, and a TTS engine vocalizes the LLM's response. Some approaches streamline this by using a single speech-to-speech model, which removes the need for separate STT and TTS components in the chain. Whisper, a prominent open-source speech recognition model, is frequently cited for its ability to handle real-time transcription tasks.
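The chain described above can be sketched as three pluggable stages. This is a minimal illustration, not a real deployment: the `VoicePipeline` class and the `fake_stt`, `fake_llm`, and `fake_tts` functions are hypothetical stand-ins, and in practice each would wrap an actual engine (for example, a Whisper model for transcription).

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VoicePipeline:
    """Chains STT -> LLM -> TTS. Each stage is any callable with the given shape."""
    transcribe: Callable[[bytes], str]   # STT: audio bytes -> text
    generate: Callable[[str], str]       # LLM: prompt text -> reply text
    synthesize: Callable[[str], bytes]   # TTS: reply text -> audio bytes

    def respond(self, audio_in: bytes) -> bytes:
        text = self.transcribe(audio_in)   # 1. transcribe the user's speech
        reply = self.generate(text)        # 2. let the LLM produce a reply
        return self.synthesize(reply)      # 3. vocalize the reply


# Hypothetical stand-in stages so the sketch runs without any models installed.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_llm(prompt: str) -> str:
    return f"You said: {prompt}"


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")


pipeline = VoicePipeline(fake_stt, fake_llm, fake_tts)
audio_out = pipeline.respond(b"hello")
```

Keeping each stage behind a plain callable means a single speech-to-speech model could replace the whole chain by implementing `respond` directly, without changing the calling code.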

OPTIMIZING MODELS FOR CONSUMER HARDWARE

LLMs are resource-hungry, especially at larger parameter counts, so techniques like quantization are crucial for fitting them onto consumer hardware. Frameworks and libraries such as llama.cpp, Ollama, HuggingFace Transformers, and vLLM are commonly used to load and serve the models.


Quantization, including 4-bit and 8-bit options, allows larger models to run on hardware with limited VRAM, such as 16 GB GPUs. It works by storing model weights at lower numerical precision, which shrinks their memory footprint, typically with only a small loss in output quality.
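To make the idea concrete, here is a minimal sketch of symmetric "absmax" quantization in plain Python. It is an illustrative simplification and an assumption on our part, not the scheme any particular library uses; production methods such as AWQ or the formats in llama.cpp quantize per group of weights and add further refinements. The function names are hypothetical.

```python
def quantize_absmax(weights: list[float], bits: int = 8) -> tuple[list[int], float]:
    """Map floats to signed integers of the given bit width using one shared scale."""
    qmax = 2 ** (bits - 1) - 1                  # 127 for 8-bit, 7 for 4-bit
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    # Round to the nearest integer level and clamp to the representable range.
    quantized = [max(-qmax, min(qmax, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the integer levels."""
    return [q * scale for q in quantized]


weights = [0.12, -0.5, 0.33, 0.9, -0.07]

q8, scale8 = quantize_absmax(weights, bits=8)
q4, scale4 = quantize_absmax(weights, bits=4)

# Worst-case reconstruction error at each bit width.
err8 = max(abs(w - r) for w, r in zip(weights, dequantize(q8, scale8)))
err4 = max(abs(w - r) for w, r in zip(weights, dequantize(q4, scale4)))
```

The 4-bit version uses only 15 integer levels, so its reconstruction error is larger than the 8-bit version's. That is the trade-off between memory savings and fidelity.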

Models can also be exported to the ONNX (Open Neural Network Exchange) format and then optimized with TensorRT for efficient inference on NVIDIA GPUs.

Specific models, like those from the Qwen series (e.g., Qwen2.5-72B-Instruct-AWQ), are downloaded and served, sometimes requiring careful handling of large file sizes via tools like git-lfs.

HARDWARE CONSIDERATIONS AND ADVANCED USES

Running AI locally necessitates careful hardware planning.

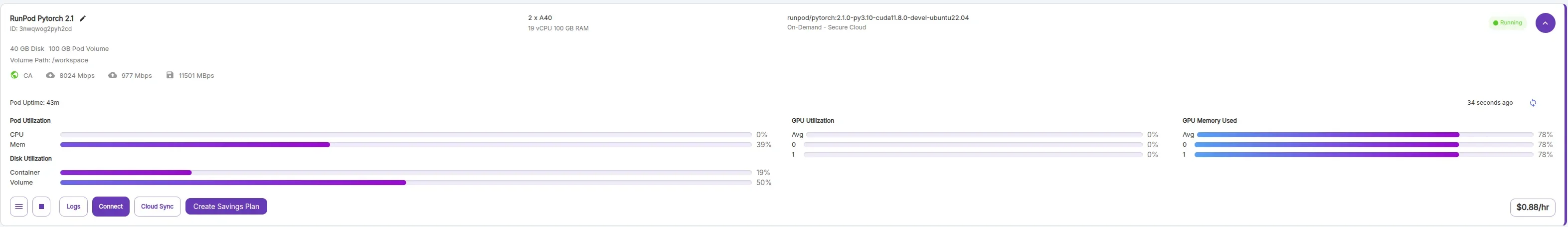

GPU memory (VRAM) is a primary bottleneck. Larger models, particularly those exceeding 70 billion parameters, require substantial VRAM, often more than a single GPU offers. Solutions include splitting the model across multiple GPUs for parallel serving or opting for heavily quantized models.
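A rough back-of-the-envelope estimate shows why. The sketch below computes the memory needed for the weights alone (parameters times bits per weight, divided by 8 to get bytes) and then adds a margin for the KV cache, activations, and runtime buffers. The 20% `overhead_fraction` is an assumed placeholder, not a measured value, and real overhead varies with context length and serving framework. The function names are hypothetical.

```python
import math


def weight_memory_gb(n_params_billion: float, bits_per_weight: int) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params_billion * bits_per_weight / 8


def gpus_needed(
    n_params_billion: float,
    bits_per_weight: int,
    vram_gb_per_gpu: float,
    overhead_fraction: float = 0.2,  # assumed margin for KV cache and activations
) -> int:
    """Minimum number of identical GPUs whose combined VRAM covers the estimate."""
    total_gb = weight_memory_gb(n_params_billion, bits_per_weight) * (1 + overhead_fraction)
    return math.ceil(total_gb / vram_gb_per_gpu)


# A 72-billion-parameter model on 16 GB cards, at 16-bit and 4-bit precision.
fp16_weights_gb = weight_memory_gb(72, 16)
int4_weights_gb = weight_memory_gb(72, 4)
fp16_gpu_count = gpus_needed(72, 16, vram_gb_per_gpu=16)
int4_gpu_count = gpus_needed(72, 4, vram_gb_per_gpu=16)
```

Under these assumptions, 4-bit quantization cuts the weight footprint of a 72B model to a quarter of its 16-bit size, but the result still exceeds one 16 GB card, which is why multi-GPU serving and quantization are often combined.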

CPU vs. GPU allocation can be strategic; for instance, running TTS on the CPU while offloading STT to the GPU is one configuration.

Beyond basic chat interactions, potential applications include building custom voice-cloning pipelines, training domain-specific models for tasks like troubleshooting, and integrating AI into home automation systems for a "smart home" experience.

BACKGROUND

The shift towards local LLM execution follows a broader trend of reclaiming computational tasks from cloud providers. Historically, powerful GPUs were primarily associated with PC gaming. However, with the proliferation of advanced AI models and the increasing accessibility of frameworks designed for local deployment, these graphics cards are finding new utility in personal AI labs. This evolution points to a future where sophisticated AI capabilities are not necessarily tethered to remote servers, fostering a more decentralized and personalized AI ecosystem. The emphasis on privacy-focused and cost-effective solutions underscores the growing demand for user-controlled AI.