The pursuit of generative AI performance is currently undergoing a structural shift. Engineering teams are finding that the most efficient way to boost accuracy and reduce costs is not through the procurement of larger, more expensive language models, but through system architecture.

Core Insight: Intelligent systems rely on constrained model invocation. Current engineering consensus suggests that only about half of the agents in a system (roughly two of every four) should need to call an LLM directly; the rest can run on deterministic logic.
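As a rough illustration of constrained invocation, the sketch below routes requests to a small registry of agents in which only some call a model. Everything here is hypothetical: `fake_llm` stands in for a real model client, and the agent names, intents, and order data are invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model client call."""
    return f"[model answer to: {prompt}]"


@dataclass
class Agent:
    name: str
    handle: Callable[[str], str]
    uses_llm: bool  # marks whether this agent ever invokes the model


def lookup_order_status(text: str) -> str:
    # Deterministic agent: a plain dictionary lookup, no model call.
    orders = {"A100": "shipped", "B200": "processing"}
    parts = text.split()
    order_id = parts[-1] if parts else ""
    return orders.get(order_id, "unknown order")


def summarize(text: str) -> str:
    # Model-backed agent: the only path in this sketch that reaches the LLM.
    return fake_llm(f"Summarize: {text}")


AGENTS: Dict[str, Agent] = {
    "status": Agent("status", lookup_order_status, uses_llm=False),
    "summary": Agent("summary", summarize, uses_llm=True),
}


def route(intent: str, text: str) -> str:
    """Dispatch a request to the agent registered for the given intent."""
    return AGENTS[intent].handle(text)


def llm_share() -> float:
    """Fraction of registered agents that invoke the model."""
    return sum(agent.uses_llm for agent in AGENTS.values()) / len(AGENTS)
```

Tracking a figure like `llm_share()` is one way a team might check whether model calls are creeping into agents that could stay deterministic.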

Structural Priorities

The prevailing methodology for production-grade AI involves moving away from raw model upgrades toward:

Contextual Grounding: Prompts must be anchored in reliable, current data to prevent hallucination.

Operational Efficiency: Systems are increasingly built upon foundational cloud services—such as Amazon Bedrock, AWS Lambda, and DynamoDB—to handle enterprise-specific requirements like compliance, security, and SSO.

Architectural Modularity: Developers are moving toward 'composability,' treating models as interchangeable components within a broader workflow rather than as a singular source of truth.
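Two of these priorities, grounding and modularity, can be sketched together. The example below assumes a minimal `TextModel` interface and two stub models; none of these names come from a real SDK. A real system would place an actual client (for example one backed by a hosted model service) behind the same interface.

```python
from typing import List, Protocol


class TextModel(Protocol):
    """Assumed minimal interface that any interchangeable model must satisfy."""

    def complete(self, prompt: str) -> str: ...


def build_grounded_prompt(question: str, documents: List[str]) -> str:
    """Anchor the prompt in retrieved documents rather than model memory."""
    context = "\n".join(f"- {doc}" for doc in documents)
    return (
        "Answer using only the context below. "
        "If the context is insufficient, say so.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


class StubModelA:
    """Hypothetical model; reports how much prompt text it received."""

    def complete(self, prompt: str) -> str:
        return f"A saw {len(prompt)} chars"


class StubModelB:
    """A second hypothetical model with the same interface."""

    def complete(self, prompt: str) -> str:
        return f"B saw {len(prompt)} chars"


def answer(model: TextModel, question: str, documents: List[str]) -> str:
    """The workflow depends only on the interface, so models can be swapped."""
    return model.complete(build_grounded_prompt(question, documents))
```

Because `answer` accepts anything with a `complete` method, replacing `StubModelA()` with `StubModelB()` requires no change to the surrounding workflow, which is the point of treating models as components.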

Emerging Patterns in Production

Data from over 500 documented industrial case studies reveals that organizations are iterating through specific architectural patterns based on their complexity needs:

| Pattern | Objective | Common Use Case |
|---|---|---|
| Direct Integration | Minimal latency | Basic copilots |
| RAG | Domain-specific accuracy | Industry classification, Search |
| Multi-Agent Systems | Complex reasoning | Automated problem solving |
| Human-in-the-Loop | Risk mitigation | Content moderation |

The Evolution of Design

The timeline of implementation demonstrates a shift from basic retrieval to advanced cognition. Following the initial RAG surge of early 2023, firms moved toward fine-tuning, and eventually, the deployment of Agentic Swarms. These "swarms" mimic natural behaviors, assigning distinct perspectives to multiple agents to collectively solve problems, often paired with Knowledge Graphs to ensure fact-oriented outputs.
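A toy version of a swarm paired with a knowledge graph might look like the sketch below. It rests on several assumptions not stated in the source: each agent proposes a fact as a (subject, relation, object) triple, the graph is a plain set of triples, and the swarm settles disagreements by majority vote among proposals the graph confirms. The agents are hard-coded functions standing in for model-backed perspectives.

```python
from collections import Counter
from typing import Callable, Dict, Optional, Set, Tuple

Triple = Tuple[str, str, str]
PerspectiveAgent = Callable[[str], Triple]

# Tiny illustrative knowledge graph stored as a set of triples.
KNOWLEDGE_GRAPH: Set[Triple] = {
    ("Lambda", "is_a", "compute service"),
    ("DynamoDB", "is_a", "database"),
}


def optimist(question: str) -> Triple:
    return ("DynamoDB", "is_a", "database")


def skeptic(question: str) -> Triple:
    return ("DynamoDB", "is_a", "database")


def contrarian(question: str) -> Triple:
    # Deliberately wrong proposal, to show the graph filtering it out.
    return ("DynamoDB", "is_a", "compute service")


PERSPECTIVES: Dict[str, PerspectiveAgent] = {
    "optimist": optimist,
    "skeptic": skeptic,
    "contrarian": contrarian,
}


def swarm_answer(
    question: str,
    agents: Dict[str, PerspectiveAgent],
    graph: Set[Triple],
) -> Optional[Triple]:
    """Collect one proposal per perspective, keep those the graph confirms,
    and return the most common survivor (None if nothing is confirmed)."""
    proposals = [agent(question) for agent in agents.values()]
    grounded = [p for p in proposals if p in graph]
    if not grounded:
        return None
    return Counter(grounded).most_common(1)[0][0]
```

Filtering before voting is a design choice made for this sketch: it means an unsupported claim cannot win even when most agents agree on it.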

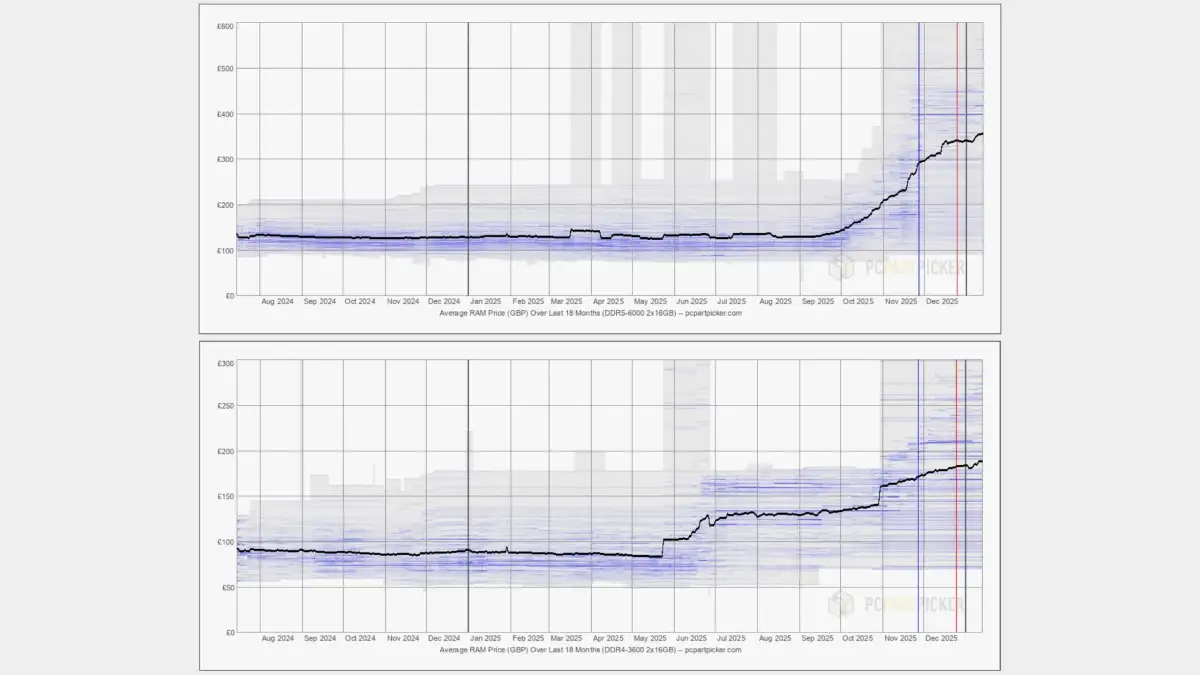


Analytical Reflection

This pivot reflects a broader fatigue with the 'bigger is better' narrative. As organizations integrate these tools into existing infrastructure, whether via Microsoft's Azure AI Foundry or custom AWS implementations, the focus remains on mitigating the inherent volatility of LLMs.

Evaluation is no longer an afterthought. Techniques like Red & Blue Team Dual-Model Evaluation are becoming standard, forcing developers to confront the reality that models are essentially "few-shot learners" requiring constant oversight. Reliability, in this context, is achieved by narrowing the scope of the model's autonomy and wrapping it in layers of cache, logic, and external verification.
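The layering described above (cache, logic, external verification) can be sketched as a thin wrapper around any model call. This is a minimal sketch under stated assumptions: the class name `GuardedModel`, the retry limit, and the lowercase cache key are illustrative choices, and the `model` and `verify` callables stand in for a real client and a real checker.

```python
from typing import Callable, Dict, Optional


class GuardedModel:
    """Wraps a model call in a cache, an input guard, and output verification."""

    def __init__(
        self,
        model: Callable[[str], str],
        verify: Callable[[str], bool],
        max_attempts: int = 2,
    ) -> None:
        self.model = model
        self.verify = verify
        self.max_attempts = max_attempts
        self.cache: Dict[str, str] = {}
        self.calls = 0  # number of real model invocations so far

    def ask(self, prompt: str) -> Optional[str]:
        key = prompt.strip().lower()
        # Logic layer: reject empty input without spending a model call.
        if not key:
            return None
        # Cache layer: reuse a previously verified answer.
        if key in self.cache:
            return self.cache[key]
        # Verification layer: accept only outputs the external check approves.
        for _ in range(self.max_attempts):
            self.calls += 1
            candidate = self.model(prompt)
            if self.verify(candidate):
                self.cache[key] = candidate
                return candidate
        # No verified answer within the attempt budget.
        return None
```

Only verified answers are cached, so a bad output is never replayed, and returning `None` gives the caller a clear signal to escalate, for example to a human reviewer.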