Recent documentation suggests Snap's Lens Studio is developing finer-grained control over hand tracking, letting developers manipulate and visualize virtual elements tied to specific hand joints. This points to a move beyond basic gesture recognition toward a more precise, programmatic interaction model for AR.

The HandVisuals interface, detailed in the Lens Scripting API, defines a structure for accessing and manipulating individual parts of a tracked hand. Developers can reference a SceneObject for each finger joint, with properties that range from thumbBaseJoint to pinkyTip and include joints such as middleKnuckle and indexUpperJoint. This level of detail enables fine-grained control over how virtual objects are positioned and animated relative to a user's hand. The interface also includes RenderMeshVisual references for both a full hand model and an optimized index/thumb model, suggesting options for visual representation and performance tuning.
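
As a rough illustration of what joint-level access makes possible, the sketch below models a hand as a plain object keyed by the joint names mentioned above and derives an anchor point for a virtual object. The `Vec3`, `JointLike` and `HandJoints` types and the helper functions are stand-ins invented for this example, not the Lens Studio API; in Lens Studio each joint would be a SceneObject and its position would come from that object's transform.

```typescript
// Hypothetical stand-ins so the sketch runs outside Lens Studio.
type Vec3 = { x: number; y: number; z: number };

interface JointLike {
  name: string;
  position: Vec3;
}

// Only the joints named in the article; the real interface lists more.
interface HandJoints {
  thumbBaseJoint: JointLike;
  indexUpperJoint: JointLike;
  middleKnuckle: JointLike;
  pinkyTip: JointLike;
}

// Euclidean distance between two points.
function distance(a: Vec3, b: Vec3): number {
  const dx = a.x - b.x;
  const dy = a.y - b.y;
  const dz = a.z - b.z;
  return Math.sqrt(dx * dx + dy * dy + dz * dz);
}

// Point halfway between two positions.
function midpoint(a: Vec3, b: Vec3): Vec3 {
  return { x: (a.x + b.x) / 2, y: (a.y + b.y) / 2, z: (a.z + b.z) / 2 };
}

// Anchor a virtual object halfway between two named joints.
function anchorBetween(
  hand: HandJoints,
  from: keyof HandJoints,
  to: keyof HandJoints
): Vec3 {
  return midpoint(hand[from].position, hand[to].position);
}

// Made-up joint positions, for illustration only.
const sampleHand: HandJoints = {
  thumbBaseJoint: { name: "thumbBaseJoint", position: { x: 0, y: 0, z: 0 } },
  indexUpperJoint: { name: "indexUpperJoint", position: { x: 2, y: 9, z: 0 } },
  middleKnuckle: { name: "middleKnuckle", position: { x: 3, y: 5, z: 0 } },
  pinkyTip: { name: "pinkyTip", position: { x: 6, y: 8, z: 0 } },
};

const anchor = anchorBetween(sampleHand, "thumbBaseJoint", "pinkyTip");
const span = distance(
  sampleHand.thumbBaseJoint.position,
  sampleHand.pinkyTip.position
);
console.log(anchor, span);
```

Keying the helper on joint names keeps the placement logic independent of which joints a particular effect cares about.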

Furthermore, the PinchDetectorConfig type alias introduces configuration specific to pinch gestures. It includes settings such as pinchDetectionSelection and pinchDownThreshold, which likely control how sensitively and how selectively pinch actions are recognized. The presence of an isTracked status and an onHandLost event points to a gesture detection system designed to give developers reliable feedback on hand presence and interaction state.
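
To make the role of a pinch threshold concrete, here is a minimal sketch of threshold-based pinch detection with hand-loss handling. It is not Snap's implementation: the `PinchConfigSketch` and `PinchDetectorSketch` names, the `pinchUpThreshold` field, and the assumption that pinch strength is a number between 0 and 1 are all invented for this example. Only the `pinchDownThreshold` name and the ideas of a tracked flag and a hand-lost event come from the documentation described above.

```typescript
// Hypothetical config: only pinchDownThreshold is named in the article.
interface PinchConfigSketch {
  pinchDownThreshold: number; // strength at or above which a pinch starts (assumed 0..1)
  pinchUpThreshold: number; // strength at or below which a pinch ends (assumption)
}

type PinchEvent = "pinchDown" | "pinchUp" | "handLost";

class PinchDetectorSketch {
  private config: PinchConfigSketch;
  private pinching = false;
  private wasTracked = false;

  constructor(config: PinchConfigSketch) {
    this.config = config;
  }

  // Feed one frame of data; returns the events that fired on this frame.
  update(isTracked: boolean, strength: number): PinchEvent[] {
    const events: PinchEvent[] = [];
    if (!isTracked) {
      // Report the loss once, then reset the pinch state.
      if (this.wasTracked) {
        events.push("handLost");
      }
      this.wasTracked = false;
      this.pinching = false;
      return events;
    }
    this.wasTracked = true;
    if (!this.pinching && strength >= this.config.pinchDownThreshold) {
      this.pinching = true;
      events.push("pinchDown");
    } else if (this.pinching && strength <= this.config.pinchUpThreshold) {
      this.pinching = false;
      events.push("pinchUp");
    }
    return events;
  }
}

const detector = new PinchDetectorSketch({
  pinchDownThreshold: 0.7,
  pinchUpThreshold: 0.4,
});

// Each frame is [isTracked, pinchStrength]; the values are made up.
const frames: Array<[boolean, number]> = [
  [true, 0.2],
  [true, 0.8],
  [true, 0.6],
  [true, 0.3],
  [true, 0.9],
  [false, 0],
];

const log: PinchEvent[] = [];
frames.forEach((frame) => {
  detector.update(frame[0], frame[1]).forEach((e) => {
    log.push(e);
  });
});
console.log(log.join(","));
```

Using separate down and up thresholds is a common way to stop a pinch from flickering when the measured strength hovers near a single cutoff; whether Lens Studio does this internally is not stated in the source.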

Scripting Underpins Interactivity

These hand tracking features are used through Lens Studio's scripting system. The 'Script Component' serves as the primary mechanism, binding 'Lens Events' to custom code. Developers can define 'Script Properties', variables that customize a script's behavior, and access them through the script object. This system allows for dynamic AR experiences that respond not just to broad gestures, but to specific hand poses and movements.

The Technical Framework

Lens Studio's scripting environment supports both JavaScript and TypeScript. The // @input directive is fundamental for declaring input fields, which become members of the script object. These input fields can be of various types, including SceneObject, string, float, and array variations, as well as component and asset types. This flexibility allows developers to link script logic directly to scene elements and configurable parameters.
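
The pattern described above, inputs declared on the script and an event bound to custom code, can be sketched as follows. So that the example runs outside Lens Studio, the `script` object here is a small stand-in written for this illustration, along with the `SceneObjectStub` type and the `handlers` table. In Lens Studio the `script` object is supplied by the Script Component, the `// @input` comments shown at the top would populate its fields, and the engine itself would fire the update event each frame.

```typescript
// In a Lens Studio JavaScript file, declarations like these would expose
// fields in the Inspector and attach them to `script`:
//   // @input SceneObject target
//   // @input float followSpeed = 0.5
//   // @input string label

// Hypothetical stand-ins so the sketch runs outside Lens Studio.
interface SceneObjectStub {
  name: string;
  x: number;
}
type Handler = () => void;

interface ScriptStub {
  target: SceneObjectStub;
  followSpeed: number;
  label: string;
  createEvent(eventType: string): { bind(handler: Handler): void };
}

// Registered handlers by event type (part of the stand-in, not the real API).
const handlers: { [eventType: string]: Handler[] } = {};

const script: ScriptStub = {
  target: { name: "Marker", x: 0 },
  followSpeed: 0.5,
  label: "demo",
  createEvent(eventType: string) {
    return {
      bind(handler: Handler): void {
        (handlers[eventType] = handlers[eventType] || []).push(handler);
      },
    };
  },
};

// Custom code bound to a Lens Event: each update, move the target
// a fraction of the remaining distance toward a goal position.
const goalX = 10;
script.createEvent("UpdateEvent").bind(() => {
  script.target.x += (goalX - script.target.x) * script.followSpeed;
});

// Simulate three frames; in Lens Studio the engine drives this.
for (let frame = 0; frame < 3; frame++) {
  (handlers["UpdateEvent"] || []).forEach((h) => h());
}
console.log(script.label, script.target.name, script.target.x);
```

Because the tunable values live on `script` rather than in the handler, the same logic can be reconfigured from the Inspector without touching the code.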

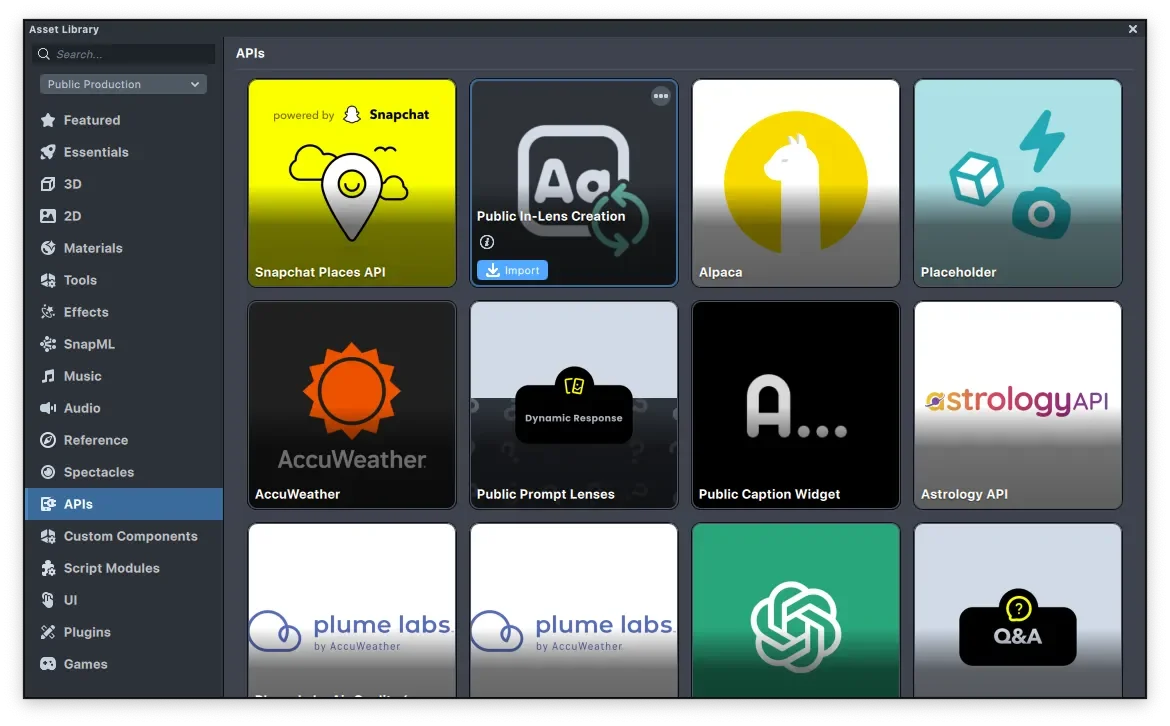

The platform also provides extensive API documentation, accessible through the 'Lens Scripting API' site and a 'Full API List'. This documentation outlines numerous classes and types, such as ObjectTracking3D and various gesture-related configurations, providing the building blocks for complex AR interactions. The availability of packages, like those found via a 'GitHub' repository for type definitions, further aids developers in building sophisticated applications.

A Shift in AR Interaction

Previously, AR interactions might have relied on simpler, less granular tracking methods. The depth of detail exposed by HandVisuals and PinchDetectorConfig suggests a deliberate enhancement of Snap's AR capabilities, aimed at letting virtual content respond to the real world, and to the user's hands in particular, with greater precision. This granular control could unlock new possibilities for augmented reality applications, from gaming and social filters to more utilitarian interfaces.
