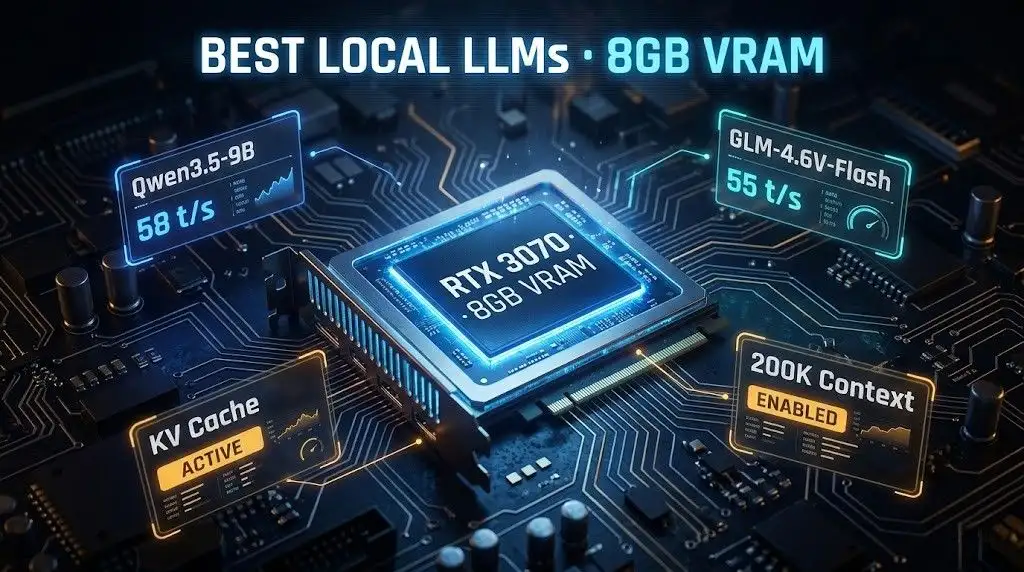

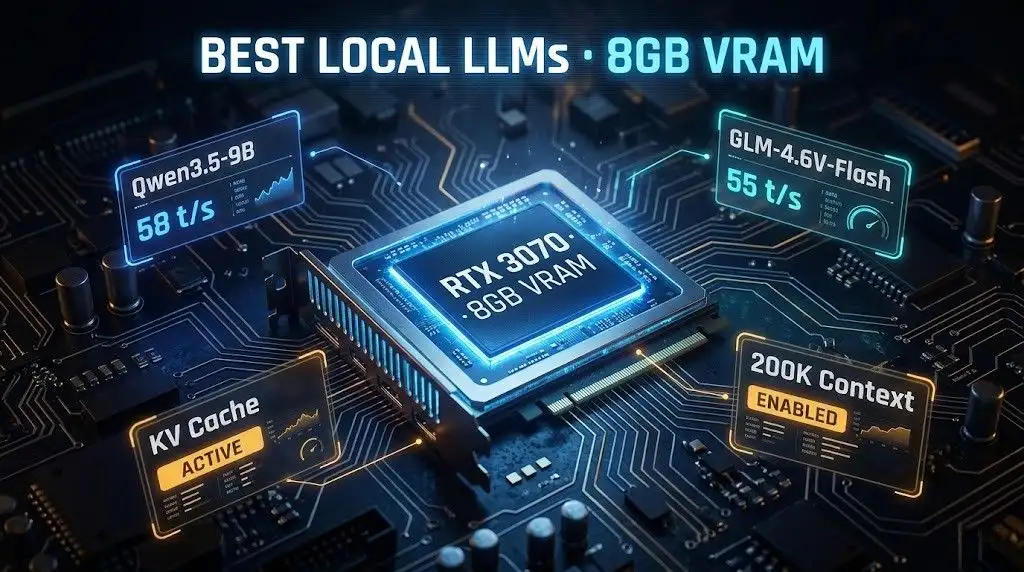

Recent reports highlight a persistent challenge for individuals seeking to run large language models (LLMs) locally: the VRAM limitations of consumer-grade hardware. Specifically, GPUs equipped with 8GB of VRAM present a bottleneck, forcing a recalibration of expectations and a reliance on optimization techniques.

The primary constraint for running LLMs on 8GB VRAM GPUs is the memory required to store the model weights and the KV cache. That memory scales with model size, quantization level, and context length. Fully loading larger models, even those with fewer than 10 billion parameters, often proves impossible, which forces strategies like partial offloading or the use of heavily quantized models.
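The KV cache term can be made concrete. For a decoder-only transformer with grouped-query attention, a common estimate is: 2 (keys and values) × layers × KV heads × head dimension × context length × bytes per element. The sketch below assumes a Llama-3-8B-like shape (32 layers, 8 KV heads, head dimension 128) and an FP16 cache. These values are illustrative assumptions and do not come from the reports above.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_len: int, bytes_per_elem: float = 2.0) -> float:
    """Estimate the KV cache size for a single sequence.

    The factor of 2 accounts for storing both keys and values
    at every layer for every token in the context window.
    """
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_elem


if __name__ == "__main__":
    # Assumed Llama-3-8B-like shape with an FP16 (2-byte) cache.
    size = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, context_len=8192)
    print(f"{size / 2**30:.2f} GiB")  # prints: 1.00 GiB
```

The cache grows linearly with context length. Doubling the context to 16,384 tokens therefore doubles this figure, and that alone can push an otherwise-fitting model past 8GB.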

Quantization and Offloading: The Core Strategies

The drive to fit LLMs onto 8GB VRAM GPUs centers on two main tactics: quantization and partial offloading.

Quantization: This process reduces the precision of the model's weights, which decreases their memory footprint. Lower-bit quantization yields larger VRAM savings. 4-bit (Q4) is frequently recommended for 8GB cards because it strikes a balance between memory usage and output quality.

An 8 billion parameter model requires approximately 4GB for its weights when quantized to 4-bit.
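The 4GB figure follows from simple arithmetic: weight memory ≈ parameter count × bits per weight ÷ 8. The minimal sketch below ignores quantization metadata. Real 4-bit formats also store per-block scales, so actual model files come out somewhat larger than this estimate.

```python
def weight_bytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone (no KV cache, no runtime overhead)."""
    return n_params * bits_per_weight / 8


if __name__ == "__main__":
    for bits in (16, 8, 4):
        gb = weight_bytes(8e9, bits) / 1e9
        print(f"8B params at {bits}-bit: ~{gb:.1f} GB")
    # 8B params at 16-bit: ~16.0 GB
    # 8B params at 8-bit: ~8.0 GB
    # 8B params at 4-bit: ~4.0 GB
```

This shows why an unquantized 16-bit 8B model cannot fit in 8GB at all. The 4-bit version, by contrast, leaves roughly half the card for the KV cache and runtime overhead.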

Partial Offloading: When a model's weights and KV cache exceed the available VRAM, some of its layers can be offloaded to system RAM and processed by the CPU. Tools like llama.cpp (using `-ngl` or `--gpu-layers`) and Ollama (using `num_gpu`) support this hybrid approach. It allows larger models to run, albeit with a performance penalty. For instance, a 14 billion parameter model might run some of its 48 layers on the GPU while the CPU processes the rest.
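Choosing a layer count for partial offloading is essentially a budgeting exercise. The sketch below uses a hypothetical helper, `max_gpu_layers`. It assumes all layers are the same size and that a fixed amount of VRAM must be reserved for the KV cache and runtime buffers. Real models also have embedding and output layers, so treat the result as a starting point for `-ngl` or `num_gpu` rather than an exact answer.

```python
import math


def max_gpu_layers(weights_bytes: float, n_layers: int,
                   vram_bytes: float, reserved_bytes: float) -> int:
    """Hypothetical helper: how many equal-sized layers fit in the VRAM left after a reserve."""
    per_layer = weights_bytes / n_layers
    available = max(vram_bytes - reserved_bytes, 0.0)
    # Never report more layers than the model actually has.
    return min(n_layers, math.floor(available / per_layer))


if __name__ == "__main__":
    # Assumed: 14B parameters at ~4 bits/weight -> ~7 GB of weights across 48 layers,
    # an 8 GB card, and 1.5 GB reserved for the KV cache and buffers.
    n = max_gpu_layers(weights_bytes=7e9, n_layers=48, vram_bytes=8e9, reserved_bytes=1.5e9)
    print(f"Offload {n} of 48 layers to the GPU; the rest run on the CPU")
    # prints: Offload 44 of 48 layers to the GPU; the rest run on the CPU
```

Under these assumptions, llama.cpp would receive this value as `-ngl 44`, and Ollama would take the same count through its `num_gpu` option.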

When the model and its associated caches exceed VRAM, performance can drop precipitously from potentially dozens of tokens per second to a mere 2-5 tokens per second.
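One way to see why spilling hurts so much: generating each new token requires reading roughly all of a dense model's weights, so speed is often limited by memory bandwidth. The rough estimate below assumes hypothetical bandwidths of 448 GB/s for GPU memory and 50 GB/s for system RAM. It ignores compute time, PCIe transfers, and synchronization, so it is an optimistic upper bound for illustration only.

```python
def tokens_per_second_upper_bound(gpu_bytes: float, cpu_bytes: float,
                                  gpu_bw: float, cpu_bw: float) -> float:
    """Bandwidth-only estimate: each token reads the GPU-resident and CPU-resident
    weights once, and the two portions are processed one after the other."""
    seconds_per_token = gpu_bytes / gpu_bw + cpu_bytes / cpu_bw
    return 1.0 / seconds_per_token


if __name__ == "__main__":
    GPU_BW, CPU_BW = 448e9, 50e9  # hypothetical bandwidths in bytes/s
    model = 7e9  # ~7 GB of quantized weights (assumed)
    for spilled in (0.0, 1e9, 3e9):
        tps = tokens_per_second_upper_bound(model - spilled, spilled, GPU_BW, CPU_BW)
        print(f"{spilled / 1e9:.0f} GB on CPU: <= {tps:.0f} tokens/s")
```

In this toy model, spilling even 1 GB roughly halves the estimate, because the slower memory pool dominates the per-token time. Real-world drops can be steeper once the overheads ignored here are included.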

Model Selection and Performance

The choice of LLM is directly dictated by the VRAM capacity.

For 8GB VRAM, models in the 7B to 8B parameter range, particularly when quantized, are generally considered the practical limit for comfortable operation.

Smaller models, in the 3B to 3.8B parameter range, are better suited to 6GB VRAM setups, while sub-2B models are recommended for 4GB cards.

Running larger models, like 34B parameter models, typically demands at least 16GB of VRAM, while 70B models require aggressive quantization and significant VRAM.

Practical performance tests indicate that 7B models on 8GB VRAM can reach 60-90+ tokens per second when the full model and its KV cache fit in VRAM. However, performance can vary significantly with the specific model, quantization method, and inference engine.
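Because results vary by model, quantization, and engine, it helps to measure throughput the same way each time. Ollama's non-streaming `/api/generate` response includes `eval_count` (generated tokens) and `eval_duration` (in nanoseconds), which together give generation speed. The sketch below works on an already-parsed response. The numbers in the sample dictionary are hypothetical, not measured.

```python
def generation_tokens_per_second(response: dict) -> float:
    """Compute decode speed from an Ollama /api/generate response.

    eval_count is the number of generated tokens and eval_duration is
    the time spent generating them, in nanoseconds.
    """
    duration_s = response["eval_duration"] / 1e9
    return response["eval_count"] / duration_s


if __name__ == "__main__":
    # Hypothetical response fields, for illustration only.
    sample = {"eval_count": 256, "eval_duration": 3_200_000_000}
    print(f"{generation_tokens_per_second(sample):.1f} tokens/s")  # prints: 80.0 tokens/s
```

This isolates generation speed from prompt processing, which Ollama reports separately as `prompt_eval_count` and `prompt_eval_duration`.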


Notable Models for 8GB VRAM

Several models have been identified as suitable for this hardware tier:

Qwen 1.5/2.5 7B (Quantized)

Orca-Mini 7B (Quantized)

DeepSeek R1 7B/8B (Quantized)

DeepSeek-Coder 6.7B (Quantized)

Gemma 7B (Quantized)

Benchmarking and Tools

Frameworks like Ollama offer simplified model management and GPU offloading, automatically moving excess layers to system RAM. llama.cpp provides more granular control over GPU layer offloading, enabling fine-tuning of partial offloading strategies.

The KV cache is a significant factor in VRAM usage during inference. Its optimization, including KV cache quantization, is an area of ongoing development for VRAM efficiency.
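To give a sense of what KV cache quantization buys, the sketch below compares per-element storage for some cache types. It assumes llama.cpp's block layouts:

- `q8_0` stores 32 int8 values plus one FP16 scale, for 34 bytes per 32 elements.
- `q4_0` stores 32 4-bit values plus one FP16 scale, for 18 bytes per 32 elements.

llama.cpp selects cache types through its `--cache-type-k` and `--cache-type-v` options. Quantizing the V cache may require other settings, such as flash attention.

```python
# Bytes per element for some KV cache storage types, assuming llama.cpp-style
# block layouts: each q8_0/q4_0 block holds 32 values plus one 2-byte FP16 scale.
BYTES_PER_ELEMENT = {
    "f16": 2.0,
    "q8_0": (32 * 1 + 2) / 32,    # 34 bytes per 32 elements
    "q4_0": (32 // 2 + 2) / 32,   # 18 bytes per 32 elements
}


def kv_savings_vs_f16(cache_type: str) -> float:
    """Fraction of FP16 KV cache memory saved by storing the cache as cache_type."""
    return 1.0 - BYTES_PER_ELEMENT[cache_type] / BYTES_PER_ELEMENT["f16"]


if __name__ == "__main__":
    for t in ("q8_0", "q4_0"):
        print(f"{t}: ~{kv_savings_vs_f16(t):.0%} smaller than f16")
    # q8_0: ~47% smaller than f16
    # q4_0: ~72% smaller than f16
```

The trade-off is that lower-precision caches can degrade output quality, so these savings are not free.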

Background Context: The LLM Landscape

The proliferation of LLMs has outpaced the widespread availability of high-end consumer hardware. This has created a segment of users interested in running these powerful tools offline for privacy, cost, or customization reasons. The drive to optimize LLM inference on lower-specification machines is a direct response to this demand.

VRAM bottlenecks are identified as the primary obstacle, affecting both model weight storage and intermediate computations during inference.

Memory bandwidth remains a critical factor as model sizes continue to increase.

Integrated GPUs (iGPUs), which utilize system RAM, also face VRAM constraints, though often with lower baseline performance compared to discrete GPUs.

The choice between a purely CPU-based approach, a fully GPU-bound execution, or a hybrid CPU+GPU method is crucial for managing performance and VRAM limitations. Running with low GPU usage in a "spill scenario" (where VRAM is exceeded) can, in some cases, be worse than relying solely on the CPU.