LATEST: User Experience Grinds to a Halt

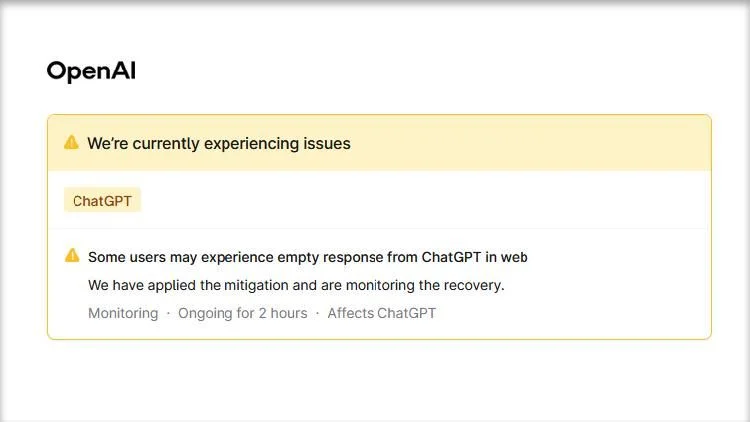

OpenAI's core API infrastructure suffered a significant disruption on April 6th, causing widespread problems for users who rely on its services. The /v1/responses API endpoint stopped functioning at around 18:07 UTC+8. Although the company said the problem was "largely mitigated" by 18:23 UTC+8, reports suggest that intermittent failures and degraded performance continued across various regions.

During the incident, users reported empty responses from ChatGPT and error messages when calling API functionality, including the 'gpt-4o' and 'gpt-4.1-mini' models. The outage continues a pattern of service instability for OpenAI this year, following a more extensive disruption in February that affected a substantial number of users.

The interruption manifested in several ways, frustrating developers and end users alike. Customers reported that ChatGPT's web interface returned empty outputs even though it appeared to have generated a response. For API integrations, common errors included "Server not responding" as well as failures specific to streaming runs. The problems were not confined to a single region: users reported failures from diverse locations, including Selangor, Malaysia, and West Java, Indonesia.

A Pattern of Instability?

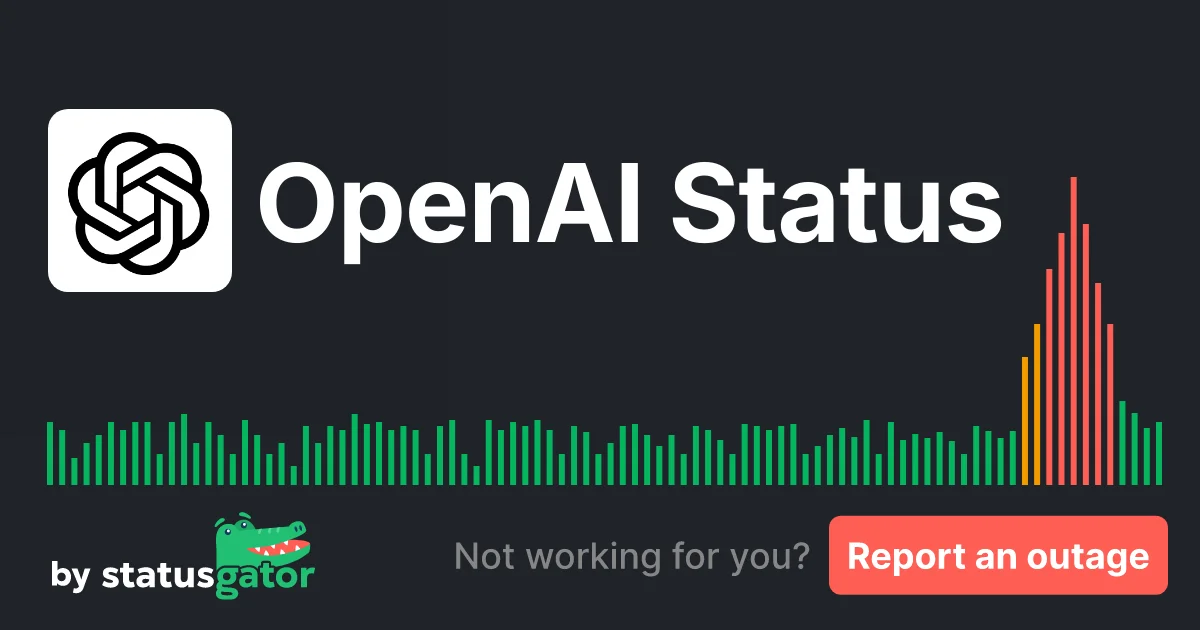

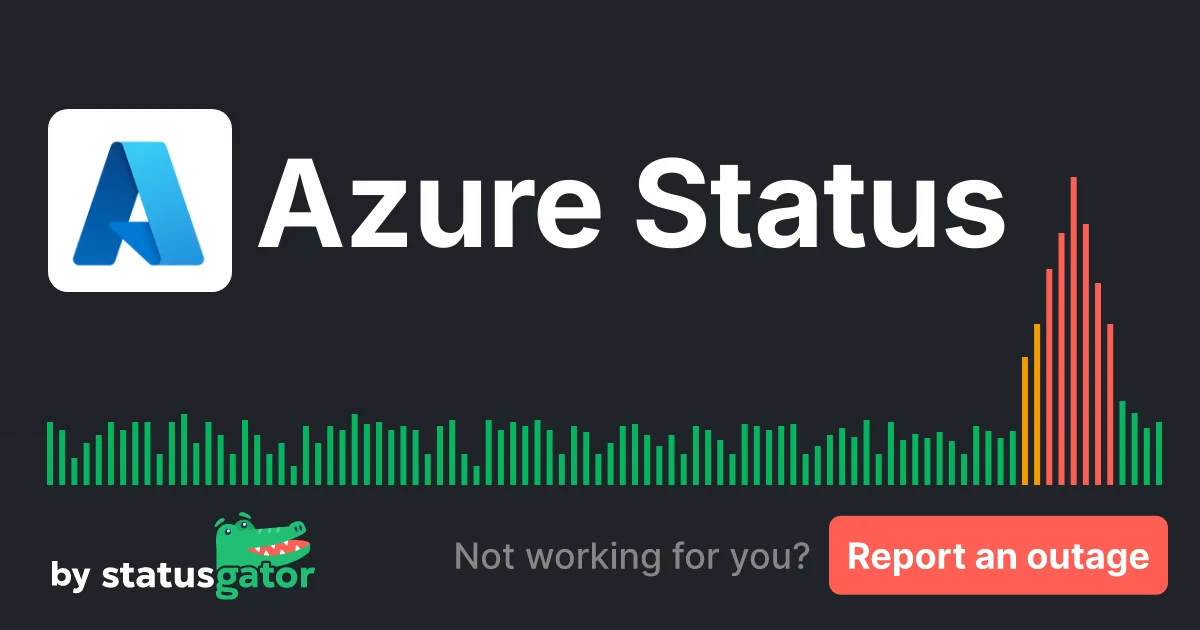

This latest API disruption adds to a growing list of operational hiccups for the AI giant. Beyond the April 6th incident, multiple sources point to ongoing, if less severe, performance issues. Status monitoring sites such as StatusGator have logged recurring periods of server non-responsiveness and connectivity problems across various OpenAI services. These issues appear to span several products, including ChatGPT and Codex, as well as Microsoft's Azure OpenAI Service.

JUST IN: The Ever-Expanding OpenAI Ecosystem

The instability comes as OpenAI continues to rapidly expand its model lineup. Recent additions to the API include the 'gpt-4.1', 'gpt-4.1-mini', and 'gpt-4.1-nano' variants, alongside the 'gpt-image-1' model. The introduction of the 'GPT-5' family ('gpt-5', 'gpt-5-mini', and 'gpt-5-nano'), along with specific versions such as 'gpt-5.4 mini' and 'gpt-5.4 nano', points to a relentless pace of development and deployment. OpenAI touts these newer models for improved capabilities in coding, professional workflows, and specialized tasks.

UPDATE: Market Undercurrents and Corporate Messaging

Amid these technical challenges, OpenAI continues its outreach on broader policy fronts, calling for discussion of how to ensure AI benefits everyone. This messaging comes against a backdrop of general market volatility, with discussions on platforms such as Binance Square reflecting anxiety about global economic shifts and geopolitical events. While corporate messaging emphasizes long-term benefits and policy, the recurring API disruptions underscore the fragility of the infrastructure behind these advanced AI systems. The gap between ambitious development announcements and the frequent need to address service degradation complicates the narrative around OpenAI's operational reliability.