Institutions are tightening the leash on machine mimicry in the classroom as data suggests an uneven trade-off between speed and cognitive function. Sharanappa Halse, Vice-Chancellor of Karnataka State Open University (KSOU), recently warned that research must remain a product of human labor rather than software-fed loops.

"Students should prepare their research work through their own hard work… instead of excessively depending on AI tools." — Sharanappa Halse

The University Grants Commission (UGC) has responded to this shift by making Research Ethics a mandatory subject for scholars. While 345 students joined research tracks at KSOU this year, the administrative push reflects a broader fear: the "cognitive paradox," in which software handles the heavy lifting and the student’s own mental muscles atrophy.

The Cost of Convenience

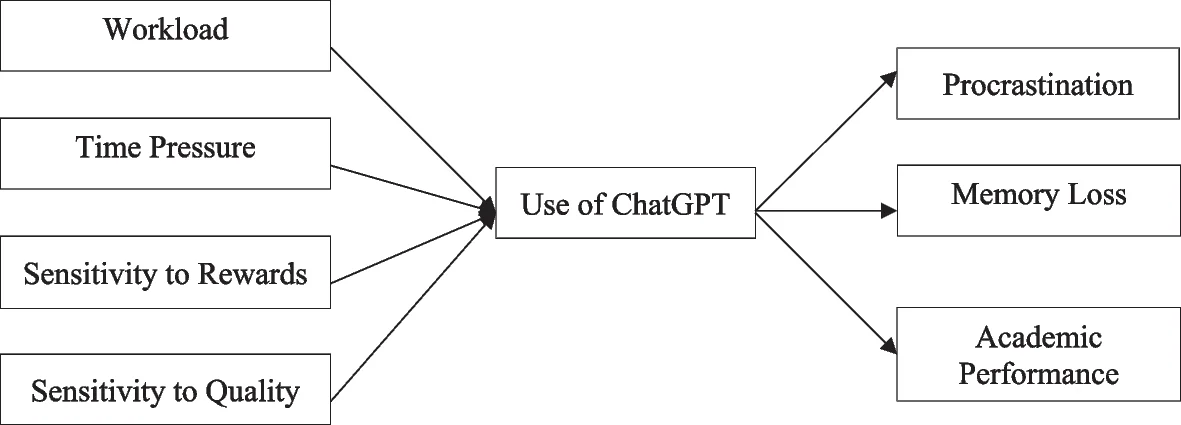

Recent investigations into the student-machine relationship reveal that frequent users of tools like ChatGPT report memory loss and diminished academic performance. The friction required for learning is being smoothed over by algorithms, leading to a state where students "rely on rather than learn from" the systems.

Cognitive Load: While software can lower the "overload" of data, it often kills active engagement. If the machine organizes the thoughts, the student fails to develop self-regulation.

Memory Gaps: A study in the International Journal of Educational Technology found a direct link between high-frequency AI use and memory impairment.

The Mastery Divide: There is a thin line between using software to augment knowledge (a mastery approach) and using it to bypass the struggle of understanding.

Divergent Perspectives on "Integrity"

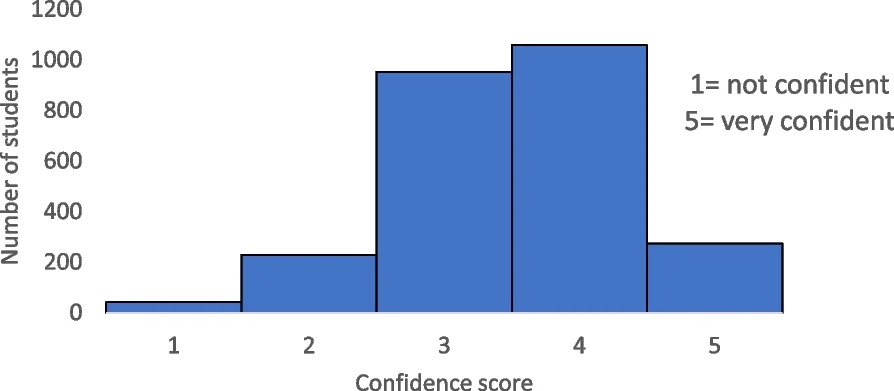

Students and faculty do not see the machine through the same lens. Survey data shows a fractured consensus on what constitutes "cheating" versus "assistance."

| Use Case | Student Acceptance | Institutional Stance |
|---|---|---|
| Grammar Correction | High | Generally Allowed |
| Research Sourcing | Moderate | Cautioned/Regulated |
| Essay Drafting | Low | Prohibited/Flagged |
| Critical Analysis | Low | High Risk of Erosion |

Current academic integrity reporting is struggling to keep up with the asymmetrical ways students hide or disclose their use of these tools. Some students use the software to "construct knowledge," while others use it to generate a ghost-written facade that lacks the weight of actual critical thinking.

The Well-being Factor and Inequality

Beyond the grades, the digital dependency is leaking into the well-being of the academic community. The rise of machine-assisted learning is exacerbating educational inequality, as access to high-tier tools creates a gap between those who can afford "cognitive shortcuts" and those who cannot.

Privacy leaks and data security remain "underexplored" threats in the rush to adopt these systems.

Psychological impacts include a potential loss of autonomy; when the machine suggests every word, the "human voice" becomes a hollow echo of a training set.

Background: The Institutional Pivot

The shift toward teaching "Research Ethics" is a reactive move by bodies like the UGC. For decades, "hard work" was an assumed part of the degree-seeking ritual. The sudden availability of generative models has turned the "process" of research into a commodity that can be purchased or prompted. Educators are now forced to define what "original thought" actually looks like in a world where the easy button is always visible.
