Instagram is introducing a new feature that will alert parents if their teenage children repeatedly search for content related to suicide or self-harm on the platform. This change comes as Meta, Instagram's parent company, faces ongoing legal scrutiny regarding the impact of social media on young users' mental well-being. The feature is intended to inform parents about concerning search patterns and provide them with resources to support their children.

Ongoing Scrutiny and Platform Safety

Meta is currently involved in multiple legal proceedings. A significant trial in Los Angeles is examining allegations that platforms like Instagram and YouTube are intentionally designed to addict young users. Instagram is introducing the new alerts against this backdrop.

The new alerts are part of Meta's broader efforts to enhance safety features for young users.

The move follows questioning of Meta CEO Mark Zuckerberg about the company's practices toward its younger users and its efforts to increase engagement.

Feature Rollout and Functionality

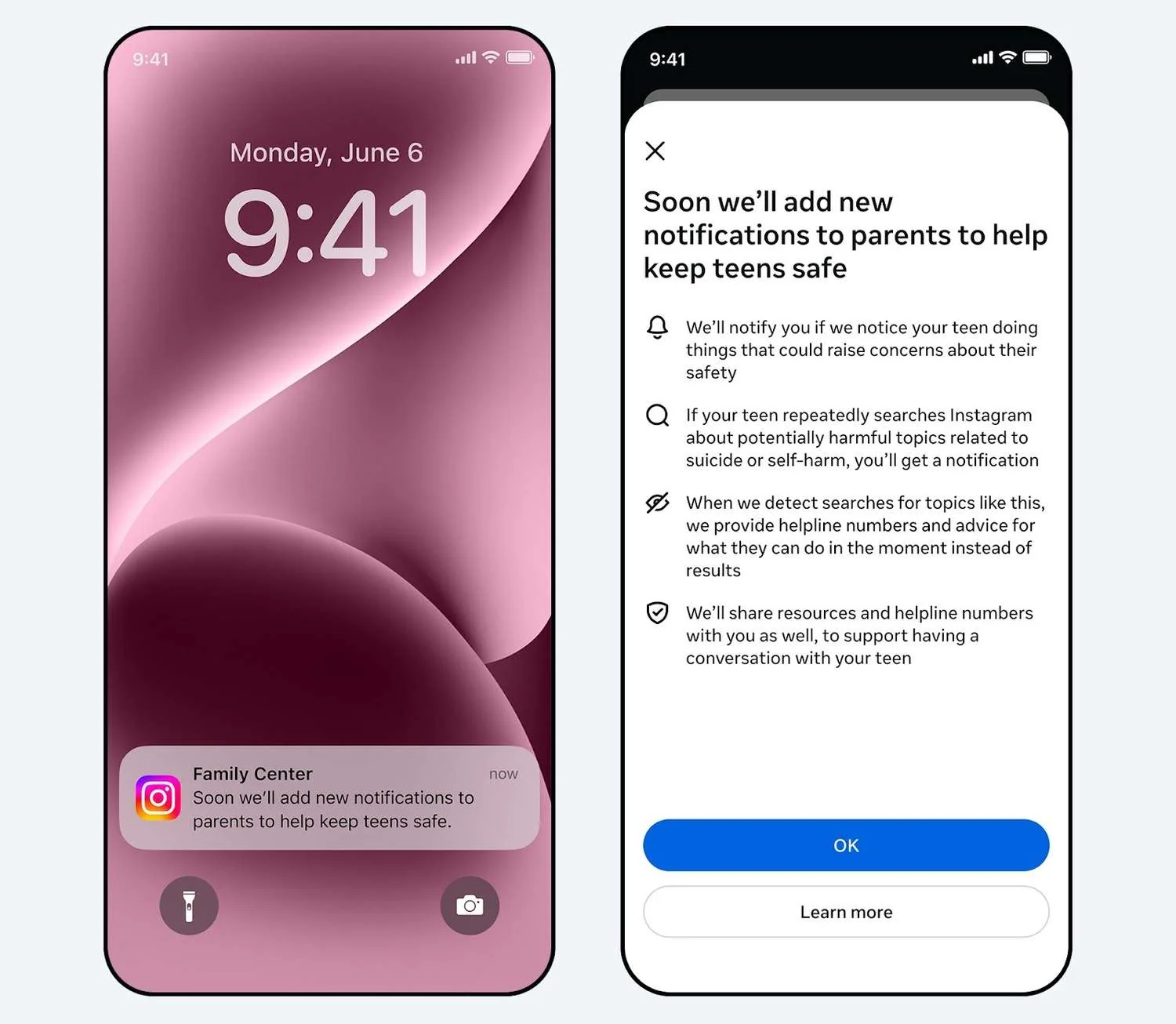

The new parental alert system will be introduced progressively. Parents and teens will need to be enrolled in Instagram's existing parental supervision tools for the alerts to be activated.


Triggering the Alert: Parents will receive a notification if a teen repeatedly searches for specific terms related to suicide or self-harm within a short timeframe.

Notification Method: Alerts will be delivered via email, text message, WhatsApp, or an in-app notification.

Content of Alert: The message will inform parents about their teen's search activity and offer guidance and resources for approaching sensitive conversations about mental health.

Geographic Rollout: The feature is set to begin rolling out next week in the U.S., U.K., Australia, and Canada, with plans for a wider global release later in the year.

Future AI Integration

Meta has stated that similar parental alerts are planned for its AI experiences in the future.

These alerts would notify guardians if a teen attempts to engage in conversations about suicide or self-harm with Meta's AI.

This development comes amidst growing concerns about AI chatbots potentially offering harmful mental health advice.

Expert and Advocate Perspectives

While the new feature aims to increase parental awareness, some individuals and organizations have expressed reservations.

Supportive Aspect: The alerts are designed to equip parents with information and resources to support their children.

Criticism: Ian Russell, father of Molly Russell and founder of the Molly Rose Foundation, has voiced skepticism. He has described the plan as "clumsy" and "fraught with risk," suggesting it could cause parental panic.

Advocacy Concerns: Russell believes Meta should first address its algorithms, which he claims may still recommend harmful content, before relying on parental alerts, which he suggests shift responsibility onto parents.

Meta's Defense: Meta has contested claims that its current safety measures are insufficient in limiting teenagers' exposure to harmful content on the app.

Evidence

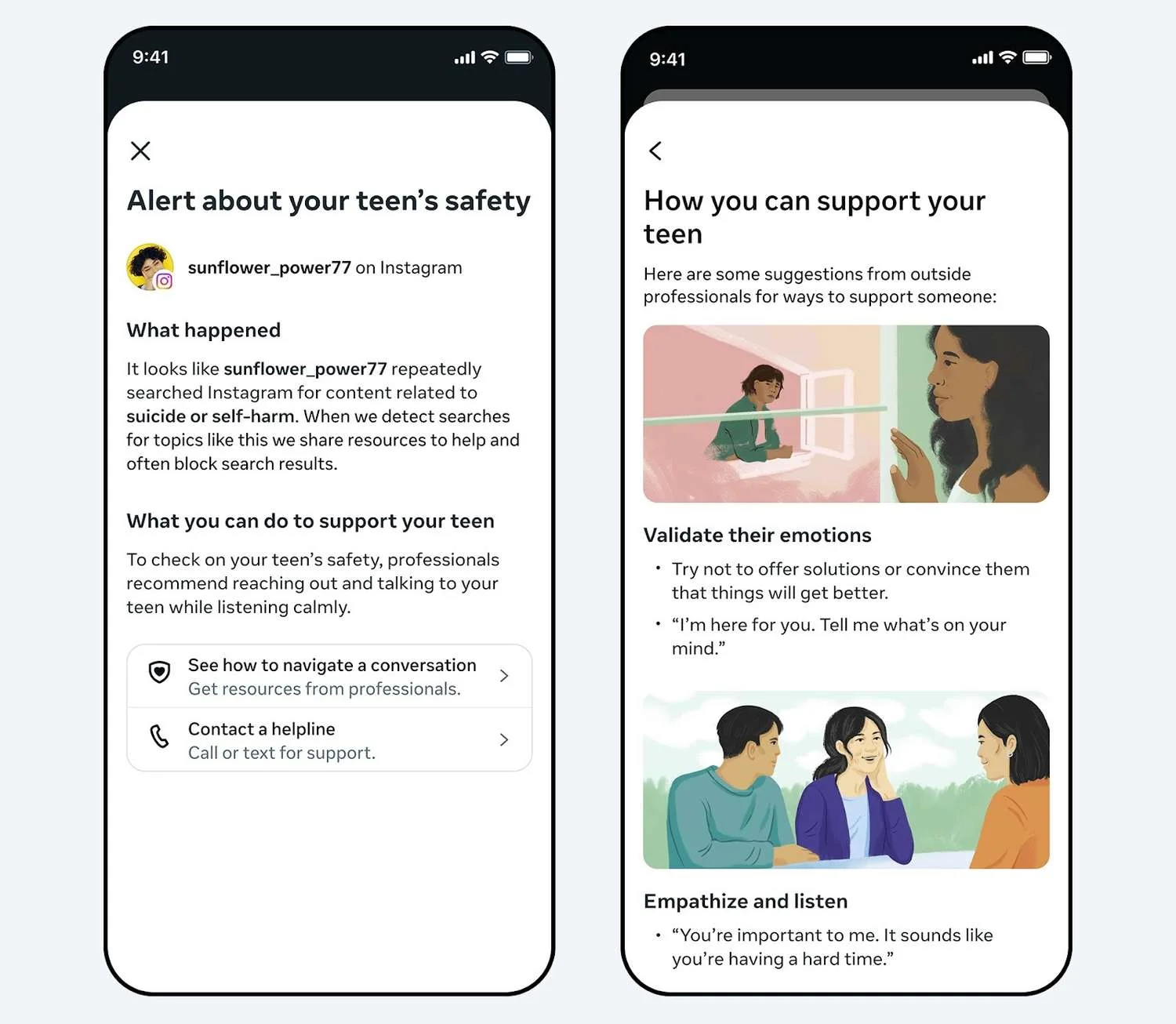

Instagram's Stated Policy: Instagram's policy currently involves blocking users from searching for suicide and self-harm content and directing them to external resources.

Parental Supervision Enrollment: The alert system requires both parents and teens to be enrolled in Instagram's parental supervision tools.

Alert Trigger: Alerts are activated when a teen "repeatedly" searches for terms related to suicide or self-harm within a "short time span." Meta has not publicly specified the exact threshold, though the company says it "errs on the side of caution."

Future AI Alerts: Meta plans to extend similar alerts to interactions with its AI chatbots concerning suicide or self-harm.

Legal Context: The feature is being introduced during ongoing trials that accuse Meta and other tech companies of designing platforms to be addictive to young users.

Parental Guidance and Resources

When parents receive an alert, they will be presented with information designed to help them initiate conversations with their children.

The notifications will include expert-backed advice and resources.

These materials are intended to assist parents in navigating sensitive discussions about mental health.

Parents are also advised to consult with their child's healthcare provider for additional support.

Context of Legal Challenges

Instagram's announcement comes amid a significant legal battle.

More than 1,600 plaintiffs, including families and school districts, are suing Instagram, YouTube, TikTok, and Snap.

They allege that these platforms are deliberately engineered to be addictive for young users.

During court appearances, Meta CEO Mark Zuckerberg expressed a wish that the company had acted sooner to identify underage users and improve safety measures.

Conclusion

Instagram's new feature aims to provide parents with an early warning system regarding potential distress signals from their teenage children based on their search activity. The company asserts this measure is an enhancement to its existing safety protocols, including content blocking and directing users to support resources. However, the effectiveness and potential impact of these alerts are subjects of debate, with some advocates expressing concern about parental preparedness and Meta's broader responsibility for algorithmic content recommendation. The rollout of these alerts, alongside planned AI integrations, signals Meta's continued efforts to navigate the complex landscape of child safety on its platforms amidst intense legal and public scrutiny.


Sources Used:

CNBC: https://www.cnbc.com/2026/02/26/instagram-parent-alerts-teen-suicide-meta-trial.html

Summary of how the alert works, parental supervision requirement, future AI alerts, and ongoing trials.

CBS News: https://www.cbsnews.com/news/instagram-meta-teen-safety-suicide-self-harm-safeguards/

Details on alert triggers, delivery methods, and the context of Zuckerberg's questioning.

TechCrunch: https://techcrunch.com/2026/02/26/instagram-now-alerts-parents-if-their-teen-searches-for-suicide-or-self-harm-content/

Confirmation of the feature's launch timing and its role alongside existing content blocking.

Parents.com: https://www.parents.com/instagram-alerts-for-suicide-and-self-harm-11914752

Focus on the expert-backed advice accompanying the alerts for parents.

The Verge: https://www.theverge.com/tech/885110/meta-instagram-parent-alert-teen-self-harm-suicide

Highlights the "repeatedly" aspect of searches and the upcoming AI chatbot alerts.

Details on the rollout regions and a direct quote of skepticism from Molly Russell's father.

Meta Newsroom: https://about.fb.com/news/2026/02/new-meta-alerts-let-parents-know-if-teen-may-need-support/

Official announcement with details on alert triggers and future AI integration.

RTE.ie: https://www.rte.ie/news/ireland/2026/0226/1560546-instagram-suicide-terms/

Reinforces the repeated search criteria and the ongoing safety feature development.

Forbes: https://www.forbes.com/sites/maryroeloffs/2026/02/26/instagram-will-alert-parents-if-teens-search-for-suicide-self-harm-content/

Includes detailed criticism from the Molly Rose Foundation and the company's stance on its algorithms.

NBC News: https://www.nbcnews.com/tech/social-media/instagram-will-alert-parents-teens-repeated-suicidal-self-harm-searche-rcna260789

Confirms the alert mechanism and mentions the AI tool conversations and ongoing legal actions.

9to5Mac: https://9to5mac.com/2026/02/26/instagram-will-notify-parents-if-their-child-searches-for-self-harm-content/

Mentions Zuckerberg's court testimony about acting sooner and the use of available contact information for parents.

The Beat DFW: https://thebeatdfw.com/4520793/instagram-to-alert-parents-about-teens-self-harm-searches/

Emphasizes that this is a proactive notification and lists the initial rollout regions.