Digital interaction is undergoing a structural migration: autonomous AI agents are bypassing traditional graphical user interfaces to interact directly with backend API gateways. As of May 17, 2026, the primacy of the browser-based "storefront" is declining, replaced by the necessity of machine-readable data architecture.

The Mechanism of Machine Interaction

Software developers are shifting focus from aesthetic user portals to robust OpenAPI specifications. Because AI agents do not perceive color, layout, or branding, they rely on documentation and endpoint schemas to decide what to call and how.

Semantic Precision: Agents rely on precise definitions within an API schema. If an endpoint's purpose and parameters cannot be parsed quickly and unambiguously, the agent is likely to move to a more readable endpoint.

Structural Standardization: Tools like the Model Context Protocol (MCP) are being deployed to normalize how agents discover and execute tools. By mapping API routes to MCP tools, developers provide a standardized path for agents to perform commerce or data retrieval.

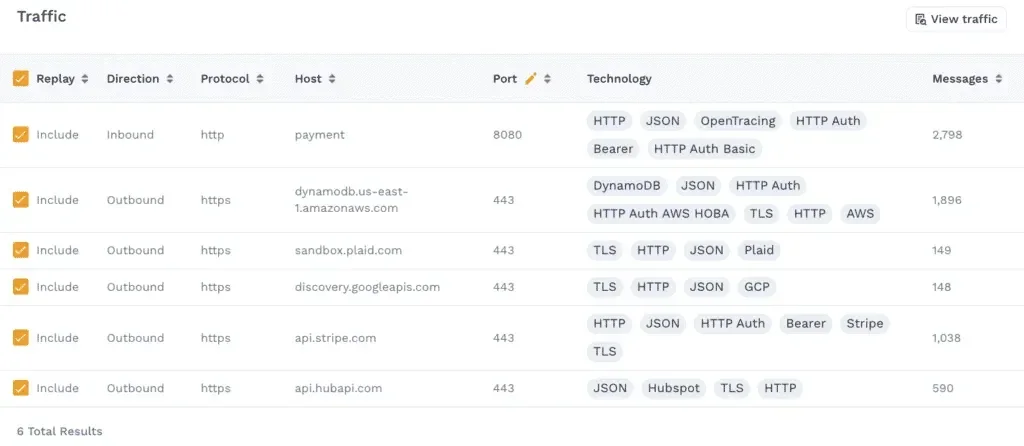

Identity Verification: Unlike legacy bot filtering, which relies on simple `robots.txt` or User-Agent headers, modern infrastructure requires cryptographically verified agent identity to differentiate between benign utility and malicious data scraping.
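The route-to-tool mapping described under Structural Standardization can be illustrated with a minimal sketch. It assumes a tool descriptor carries a `name`, a `description`, and a JSON Schema `inputSchema`, which is the general shape MCP uses for tool listings; it does not use any MCP SDK. The function name `openapi_to_tools`, the extra `route` field, and the example product catalog spec are hypothetical and not taken from the article.

```python
from typing import Any, Dict, List

HTTP_METHODS = {"get", "post", "put", "patch", "delete"}


def openapi_to_tools(spec: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Turn each OpenAPI operation into one tool descriptor."""
    tools = []
    for path, item in spec.get("paths", {}).items():
        for method, op in item.items():
            if method not in HTTP_METHODS:
                continue  # skip path-level keys such as "parameters"
            properties = {}
            required = []
            for param in op.get("parameters", []):
                # Fall back to a string schema when none is declared.
                properties[param["name"]] = param.get("schema", {"type": "string"})
                if param.get("required", False):
                    required.append(param["name"])
            fallback_name = f"{method}_{path.strip('/').replace('/', '_')}"
            tools.append({
                "name": op.get("operationId") or fallback_name,
                "description": op.get("summary", ""),
                "inputSchema": {
                    "type": "object",
                    "properties": properties,
                    "required": required,
                },
                # Hypothetical extra field so a dispatcher knows which
                # HTTP route to call; not part of the tool listing itself.
                "route": {"method": method.upper(), "path": path},
            })
    return tools


# Hypothetical catalog API used only for illustration.
EXAMPLE_SPEC = {
    "paths": {
        "/products/{sku}": {
            "get": {
                "operationId": "get_product",
                "summary": "Fetch one product by SKU.",
                "parameters": [
                    {"name": "sku", "in": "path", "required": True,
                     "schema": {"type": "string"}},
                    {"name": "currency", "in": "query",
                     "schema": {"type": "string"}},
                ],
            }
        }
    }
}

tools = openapi_to_tools(EXAMPLE_SPEC)
```

Here `tools` holds a single descriptor named `get_product` whose input schema requires `sku` and accepts an optional `currency`. A real server would also need to handle request bodies, authentication, and response schemas, which this sketch leaves out.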

| Strategy | Legacy Web Approach | Agentic Web Approach |
|---|---|---|
| User Interface | Human-Centric (Visual) | Data-Centric (Structured) |
| Conversion | Marketing Copy / CTA | API Payload / Endpoint Utility |
| Bot Management | Rate Limiting / CAPTCHA | Identity Token / Cryptographic Auth |

The "Workflow" Paradigm

The contemporary view among systems architects is that websites are rapidly becoming execution workflows rather than destination hubs.

"Your customers won’t visit your site. Data Architecture Audit—your product catalog is your new storefront." — Reflective analysis on the Agentic Web

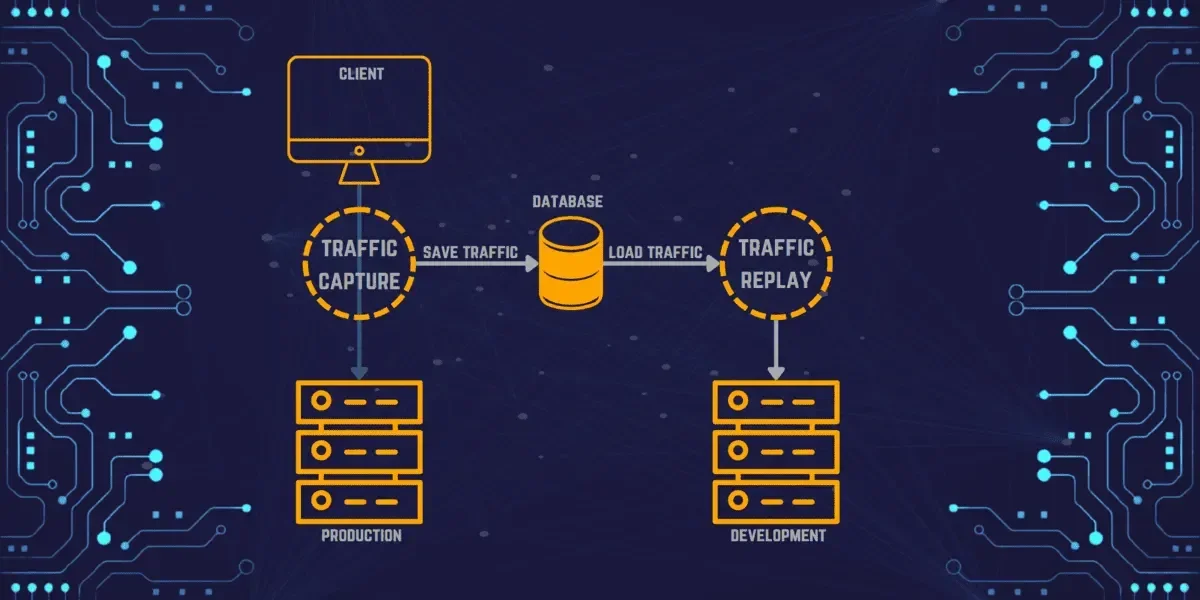

For firms like Speedscale and Superface.ai, this represents an escalation in operational stress. AI agents do not merely "read" content; they utilize APIs to perform actions, test system integrity, and automate procurement. This exposes internal infrastructure to automated load and edge-case testing at scales that legacy human traffic rarely achieved.

Observability and Defensive Measures

As these autonomous actors become pervasive, the industry is standardizing on observability platforms. New tooling focuses on monitoring agentic traffic patterns to ensure that automated "shoppers" or "workers" do not degrade backend stability.
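As a rough illustration of monitoring agentic traffic patterns, the sketch below keeps a sliding window of request timestamps per agent and reports when an agent exceeds a request budget. The class name `AgentTrafficMonitor`, the window length, and the budget are all hypothetical choices, not something the article or any specific observability platform prescribes.

```python
import time
from collections import defaultdict, deque
from typing import Optional


class AgentTrafficMonitor:
    """Sliding-window request counter keyed by agent identifier."""

    def __init__(self, window_seconds: float = 60.0, max_requests: int = 100):
        self.window_seconds = window_seconds
        self.max_requests = max_requests
        self._hits = defaultdict(deque)  # agent_id -> request timestamps

    def record(self, agent_id: str, now: Optional[float] = None) -> bool:
        """Record one request; return True while the agent is within budget."""
        if now is None:
            now = time.monotonic()
        hits = self._hits[agent_id]
        hits.append(now)
        # Drop timestamps that have aged out of the window.
        cutoff = now - self.window_seconds
        while hits and hits[0] <= cutoff:
            hits.popleft()
        return len(hits) <= self.max_requests


monitor = AgentTrafficMonitor(window_seconds=10.0, max_requests=2)
within_budget = [monitor.record("agent-a", now=t) for t in (0.0, 1.0, 2.0)]
```

With a budget of two requests per ten seconds, the third call for `agent-a` returns `False`. In practice the return value would feed an alert or a throttle rather than a plain list, and the counters would live in shared storage instead of process memory.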

Credential Verification: Implementation of `agent_token` verification to ensure automated actors are identifiable.

Structured Discovery: Providing machine-readable discovery mechanisms is now mandatory for platforms that wish to remain within the discovery funnel of AI shopping assistants.

Compliance: While some entities (like OpenAI) signal compliance with standard exclusion files, the diversity of independent agents (e.g., DeepSeekBot, Crawl4AI) necessitates a more rigorous, code-based enforcement of access controls.
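The article does not specify what an `agent_token` looks like, so the following is only a sketch of code-based enforcement under a stated assumption: the token is the agent identifier followed by a dot and an HMAC-SHA256 signature computed with a secret shared between the platform and the agent operator. The function names and token layout are hypothetical; a production system might prefer asymmetric signatures so that the platform never holds the signing key.

```python
import hashlib
import hmac
from typing import Optional


def _sign(agent_id: str, secret: bytes) -> str:
    """Hex HMAC-SHA256 of the agent identifier."""
    return hmac.new(secret, agent_id.encode("utf-8"), hashlib.sha256).hexdigest()


def issue_agent_token(agent_id: str, secret: bytes) -> str:
    """Build a token of the assumed form '<agent_id>.<hex signature>'."""
    return f"{agent_id}.{_sign(agent_id, secret)}"


def verify_agent_token(agent_token: str, secret: bytes) -> Optional[str]:
    """Return the agent id if the signature checks out, otherwise None."""
    agent_id, sep, signature = agent_token.rpartition(".")
    if not sep or not agent_id:
        return None  # malformed token: no separator or empty identifier
    expected = _sign(agent_id, secret)
    # Constant-time comparison avoids leaking how many characters matched.
    if hmac.compare_digest(signature.encode("utf-8"), expected.encode("utf-8")):
        return agent_id
    return None


SECRET = b"demo-secret-for-illustration-only"
token = issue_agent_token("crawler-42", SECRET)
verified_id = verify_agent_token(token, SECRET)
```

A request handler could call `verify_agent_token` before routing and reject any request for which it returns `None`, rather than trusting a self-declared User-Agent string.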

The trajectory suggests that for an enterprise, the "front door" is no longer a landing page, but the documentation provided to a model's context window. Organizations failing to standardize their data architecture for machine parsing risk systemic exclusion from the emerging automated marketplace.