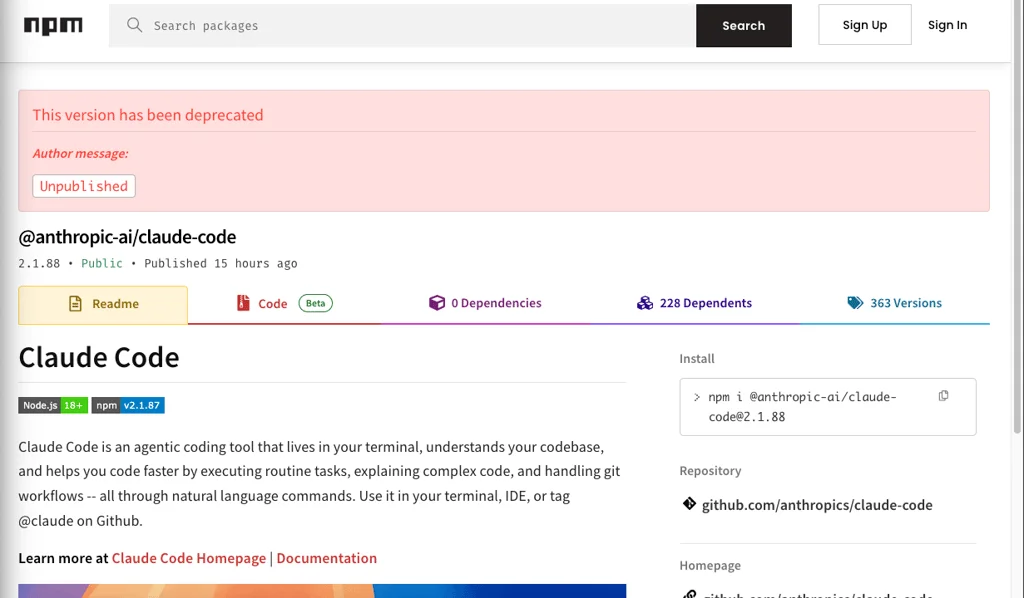

Anthropic, a prominent artificial intelligence company, is back in the spotlight after accidentally leaking the internal source code of its coding assistant, Claude Code. The incident is the company's second significant data exposure in less than a week: more than 512,000 lines of code were unintentionally released through an official npm package.

The exposed material covers Claude Code, a command-line interface (CLI) tool for developers, not the core AI models themselves. The leak occurred because a source map file, which exists to connect bundled code back to its original source, contained the unobfuscated TypeScript code. The oversight effectively provides a roadmap to how Anthropic built its popular coding assistant, a potential advantage for competitors and other software developers.
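To illustrate the mechanism in general terms: the Source Map v3 format allows a map to carry the full text of each original file in an optional `sourcesContent` array, parallel to `sources`. The sketch below assumes a map of that kind; the report does not say exactly how the published map was structured, and `extractEmbeddedSources` and `exampleMap` are hypothetical names and data used only for illustration.

```typescript
// Minimal shape of a Source Map v3 document (only the fields used here).
interface SourceMapV3 {
  version: number;
  sources: string[];
  sourcesContent?: (string | null)[];
  mappings: string;
}

// Return every original file whose full text is embedded in the map.
function extractEmbeddedSources(mapJson: string): Map<string, string> {
  const map = JSON.parse(mapJson) as SourceMapV3;
  const recovered = new Map<string, string>();
  (map.sourcesContent ?? []).forEach((content, i) => {
    const name = map.sources[i];
    // Entries may be null when a file's text was not embedded.
    if (typeof content === "string" && name !== undefined) {
      recovered.set(name, content);
    }
  });
  return recovered;
}

// Hypothetical example map, not taken from any real package.
const exampleMap = JSON.stringify({
  version: 3,
  sources: ["src/hello.ts", "src/omitted.ts"],
  sourcesContent: ["export const greet = (name: string): string => 'hi ' + name;\n", null],
  mappings: "",
});

for (const [file, text] of extractEmbeddedSources(exampleMap)) {
  console.log(file, text.length);
}
```

When the original text is embedded this way, no reverse engineering of the bundle is needed: anyone holding the map file can read the sources directly.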


Internal Details and Public Reaction

The leaked source code reportedly reveals hidden features controlled by 44 feature flags, exposing more of Claude Code's inner workings. Industry reaction has ranged from wry amusement to serious concern about Anthropic's security measures. Some point to a simple misconfiguration in the package's publishing setup as the potential culprit, noting that a single error in .npmignore or package.json can lead to this kind of broad disclosure.
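As a rough sketch of the kind of safeguard such a misconfiguration bypasses, a pre-publish check can scan the list of files that would ship and flag any source maps. In npm, .npmignore acts as an exclusion list and the `files` field in package.json as an inclusion list, so a mistake in either can let a `.map` file through. The function name and the file list below are hypothetical; in practice the paths would come from whatever listing the packaging step produces.

```typescript
// Flag source map files in a list of paths that would be published.
function findSourceMaps(paths: string[]): string[] {
  return paths.filter((p) => p.toLowerCase().endsWith(".map"));
}

// Hypothetical file list, for illustration only.
const wouldPublish = ["package.json", "cli.js", "cli.js.map", "README.md"];
const flagged = findSourceMaps(wouldPublish);

if (flagged.length > 0) {
  console.log("Source maps would be published:", flagged.join(", "));
}
```

A check like this is deliberately simple: it does not inspect what a map contains, only whether one is about to be shipped.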

Following the leak, multiple GitHub users have shared their own versions of Claude Code, and some have repurposed their repositories to host alternative implementations. One individual, citing potential legal liability, switched a public repository from hosting the leaked Anthropic code to hosting a Python port of Claude Code.

A Pattern of Lapses

This latest incident follows closely on the heels of another data blunder, where descriptions of Anthropic's upcoming AI model and other sensitive documents were found in a publicly accessible data cache. The recurrence of such events raises broader questions about the robustness of Anthropic's data handling and security practices.


The exposure of Claude Code's source code is significant because it collapses the guesswork involved in understanding a packaged CLI product, transforming it into a readable source tree. This offers rival developers direct insight into Anthropic's development process for this specific tool.

Context and Background

Anthropic has been navigating a complex landscape, recently experiencing a surge in downloads after a separation from the Pentagon. The company has also faced challenges related to competition from China and internal debates regarding its "safety obsession." Furthermore, Anthropic has been involved in legal disputes, including a lawsuit against the U.S. government following an "unprecedented national security designation." The current leak occurs amidst discussions of new Anthropic models potentially disrupting the cybersecurity sector.

Observers have also noted that the released version of Claude Code depends on axios, an HTTP client library that has itself recently been compromised. This detail adds another layer of concern about the security ecosystem surrounding Anthropic's products.
