Corporate Benchmarks Meet Fragmented Codebases

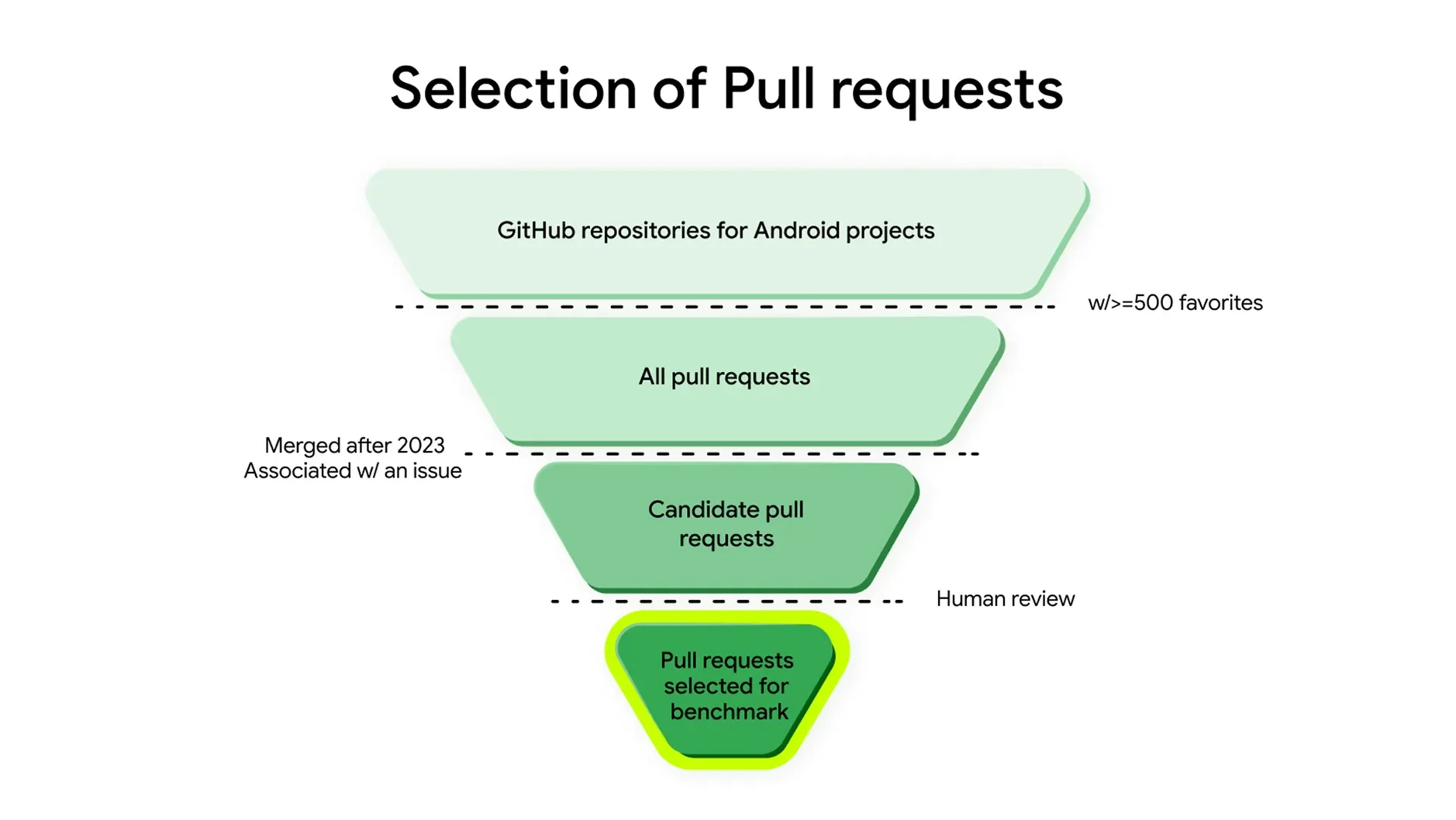

Google has released Android Bench, a technical filter designed to rank how Large Language Models (LLMs) handle the specific, often messy, labor of building mobile software. This framework uses real-world pull requests and bug reports harvested from GitHub to see if a model can actually repair a broken app or just spit out code that looks pretty but fails to run.

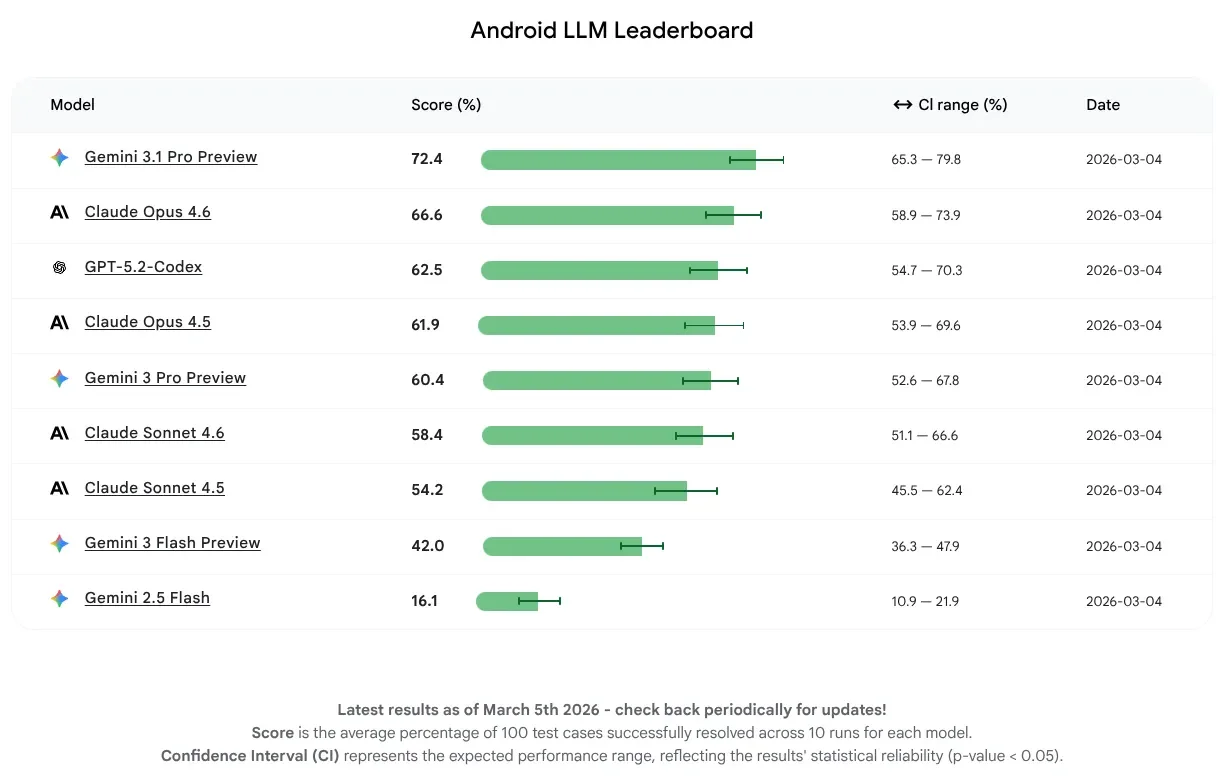

Gemini 3.1 Pro Preview currently holds the top spot, solving 72.4% of assigned tasks.

The test harness is a modified version of SWE-bench, focusing on the narrow, idiosyncratic walls of the Android ecosystem.

This shift moves away from generic coding tests toward Android-specific challenges that include resource management and battery drain issues.

The Hierarchy of Machine Logic

The gap between the highest-performing models and the budget "flash" variants is wide, revealing a steep price for speed in complex environments.

| AI Model | Success Rate (%) |

|---|---|

| Gemini 3.1 Pro Preview | 72.4% |

| Claude Opus 4.6 | 66.6% |

| GPT-5.2 Codex | 62.5% |

| Claude Opus 4.5 | 61.9% |

| Gemini 3 Pro Preview | 60.4% |

| Claude Sonnet 4.6 | 58.4% |

| Gemini 3 Flash Preview | 42.0% |

| Gemini 2.5 Flash | 16.1% |

Scouring GitHub for Truth

Instead of theoretical puzzles, the benchmark forces models to interact with public project histories. The AI must recreate actual pull requests—the digital paperwork of software fixes—to prove it understands the intent of a developer, not just the syntax of the language.

"There is a massive difference between a code snippet that looks right and one that actually functions within a complex app ecosystem."

The system verifies the results by running the code in a controlled environment to see if the reported bug actually vanishes. This method targets the "hallucination" problem where AI tools provide confident but broken solutions that fail under the weight of real-world Android development constraints.

Read More: OpenAI Trial: Elon Musk Claims Free Teslas Offered for Influence

The Home Field Advantage

While Google claims the benchmark is "model-agnostic," the reality remains that a Google-made benchmark, testing a Google-owned operating system, found a Google-built model to be the most proficient.

Critics note the inevitable alignment of incentives when the platform owner defines the "best practices" the models are graded against.

Claude and GPT models remain competitive, yet the "Flash" models—marketed for speed and low cost—fail significantly when tasked with the heavy lifting of Android's mechanical toil.

Skeletal Background

The introduction of Android Bench follows a year of Google aggressively stitching AI into its Android Studio workflow. The company is attempting to automate the "drudge work" of mobile development—those repetitive, lopsided tasks that drain time from human creators. By creating a leaderboard, Google is pressuring other LLM makers to optimize for their specific OS architecture, ensuring that the future of app building remains tethered to Google's proprietary definitions of "efficiency."