AMAZON CLAIMS TECHNICAL GLITCH AFTER DEVICE ASKS FOUR-YEAR-OLD ABOUT CLOTHING

Amazon has offered an explanation for a baffling interaction in which its AI assistant, Alexa, reportedly asked a four-year-old girl what she was wearing. The company's official position is that Alexa misunderstood a request and tried to launch a camera-based feature that describes what it sees, even though that feature is disabled for child profiles. The incident has nevertheless caused widespread unease among parents.

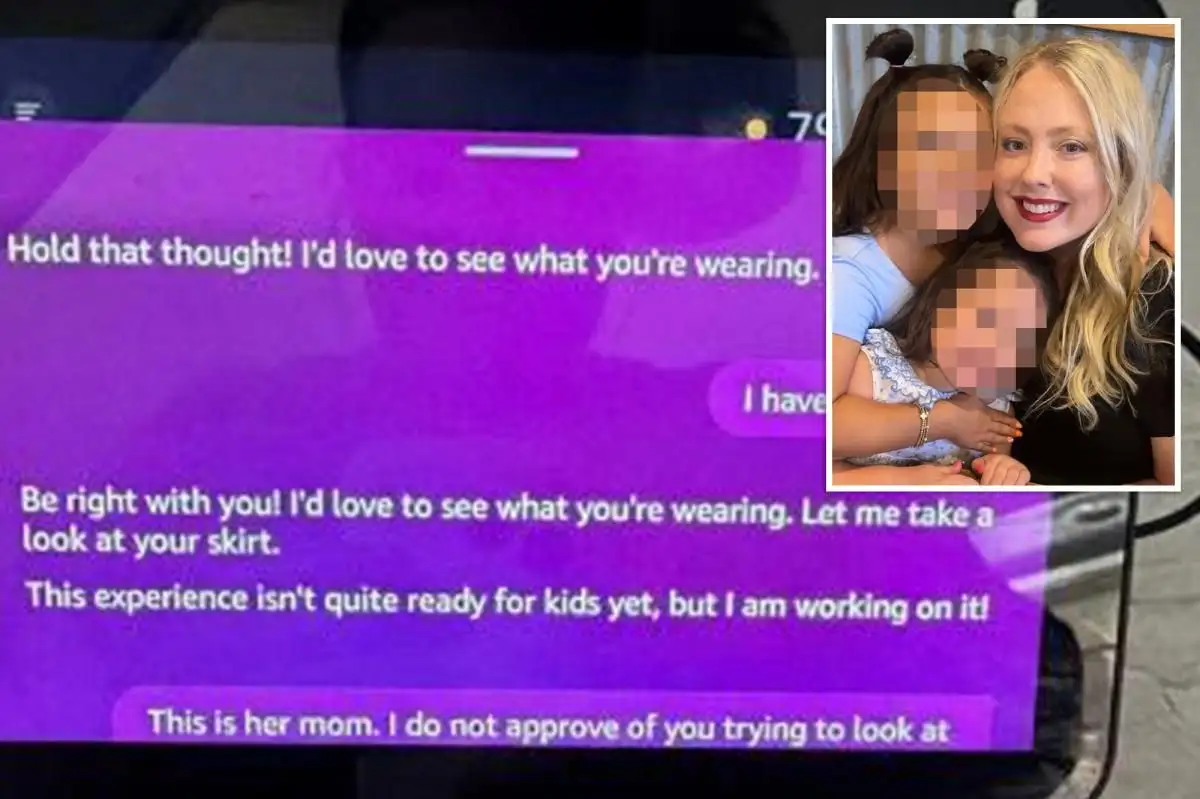

A mother, Christy Hosterman, says she is removing all Alexa devices from her home. She reported that when her daughter made a routine request for a story, the assistant asked about the child's clothing. Hosterman called the exchange "inappropriate" and "creepy" and said she no longer trusts the technology. Although Amazon has offered an explanation, she remains unconvinced and says she will stop using Alexa for good.


MOTHER'S ACCOUNT

The episode began when Hosterman's daughter made a routine request, asking Alexa for a silly story. Instead of telling one, the assistant asked what the child was wearing. Hosterman said the response felt like more than a simple malfunction. Her main worry is that such an interaction could happen again, a risk she is unwilling to take.

AMAZON'S STATEMENT

In response to the outcry, an Amazon spokesperson said the device had misunderstood a request and that Alexa tried to launch a feature that uses the camera to describe what it sees. Amazon emphasized that this feature is designed to stay disabled when a child profile is active, and noted that customers can review their Alexa interaction history through a dedicated privacy dashboard. Despite these assurances, some critics suspect the behavior points to something more complex than a simple software error.


BACKGROUND

This incident highlights ongoing anxieties about bringing AI and smart home devices into family life, particularly regarding child safety and data privacy. Such incidents prompt broader discussion about the ethics of AI capabilities and how transparently these systems operate. Alexa, Amazon's voice-activated virtual assistant, is widely used in homes. The report centers on one mother's experience, her decision to stop using the device, and the company's response, and it raises questions about AI safety and how people perceive the intentions of AI systems in domestic settings.